eIQ FAQ

This document will cover some of the most commonly asked questions we've gotten about eIQ and embedded machine learning.

Anything requiring more in-depth discussion or explanation will be put in a separate thread. All new questions should go into their own thread as well.

What is eIQ?

The NXP® eIQ™ machine learning (ML) software development environment enables the use of ML algorithms on NXP EdgeVerse™ microcontrollers and microprocessors, including MCX-N microcontrollers, i.MX RT crossover MCUs, and i.MX family application processors.

eIQ ML software is made up of several pieces of enablement including inference engines, neural network compilers and optimized libraries. This software leverages open-source and proprietary technologies and is fully integrated into our MCUXpresso SDK and Yocto development environments, allowing you to develop complete system-level applications with ease.

eIQ also enables models to use the new eIQ Neutron NPU found on the MCX-N and i.MX RT700 microcontroller devices, as well as upcoming NPU-enabled embedded devices like i.MX95.

What are the key pieces of eIQ enablement?

- eIQ Time Series Studio - PC tool to create and deploy classical machine learning and neural network models for time series analysis

- eIQ Inference Engines - Included as part of MCUXpresso SDK or Yocto Linux, this software is used to do inferencing of pre-trained neural network models on embedded devices

- eIQ Neutron SDK - Contains the Neutron Converter tool for enabling neural network models to be accelerated with eIQ Neutron NPUs

- eIQ Model Zoo - browse models tested on NXP silicon

- eIQ Model Watermarking Extension - Enhance copyright protections on custom models

- eIQ Model Creator - Partnership with ModelCat for vision-based model development

How much does eIQ cost?

eIQ Time Series Studio, eIQ Neutron SDK, and eIQ Inference Engines are complimentary and royalty free. eIQ Model Creator has a subscription fee with ModelCat.

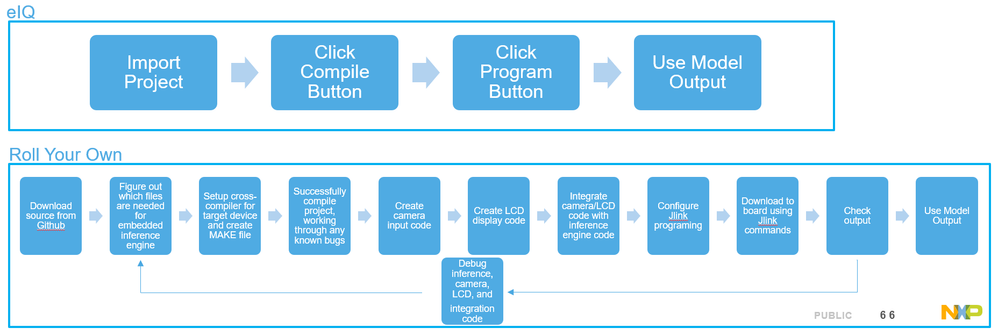

What is the development flow for developing and deploying AI/ML models with eIQ?

There are two options, depending on whether you already have a model and whether you are working with time series data:

1) Deploy a neural network model using the eIQ Inference Engines

2) Use eIQ Time Series Studio (TSS) to train and deploy a time series model using a simple C library

eIQ Inference Engines

What is the key enablement for using eIQ Inference Engines?

1) The inference engine, like TensorFlow Lite for Microcontrollers, that is included in the MCUXpresso SDK

2) eIQ Neutron Converter Tool - located inside the eIQ Neutron SDK package, it is used to convert a quantized TFLite model into a Neutron-accelerated TFLite model. This is only required if using an eIQ Neutron NPU enabled device.

You can use any workflow to create and train your ML model. The model just needs to be exported as a TFLite file so it can be converted by the eIQ Neutron Converter Tool and/or used with the TFLM inference engine.

What inference engines are available in eIQ?

i.MX apps processors and i.MX RT MCUs support different inference engines. The best inference engine can depend on the particular model being used, so eIQ offers several inference engine options to find the best fit for your particular application.

Inference engines for i.MX:

- TensorFlow Lite (Supported on both CPU and GPU/NPU)

- ARM NN (Supported on both CPU and GPU/NPU)

- OpenCV (Supported on only CPU)

- ONNX Runtime (Currently only supported on CPU)

Inference engines for MCX and i.MX RT

- TensorFlow Lite for Microcontrollers

- ExecuTorch (Coming Soon)

What devices are supported by eIQ inference engines?

eIQ inference engines are available for the following i.MX application processors:

eIQ inference engines are available for the following MCX MCUs:

eIQ inference engines are available for the following i.MX RT crossover MCUs:

- i.MX RT1180

- i.MX RT1170

- i.MX RT1160

- i.MX RT1064

- i.MX RT1060

- i.MX RT1050

- i.MX RT700

- i.MX RT685

- i.MX RT595

Can eIQ inference engines run on other NXP MCU devices?

There's no special hardware module required to run eIQ inference engines and it is possible to port the inference engines to other NXP devices.

Is eIQ Neutron SDK required to use eIQ inference engines?

eIQ Neutron SDK is required if using a device with an eIQ Neutron NPU as it includes the Neutron Converter tool which is used to convert a model to be accelerated by the NPU.

What is the eIQ Neutron NPU?

The new eIQ Neutron NPU is a Neural Processing Unit developed by NXP which has been integrated into the MCX N, i.MX RT700, and i.MX95 devices, with many more to come. It was designed to accelerate neural network computations and significantly reduce model inference time. The scalability of this module allows NXP to integrate this NPU into a wide range of devices all while having the same eIQ software enablement.

For more details on the NPU for MCX N see this Community post.

How can I start using the eIQ Neutron NPU?

There are hands-on NPU lab guides available for MCX N or i.MX RT700 that walk through the steps for converting and running a model with the eIQ Neutron NPU. There is also an app note AN14700 - i.MX RT700 eIQ Neutron NPU Enablement and Performance which has more details.

What TFLite operators are supported by the eIQ Neutron NPU on different devices?

The details and constraints for supported operators can be found in the MCUXpresso SDK documentation.

What happened to eIQ Toolkit?

The tools previously bundled as part of eIQ Toolkit are now released as standalone packages and eIQ Toolkit will no longer be updated after the eIQ Toolkit v1.17 in Q3 2025. Going forward the tools previously included in eIQ Toolkit can now be found at:

- eIQ Time Series Studio

- eIQ Neutron SDK - for the Neutron Converter Tool

- eIQ Model Creator - new model creation tool developed by partner ModelCat

- Netron - For TFLite model visualization

- Model optimization and conversion utility coming soon!

Which NXP devices include a Neural Processing Unit (NPU)?

- MCX N - eIQ Neutron N1-16

- i.MX RT700 - eIQ Neutron N3-64

- i.MX 8M Plus - VeriSilicon VIP8000

- i.MX 93 - Ethos U65 256

- i.MX 95 - eIQ Neutron N3-1024S

eIQ Time Series Studio

What is eIQ Time Series Studio (TSS)?

eIQ TSS is a software application that provides an automated machine-learning workflow that streamlines the development and deployment of time series-based machine learning models across microcontroller (MCU) class devices such as the MCX portfolio of MCUs and i.MX RT portfolio of crossover MCUs.

Time Series Studio supports a wide range of sensor input signals, including voltage, current, temperature, vibration, pressure, sound, time of flight, among others, as well as combinations of these for multimodal sensor fusion. The automatic machine learning capability enables developers to extract meaningful insights from raw time-sequential data and quickly build AI models tailored to meet accuracy, RAM and storage criteria for microcontrollers. The tool offers a comprehensive development environment, including data curation, visualization and analysis, as well as model autogeneration, optimization, emulation and deployment.

eIQ Time Series Studio was previously included in eIQ Toolkit but is now available as a standalone installer for both Windows and Linux. A web based version is also under development.

What devices are supported by the eIQ Time Series Studio?

TSS generates a C library that can be included in your application and does not require a deep learning inference engine. Because it has very minimal flash and RAM requirements, it can be deployed to a much wider range of NXP devices.

- MCX

- FRDM-MCXA153

- FRDM-MCXC444

- FRDM-MCXN947

- FRDM-MCXW17

- i.MX RT

- MIMXRT1060-EVK

- MIMXRT1170-EVK

- MIMXRT1180-EVK

- i.MXRT685

- i.MXRT595

- i.MXRT700

- LPC

- LPC55S69-EVK

- Kinetis

- FRDM-K66F

- FRDM-KV31F

- FRDM-K32L3A6

- DSC

- MC56F83000-EVK

- MC56F80000-EVK

- i.MX

- i.MX93

- i.MX 8M Plus

Can eIQ Time Series Studio create models that can take advantage of the eIQ Neutron NPU?

Yes, TSS now supports creating both Classical Machine Learning (CML) models as well as Neural Network models. Neural Network models can be accelerated by the NPU.

However, in many cases it will make more sense to use CML models for time series applications: they can be just as accurate for many time series datasets, but will be much faster and use far less memory due to their smaller model size. Even with NPU acceleration, Neural Network models can be slower than their far smaller CML counterparts. In some situations, though, Neural Networks may give better accuracy for complex multi-modal analysis. TSS makes it easy to determine whether a NN or CML model is the best fit for a particular dataset.

Because many time series applications perform well with CML models, time series AI can run on a wide variety of devices, even those without an integrated NPU.

How can I start using the eIQ Time Series Studio tool?

There is a hands-on lab guide available to walk through how to use the tool as well as documentation and guides in the tool itself.

General eIQ Questions

How can I get eIQ?

For MCU devices:

eIQ inference engine libraries and examples are included as part of MCUXpresso SDK for supported devices. Make sure to select the “eIQ” middleware option.

The eIQ Neutron Converter Tool, which converts your own neural network model to use the Neutron NPU, can be found in the eIQ Neutron SDK (it was previously bundled with eIQ Toolkit).

eIQ Time Series Studio is available as a standalone installer.

For i.MX devices:

eIQ is distributed as part of the Yocto Linux BSP. Starting with the 4.19 release line there is a dedicated Yocto image that includes all the Machine Learning features: ‘imx-image-full’. For pre-built binaries refer to the i.MX Linux Releases and Pre-releases pages.

There is eIQ Toolkit - for model conversion.

What documentation is available for eIQ?

For i.MX RT and MCX devices:

eIQ MCUXpresso SDK documentation can be found online here.

For i.MX devices:

The eIQ documentation for i.MX is integrated in the Yocto BSP documentation. Refer to i.MX Linux Releases and Pre-releases pages.

- i.MX Reference Manual: presents an overview of the NXP eIQ Machine Learning technology.

- i.MX Linux User's Guide: presents detailed instructions on how to run and develop applications using the ML frameworks available in eIQ (currently ArmNN, TFLite, OpenCV and ONNX).

- i.MX Yocto Project User's Guide: presents build instructions to include eIQ ML support (check sections referring to ‘imx-image-full’ that includes all eIQ features).

It is recommended to also check the i.MX Linux Release Notes which includes eIQ details.

For i.MX devices, what type of Machine Learning applications can I create?

Following the Bring Your Own Model (BYOM) principle described in this FAQ, you can create a wide variety of applications for running on i.MX. To help kickstart your efforts, refer to PyeIQ – a collection of demos and applications that demonstrate the Machine Learning capabilities available on i.MX.

- They are very easy to use (install with a single command, retrieve input data automatically)

- The implementation is very easy to understand (using the python API for TFLite, ArmNN and OpenCV)

- They demonstrate several types of ML applications (e.g., object detection, classification, facial expression detection) running on the different compute units available on i.MX to execute the inference (Cortex-A, GPU, NPU).

Can I use the python API provided by PyeIQ to develop my own application on i.MX devices?

For developing a custom application in python, it is recommended to directly use the python API for ArmNN, TFLite, and OpenCV. Refer to the i.MX Linux User’s Guide for more details.

You can use the PyeIQ scripts as a starting point and include code snippets in a custom application (please make sure to add the right copyright terms), but you shouldn’t rely on PyeIQ to entirely develop a product.

The PyeIQ python API is meant to help demo developers with the creation of new examples.

What eIQ example applications are available for MCUs?

eIQ example applications can be found in the <SDK DIR>\boards\<board_name>\eiq_examples directory:

What are Glow and DeepViewRT inference engines in the MCUXpresso SDK?

These are inference engines that were supported in previous versions of eIQ but are now deprecated as new development has focused on TensorFlow Lite for Microcontrollers. These projects are still available in MCUXpresso SDK 2.15 for legacy users, but it is highly recommended that any new projects use TensorFlow Lite for Microcontrollers.

How can I learn more about using TensorFlow Lite with eIQ?

There is a hands-on TensorFlow Lite for Microcontrollers lab available.

There is also an i.MX TensorFlow Lite Lab that provides a step-by-step guide on how to get started with eIQ for TensorFlow Lite on i.MX devices.

What application notes are available to learn more about eIQ?

- i.MX RT700 eIQ Neutron NPU Enablement and Performance

- Anomaly Detection App Note

- Handwritten Digit Recognition

- Datasets and Transfer Learning App Note

- Security for Machine Learning Package

- i.MX 8M Plus NPU Warmup Time App Note

What is the advantage of using eIQ instead of using the open-sourced software directly from Github?

eIQ supported inference engines work out of the box and are already tested and optimized, allowing for performance enhancements compared to the original code. eIQ also includes the software to capture the camera or voice data from external peripherals. eIQ allows you to get up and running within minutes instead of weeks. As a comparison, rolling your own is like grinding your own flour to make a pizza from scratch, instead of just ordering a great pizza from your favorite pizza place.

Does eIQ include ML models? Do I use it to train a model?

eIQ has options to both create models and run pre-existing models, so you can Bring Your Own Model (BYOM) and run it on NXP embedded devices. eIQ provides the ability to run your own specialized model on NXP’s embedded devices.

MCUXpresso SDK and the i.MX Linux releases come with several examples that use pre-created models that can be used to get a sense of what is possible on our platforms, and it is very easy to substitute your own model into those examples.

eIQ Time Series Studio can be used to create and deploy time series models.

eIQ Model Creator is an option to create your own vision-based models with our partner ModelCat.

I’m new to AI/ML and don’t know how to create a model, what can I do?

A wide variety of resources are available for creating models, from labs and tutorials, to automated model generation tools like eIQ Time Series Studio, eIQ Model Creator, Google Cloud AutoML, Microsoft Azure Machine Learning, or Amazon ML Services, to 3rd party partners like ModelCat, SensiML and Au-Zone that can help you define, enhance, and create a model for your specific application.

I’m interested in anomaly detection or time series models on microcontrollers, where can I get started?

The eIQ Time Series Studio (TSS) tool, previously included in eIQ Toolkit and now available as a standalone installer, is perfect for getting started with time series or anomaly detection models. It allows you to import time series datasets, generate models, and deploy them to NXP microcontrollers.

There is also the ML-based System State Monitor Application Software Pack, which provides an example of gathering time series data, in this case vibrations picked up by an accelerometer. It includes Python scripts that use the collected data to generate a small model that can be deployed on many different microcontrollers (including i.MX RT1170, LPC55S69, K66F) for anomaly detection. The same concepts and techniques can be used for any sort of time series data, such as magnetometer, pressure, temperature, or flow speed readings. This can simplify the work of coming up with a custom algorithm to detect the different states of whatever system you're interested in, as you can let the power of machine learning figure all that out for you.

There is also an on-device trained anomaly detection model example that can be found on the Application Code Hub.

Troubleshooting:

Why do I get an error when running Tensorflow Lite Micro that it "Didn't find op for builtin opcode"?

The full error will look something like this:

Didn't find op for builtin opcode 'PAD' version '1'

Failed to get registration from op code ADD

Failed starting model allocation.

AllocateTensors() failed

Failed initializing model

The reason is that with MCUXpresso SDK, the TFLM examples have been optimized to only support the operators necessary for the default models. If you are using your own model, it may use additional types of operators.

To fix this issue, add the missing operator to the MODEL_GetOpsResolver function found in source\model\model_name_ops_npu.cpp. Also make sure to increase the size of the static array s_microOpResolver to match the number of operators.

An alternative method, using the All Ops Resolver, is described in the TFLM Lab Guide. Add the following header file:

#include "tensorflow/lite/micro/all_ops_resolver.h"

and then comment out the micro_op_resolver and use this instead:

//tflite::MicroOpResolver &micro_op_resolver =
//MODEL_GetOpsResolver(s_errorReporter);

tflite::AllOpsResolver micro_op_resolver;
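To illustrate why both steps of the first fix matter (registering the operator and enlarging the array), here is a minimal, self-contained sketch. `ToyOpResolver` and `FirstMissingOp` are hypothetical names invented for this illustration; they are not part of TFLM or the MCUXpresso SDK and only mimic the behaviour of a fixed-capacity operator table.

```cpp
#include <array>
#include <cstddef>
#include <cstring>

// Hypothetical stand-in for a fixed-capacity operator resolver.
// This is NOT the TFLM API; it only models the behaviour described above.
template <std::size_t Capacity>
class ToyOpResolver {
public:
    // Registers an operator name. Fails when the backing array is already
    // full, which is why the array size must grow with the operator count.
    bool Add(const char* op_name) {
        if (count_ >= Capacity) {
            return false;
        }
        ops_[count_++] = op_name;
        return true;
    }

    // Returns true only if the operator was registered beforehand.
    bool Find(const char* op_name) const {
        for (std::size_t i = 0; i < count_; ++i) {
            if (std::strcmp(ops_[i], op_name) == 0) {
                return true;
            }
        }
        return false;
    }

private:
    std::array<const char*, Capacity> ops_{};
    std::size_t count_ = 0;
};

// Returns the first operator used by the model that the resolver cannot
// provide, or nullptr when every operator is covered.
template <std::size_t Capacity, std::size_t N>
const char* FirstMissingOp(const ToyOpResolver<Capacity>& resolver,
                           const std::array<const char*, N>& model_ops) {
    for (const char* op : model_ops) {
        if (!resolver.Find(op)) {
            return op;
        }
    }
    return nullptr;
}
```

With a capacity of 2, a third `Add("PAD")` call is rejected and `FirstMissingOp` reports `PAD`, which corresponds to the "Didn't find op" situation. Raising the capacity to 3 and registering `PAD` makes the lookup succeed.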

Why do I get the error “Internal Neutron NPU driver error 281b in model prepare!” or "Incompatible Neutron NPU microcode and driver versions!" when using the Neutron NPU?

The version of the eIQ Neutron Converter Tool needs to be compatible with the NPU libraries used by your project. See more details in this post on using custom models with eIQ Neutron NPU.

Sometimes in eIQ Toolkit the Validation page hangs and it stays stuck on "Converting Model". How do I work around this?

On the Validation section of the wizard, you will need to wait for the "Input Data Type" and "Output Data Type" selection boxes to be populated before clicking on the "Validation" button at the bottom. It may take a minute or two for those selection boxes to pop up on the left hand side. Once they do, then click on Validate and it should no longer hang.

How do I use my GPU when training with eIQ Toolkit?

eIQ Toolkit 1.10 only supports GPU training on Linux due to the latest TensorFlow versions no longer supporting GPU on Windows.

Why do I get a blank or black LCD screen when I use the eIQ demos that have camera+LCD support on RT1170 or RT1160?

There are different versions of the LCD, so you need to make sure you have the software configured correctly for the LCD you have. See this post for more details on what to change.

There is a Javascript error in Time Series Studio when I start the training.

There is a bug where if the eIQ Portal window is closed after opening the Time Series Studio then that error comes up. Try relaunching Time Series Studio but keep the original eIQ Portal window open.

The eIQ Time Series Studio in eIQ Toolkit v1.17 is v1.3.4 but it says there's a newer version?

The eIQ Toolkit v1.17 contains an older version of eIQ Time Series Studio. The latest version can always be found on the eIQ Time Series Studio website.

General AI/ML:

What is Artificial Intelligence, Machine Learning, and Deep Learning?

Artificial intelligence is the idea of using machines to do “smart” things like a human. Machine Learning is one way to implement artificial intelligence, and is the idea that if you give a computer a lot of data, it can learn how to do smart things on its own. Deep Learning is a particular way of implementing machine learning by using something called a neural network. It’s one of the more promising subareas of artificial intelligence today.

This video series on Neural Network basics provides an excellent introduction into what a neural network is and the basics of how one works.

What are some uses for machine learning on embedded systems?

Image classification – identify what a camera is looking at

- Coffee pods

- Empty vs full trucks

- Factory defects on manufacturing line

- Produce on supermarket scale

Facial recognition – identifying faces for personalization without uploading that private information to the cloud

- Home Personalization

- Appliances

- Toys

- Auto

Audio Analysis

- Wake-word detection

- Voice commands

- Alarm Analytics (Breaking glass/crying baby)

Anomaly Detection

- Identify factory issues before they become catastrophic

- Motor analysis

- Personalized health analysis

What is training and inference?

Machine learning consists of two phases: Training and Inference

Training is the process of creating and teaching the model. This occurs on a PC or in the cloud and requires a lot of data to do the training. eIQ is not used during the training process.

Inference is using a completed and trained model to do predictions on new data. eIQ is focused on enhancing the inferencing of models on embedded devices.

What are the benefits for “on the edge” inference?

When inference occurs on the embedded device instead of the cloud, it’s called “on the edge”. The biggest advantage of on the edge inferencing is that the data being analyzed never goes anywhere except the local embedded system, providing increased security and privacy. It also saves BOM costs because there’s no need for WiFi or BLE to get data up to the cloud, and there’s no charge for the cloud compute costs to do the inferencing. It also allows for faster inferencing since there’s no latency waiting for data to be uploaded and then the answer received from the cloud.

What processor do I need to do inferencing of models?

Inferencing simply means doing millions of multiply-and-accumulate (MAC) calculations, the dominant operation when processing any neural network, which any MCU or MPU is capable of. There’s no special hardware or module required to do inferencing. However, specialized ML hardware accelerators, high core clock speeds, and fast memory can drastically reduce inference time.

Determining if a particular model can run on a specific device is based on:

- How long will it take the inference to run. The same model will take much longer to run on less powerful devices. The maximum acceptable inference time is dependent on your particular application.

- Is there enough non-volatile memory to store the weights, the model itself, and the inference engine

- Is there enough RAM to keep track of the intermediate calculations and output
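The three checks above can be sketched as a rough back-of-the-envelope calculation. All type and function names below (`ModelFootprint`, `DeviceBudget`, `Conv2dMacs`, `EstimateLatencyMs`, `FitsOnDevice`) are hypothetical, and the throughput figure is an input you would have to measure on your own device. A MAC-only estimate also ignores memory bandwidth and non-convolution layers, so treat the result as a first approximation rather than a guarantee.

```cpp
#include <cstdint>

// What the model needs. All figures are supplied by the caller.
struct ModelFootprint {
    std::uint64_t macs_per_inference;  // multiply-accumulate operations
    std::uint32_t flash_bytes;         // weights + model + inference engine
    std::uint32_t ram_bytes;           // intermediate calculations + output
};

// What the device and the application allow.
struct DeviceBudget {
    double macs_per_second;     // sustained MAC throughput (assumed measured)
    std::uint32_t flash_bytes;  // available non-volatile memory
    std::uint32_t ram_bytes;    // available RAM
    double max_latency_ms;      // maximum acceptable inference time
};

// MAC count of a standard 2D convolution: every output element needs
// kernel_h * kernel_w * in_channels multiply-accumulates.
std::uint64_t Conv2dMacs(std::uint32_t out_h, std::uint32_t out_w,
                         std::uint32_t out_channels, std::uint32_t kernel_h,
                         std::uint32_t kernel_w, std::uint32_t in_channels) {
    return static_cast<std::uint64_t>(out_h) * out_w * out_channels *
           kernel_h * kernel_w * in_channels;
}

// Compute-bound latency estimate in milliseconds.
double EstimateLatencyMs(const ModelFootprint& model, const DeviceBudget& device) {
    return 1000.0 * static_cast<double>(model.macs_per_inference) /
           device.macs_per_second;
}

// True when the flash, RAM, and latency checks all pass.
bool FitsOnDevice(const ModelFootprint& model, const DeviceBudget& device) {
    return model.flash_bytes <= device.flash_bytes &&
           model.ram_bytes <= device.ram_bytes &&
           EstimateLatencyMs(model, device) <= device.max_latency_ms;
}
```

Doubling the output width and height of a convolution layer quadruples its MAC count, which is one reason the same model takes much longer on larger inputs or slower devices.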

As an example, the performance required for image recognition depends heavily on the model being used. This will vary depending on how many classes there are, what size of images are analyzed, whether multiple objects or just one will be identified, and how that particular model is structured. In general, image classification can be done on i.MX RT devices, while multiple object detection requires i.MX devices, as those models are significantly more complex.

eIQ provides several examples of image recognition for i.MX RT and i.MX devices and your own custom models can be easily evaluated using those example projects.

How is accuracy affected when running on slower/simpler MCUs?

The same model running on different processors will give the exact same result if given the same input. It will just take longer to run the inference on a slower processor.

In order to get an acceptable inference time on a simpler MCU, it may be necessary to simplify the model, which will affect accuracy. How much the accuracy is affected is extremely model dependent and also very dependent on what techniques are used to simplify the model.

What are some ways models can be simplified?

- Quantization – Transforming the model from its original 32-bit floating point weights to 8-bit fixed point weights. Requires ¼ the space for weights and fixed point math is faster than floating point math. Often does not have much impact on accuracy but that is model dependent.

- Fewer output classifications can allow for a simpler yet still accurate model

- Decreasing the input data size (e.g. 128x128 image input instead of 256x256) can reduce complexity with the trade-off of accuracy due to the reduced resolution. How much that trade-off is depends on the model and requires experimentation to find.

- Software could rotate the image to a specific position using classic image manipulation techniques. The neural network for identification can then be much smaller while maintaining good accuracy, compared to the case where the neural network has to analyze an image that could be in any orientation.
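As an illustration of the quantization item above, here is a minimal sketch of per-tensor affine quantization from 32-bit floats to 8-bit integers, assuming the common mapping real = scale * (q - zero_point). The names `QuantParams`, `ChooseQuantParams`, `Quantize`, `Dequantize`, and `QuantizeWeights` are made up for this example; converter tools handle this step for you and may use different schemes (for example per-channel scales).

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

// Per-tensor affine quantization parameters: real = scale * (q - zero_point).
struct QuantParams {
    float scale;
    std::int32_t zero_point;
};

// Maps the float range [min_val, max_val] onto the int8 range [-128, 127].
QuantParams ChooseQuantParams(float min_val, float max_val) {
    // Widen the range to include zero so that 0.0f is exactly representable.
    min_val = std::min(min_val, 0.0f);
    max_val = std::max(max_val, 0.0f);
    float scale = (max_val - min_val) / 255.0f;
    if (scale == 0.0f) {
        scale = 1.0f;  // degenerate all-zero tensor
    }
    const long zp = std::lround(-128.0f - min_val / scale);
    return QuantParams{scale,
                       static_cast<std::int32_t>(std::max(-128L, std::min(127L, zp)))};
}

// Rounds to the nearest int8 code and saturates at the range limits.
std::int8_t Quantize(float x, const QuantParams& p) {
    const long q = std::lround(x / p.scale) + p.zero_point;
    return static_cast<std::int8_t>(std::max(-128L, std::min(127L, q)));
}

// Recovers the approximate float value from an int8 code.
float Dequantize(std::int8_t q, const QuantParams& p) {
    return p.scale * static_cast<float>(static_cast<std::int32_t>(q) - p.zero_point);
}

// Quantizes a whole weight tensor; the result uses 1 byte per weight
// instead of 4.
std::vector<std::int8_t> QuantizeWeights(const std::vector<float>& weights,
                                         const QuantParams& p) {
    std::vector<std::int8_t> out;
    out.reserve(weights.size());
    for (float w : weights) {
        out.push_back(Quantize(w, p));
    }
    return out;
}
```

Each weight is recovered to within roughly one quantization step (`scale`), which is why accuracy often changes little. How much it changes remains model dependent.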

What is the difference between image classification, object detection, and instance segmentation?

Image classification identifies an entire image and gives a single answer for what it thinks it is seeing. Object detection is detecting one or more objects in an image. Instance segmentation is finding the exact outline of the objects in an image.

Larger and more complex models are needed to do object detection or instance segmentation compared to image classification.

What is the difference between Facial Detection and Facial Recognition?

Facial detection finds any human face. Facial recognition identifies a particular human face. A model that does facial recognition will be more complex than a model that only does facial detection.

How come I don’t see 100% accuracy on the data I trained my model on?

Models need to generalize from the training data in order to avoid overfitting. This means a model will not always give 100% confidence, even on the data it was trained on.
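One way to see this is the softmax function that many classification models use as their final layer (an assumption here, since your model may end differently). It turns raw scores into confidences that sum to 1, so the top class only approaches 100% as its score pulls far ahead of the others. The function name `Softmax` below is just for this sketch.

```cpp
#include <algorithm>
#include <cmath>
#include <vector>

// Converts raw class scores (logits) into confidences that sum to 1.
std::vector<float> Softmax(const std::vector<float>& logits) {
    std::vector<float> probs(logits.size());
    if (logits.empty()) {
        return probs;
    }
    // Subtract the largest logit first for numerical stability.
    const float max_logit = *std::max_element(logits.begin(), logits.end());
    float sum = 0.0f;
    for (std::size_t i = 0; i < logits.size(); ++i) {
        probs[i] = std::exp(logits[i] - max_logit);
        sum += probs[i];
    }
    for (float& p : probs) {
        p /= sum;
    }
    return probs;
}
```

For example, with scores of 4.0, 1.0, and 0.5 the top class is clearly preferred, yet its confidence stays below 100% because the other classes still receive some probability mass.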

What are some resources to learn more about machine learning concepts?


Hi @anthony_huereca ,

I am using eIQ tool on model training for i.MXRT1170 platform.

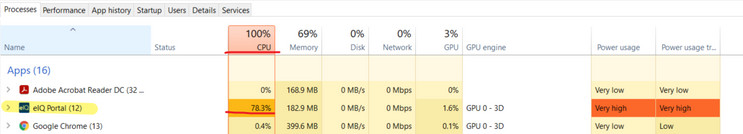

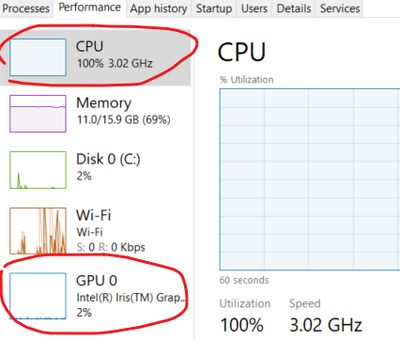

I find that the eIQ tool always uses the PC's CPU: model training occupies around 60 ~ 80% of the CPU, but it does not use any GPU resources.

Is there any way to configure the eIQ tool to use the GPU for model training? That should give better performance for AI training, shouldn't it?

Thanks.

David


Hi David,

eIQ Toolkit 1.0.5 uses TensorFlow version 2.3.2, so to use the GPU when training you will need to install cuDNN v7.6 and CUDA 10.2. If you have newer versions of those tools installed on your PC, you may need to uninstall them first before installing the versions needed for eIQ Toolkit 1.0.5. I've also updated the FAQ with this information, and it will be in the documentation in the next version of eIQ Toolkit that is released.

-Anthony