The “Hello World” of TensorFlow Lite


Goal

Our goal is to train a model that can take a value, x, and predict its sine, y. In a real-world application, if you needed the sine of x, you could just calculate it directly. However, by training a model to approximate the result, we can demonstrate the basics of machine learning.

TensorFlow and Keras

TensorFlow is a set of tools for building, training, evaluating, and deploying machine learning models. Originally developed at Google, TensorFlow is now an open-source project built and maintained by thousands of contributors across the world. It is one of the most widely used frameworks for machine learning, and most developers interact with it via its Python library. In this post, we’ll use Keras, TensorFlow’s high-level API for building and training deep learning networks.

To enable TensorFlow on mobile and embedded devices, Google developed the TensorFlow Lite framework. It gives these computationally restricted devices the ability to run inference on pre-trained TensorFlow models that were converted to TensorFlow Lite. These converted models cannot be trained any further but can be optimized through techniques like quantization and pruning.
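
To make the quantization idea concrete, here is a minimal sketch of the affine int8 mapping used by 8-bit quantized models, where a real value is approximated as scale * (quantized_value - zero_point). The names QuantParams, ChooseQuantParams, Quantize and Dequantize are hypothetical helpers written for this illustration; they are not part of the TensorFlow Lite API, and the converter may choose its parameters differently.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstdint>

// Hypothetical helper type (not a TensorFlow Lite type): parameters of an
// affine int8 quantization, real_value ~= scale * (quantized - zero_point).
struct QuantParams {
    float scale;
    int zero_point;
};

// Choose parameters so that [min_val, max_val] maps onto [-128, 127].
QuantParams ChooseQuantParams(float min_val, float max_val) {
    assert(max_val > min_val);
    const float scale = (max_val - min_val) / 255.0f;
    const int zero_point =
        static_cast<int>(std::lround(-128.0f - min_val / scale));
    return {scale, zero_point};
}

// Map a real value to int8, saturating at the ends of the int8 range.
std::int8_t Quantize(float value, const QuantParams& p) {
    const long q = std::lround(value / p.scale) + p.zero_point;
    return static_cast<std::int8_t>(std::max(-128L, std::min(127L, q)));
}

// Map an int8 value back to the real value it represents.
float Dequantize(std::int8_t q, const QuantParams& p) {
    return p.scale * static_cast<float>(static_cast<int>(q) - p.zero_point);
}
```

For a value range of -1 to 1, the scale is 2/255, so a quantize/dequantize round trip changes a value by less than about 0.008. This small loss of precision is the trade-off for storing each value in one byte instead of four.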

Building the Model

To build the model, we follow the steps below.

- Obtain a simple dataset.

- Train a deep learning model.

- Evaluate the model’s performance.

- Convert the model to run on-device.
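
As an illustration of the first step, the sketch below generates a small dataset of noisy sine samples. The notebook does its data preparation in Python; this version is written in C++ only to match the demo code in this post. It assumes the dataset consists of x values drawn uniformly from 0 to 2π, each paired with sin(x) plus a little Gaussian noise. The function name MakeNoisySineDataset and its parameters are hypothetical.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <random>
#include <utility>
#include <vector>

// Hypothetical helper: returns `count` (x, y) pairs where x is drawn
// uniformly from 0 to 2*pi and y = sin(x) + Gaussian noise.
std::vector<std::pair<float, float>> MakeNoisySineDataset(
    std::size_t count, float noise_stddev, unsigned seed) {
    assert(noise_stddev > 0.0f);  // std::normal_distribution needs stddev > 0
    const float kTwoPi = 2.0f * 3.14159265359f;
    std::mt19937 rng(seed);
    std::uniform_real_distribution<float> x_dist(0.0f, kTwoPi);
    std::normal_distribution<float> noise(0.0f, noise_stddev);

    std::vector<std::pair<float, float>> samples;
    samples.reserve(count);
    for (std::size_t i = 0; i < count; ++i) {
        const float x = x_dist(rng);
        const float y = std::sin(x) + noise(rng);
        samples.emplace_back(x, y);
    }
    return samples;
}
```

Calling MakeNoisySineDataset(1000, 0.1f, 42u) would give 1000 samples scattered around the sine curve. The noise stands in for the imperfection of real-world measurements, so the model has to learn the underlying curve rather than memorize exact points.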

Please navigate to the URL in your browser to open the notebook directly in Colab. The notebook demonstrates the process of creating a TensorFlow model and converting it for use with TensorFlow Lite.

Deploy the model to the RT MCU

- Hardware Board: MIMXRT1050 EVK Board

Fig 1 MIMXRT1050 EVK Board

- Template demo code: evkbimxrt1050_tensorflow_lite_cifar10

Code

/* Copyright 2017 The TensorFlow Authors. All Rights Reserved.

Copyright 2018 NXP. All Rights Reserved.

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.

==============================================================================*/

#include "board.h"

#include "pin_mux.h"

#include "clock_config.h"

#include "fsl_debug_console.h"

#include <cstdlib>

#include <iostream>

#include <memory>

#include <string>

#include <vector>

#include "timer.h"

#include "tensorflow/lite/kernels/register.h"

#include "tensorflow/lite/model.h"

#include "tensorflow/lite/optional_debug_tools.h"

#include "tensorflow/lite/string_util.h"

#include "Sine_mode.h"

int inference_count = 0;

// This is a small number so that it's easy to read the logs

const int kInferencesPerCycle = 30;

const float kXrange = 2.f * 3.14159265359f;

// Minimal logging macro: the severity argument is ignored and all output goes to std::cout

#define LOG(x) std::cout

void RunInference()

{

std::unique_ptr<tflite::FlatBufferModel> model;

std::unique_ptr<tflite::Interpreter> interpreter;

model = tflite::FlatBufferModel::BuildFromBuffer(sine_model_quantized_tflite, sine_model_quantized_tflite_len);

if (!model) {

LOG(FATAL) << "Failed to load model\r\n";

exit(-1);

}

model->error_reporter();

tflite::ops::builtin::BuiltinOpResolver resolver;

tflite::InterpreterBuilder(*model, resolver)(&interpreter);

if (!interpreter) {

LOG(FATAL) << "Failed to construct interpreter\r\n";

exit(-1);

}

// inputs() returns tensor indices, so the index is stored as an int

int input = interpreter->inputs()[0];

if (interpreter->AllocateTensors() != kTfLiteOk) {

LOG(FATAL) << "Failed to allocate tensors!\r\n";

exit(-1);

}

while(true)

{

// Calculate an x value to feed into the model. We compare the current

// inference_count to the number of inferences per cycle to determine

// our position within the range of possible x values the model was

// trained on, and use this to calculate a value.

float position = static_cast<float>(inference_count) /

static_cast<float>(kInferencesPerCycle);

float x_val = position * kXrange;

float* input_tensor_data = interpreter->typed_tensor<float>(input);

*input_tensor_data = x_val;

Delay_time(1000);

// Run inference, and report any error

TfLiteStatus invoke_status = interpreter->Invoke();

if (invoke_status != kTfLiteOk)

{

LOG(FATAL) << "Failed to invoke tflite!\r\n";

return;

}

// Read the predicted y value from the model's output tensor

float* y_val = interpreter->typed_output_tensor<float>(0);

PRINTF("\r\n x_value: %f, y_value: %f \r\n", x_val, y_val[0]);

// Increment the inference_counter, and reset it if we have reached

// the total number per cycle

inference_count += 1;

if (inference_count >= kInferencesPerCycle) inference_count = 0;

}

}

/*

* @brief Application entry point.

*/

int main(void)

{

/* Init board hardware */

BOARD_ConfigMPU();

BOARD_InitPins();

BOARD_InitDEBUG_UARTPins();

BOARD_BootClockRUN();

BOARD_InitDebugConsole();

NVIC_SetPriorityGrouping(3);

InitTimer();

std::cout << "The hello_world demo of TensorFlow Lite model\r\n";

RunInference();

std::flush(std::cout);

for (;;) {}

}

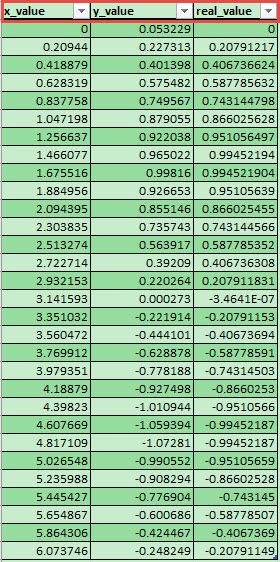

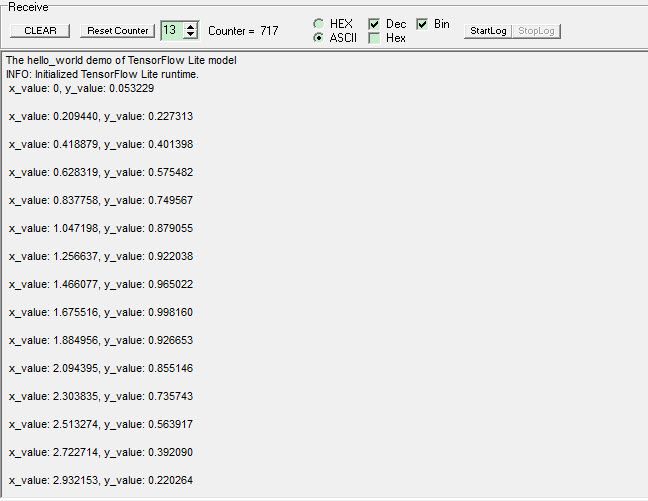

Test result

On the MIMXRT1050 EVK Board, we log the input data (x_value) and the inferred output data (y_value) via the serial port.

Fig 2 Received data

Inside a while loop, the demo runs inference for a progression of x values in the range 0 to 2π and then repeats. On each iteration, a new x value is calculated, inference is run, and the result is output.
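
The progression of x values can be reproduced on a host PC without the model. The sketch below reuses the constants kInferencesPerCycle and kXrange and the counter logic from the demo code; the function SweepXValues is a hypothetical helper added for illustration.

```cpp
#include <cassert>
#include <vector>

// Same constants as in the demo code.
const int kInferencesPerCycle = 30;
const float kXrange = 2.f * 3.14159265359f;

// Hypothetical helper: returns the first `steps` x values that the
// demo loop would feed into the model.
std::vector<float> SweepXValues(int steps) {
    assert(steps >= 0);
    std::vector<float> xs;
    int inference_count = 0;
    for (int i = 0; i < steps; ++i) {
        // Position within the current cycle, from 0 up to 29/30.
        const float position = static_cast<float>(inference_count) /
                               static_cast<float>(kInferencesPerCycle);
        xs.push_back(position * kXrange);
        // Advance the counter and wrap around at the end of a cycle.
        inference_count += 1;
        if (inference_count >= kInferencesPerCycle) inference_count = 0;
    }
    return xs;
}
```

With 30 inferences per cycle, x advances in steps of 2π/30 (about 0.209). The last value in a cycle is 29/30 of 2π, so the sweep wraps back to 0 just before reaching 2π itself.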

Fig 3 Test result

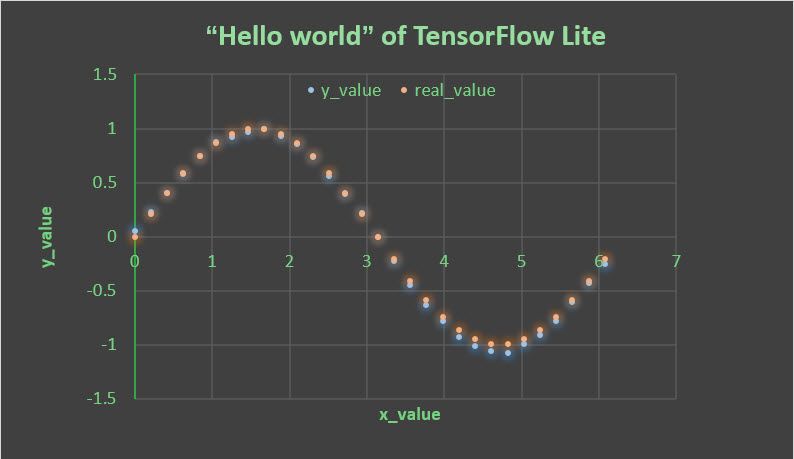

Furthermore, we use Excel to plot the received data against the actual values, as the figure below shows.

Fig 4 Dot Plot

You can see that, for the most part, the dots representing predicted values form a smooth sine curve along the center of the distribution of actual values. In general, our network has learned to approximate a sine curve.
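
As an alternative to plotting in Excel, the quality of the approximation can also be expressed as a single number. The sketch below parses log lines in the format printed by the demo's PRINTF call and computes the mean absolute error against the sine computed on the host. The names Sample, ParseLogLine and MeanAbsoluteError are hypothetical helpers, and capturing the serial output into strings is assumed to be done elsewhere.

```cpp
#include <cassert>
#include <cmath>
#include <cstdio>
#include <string>
#include <vector>

// Hypothetical helper type: one logged (x, predicted y) pair.
struct Sample {
    float x;
    float y;
};

// Parses a line such as " x_value: <x>, y_value: <y> ".
// Returns false if the line does not match that format.
bool ParseLogLine(const std::string& line, Sample* out) {
    assert(out != nullptr);
    return std::sscanf(line.c_str(), " x_value: %f, y_value: %f",
                       &out->x, &out->y) == 2;
}

// Mean absolute difference between the predicted y values and sin(x).
float MeanAbsoluteError(const std::vector<Sample>& samples) {
    assert(!samples.empty());
    double total = 0.0;
    for (const Sample& s : samples) {
        total += std::fabs(s.y - std::sin(s.x));
    }
    return static_cast<float>(total / samples.size());
}
```

A small mean absolute error relative to the sine's amplitude of 1 would support the visual impression from the plot, while a large value would point to a problem in training or conversion.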
