Different branch execution times

Hello everyone,

We have a long-running loop that just counts up towards a given value. This results in the following assembler code:

    loop:
        ADDQ #1,D0
        CMP D0,D1
        BLS loop

With this loop we observe different execution times. Most of the time, one pass through the loop takes 2 processor cycles; we guess that the branch is correctly predicted and an entry in the branch cache is available, so the branch instruction executes in zero cycles. For the first few passes we need 3 processor cycles; we guess that the branch is correctly predicted but no branch cache entry is available yet. So far everything seems fine.

But sometimes we observe that the loop continuously takes 3 processor cycles per pass. The loop has been running for quite some time, and the long execution times can start after an interrupt has been handled. It seems the branch cache is not generating an entry, so the BLS always takes one cycle.

Is there any known problem with the branch cache, or is there a state in which the branch cache does not update itself? We have the branch, data and instruction caches enabled, and no flushes occur before the "error".

If anyone has an idea where this behavior is coming from, we would appreciate any help.

Hello,

Please let me know which NXP part you are working with.

Hello,

I'm working together with Achim Daub on this project. We use an MCF5484.

Here is some more information about the observed problem:

The problem seems to appear when the loop is interrupted immediately after it starts or resumes:

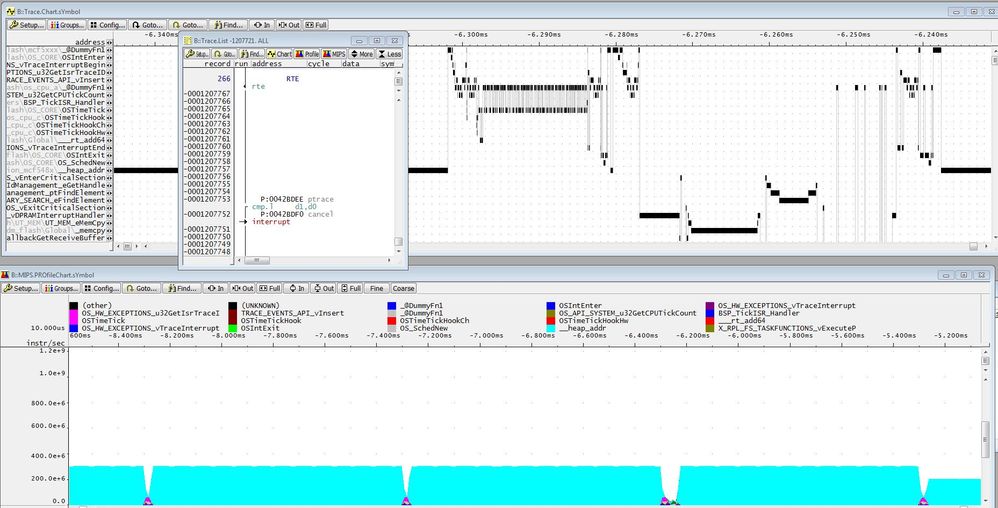

The upper window shows the functions executed.

The small window above the upper window shows the single opcode (cmp) executed after returning from an interrupt and before the loop is interrupted again by another interrupt.

The lower window shows the performance (MIPS). The loop execution performance is displayed in light blue. After the last interrupt (shown as interruptions), the performance is degraded by 1/3. The degradation is caused by the "bls" instruction, which consumes processor cycles in the error case (see picture below).

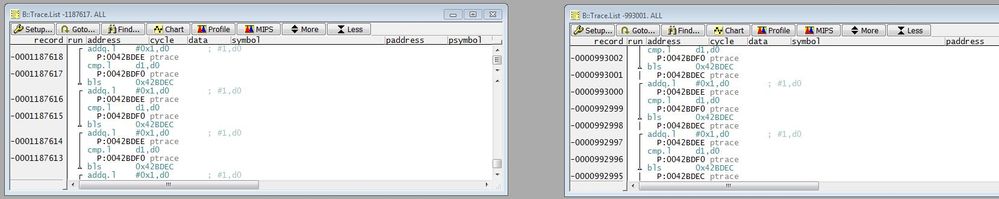

The left window shows the loop execution before the error happens.

The right window shows the loop execution after the error happened.

The error seems to occur when the loop execution is interrupted before the branch prediction works. In this traced example, the loop execution was interrupted by an external timer interrupt. When the timer interrupt was done, the loop continued for a single opcode before it was interrupted again by another external interrupt. When this second interrupt returned, the loop executed correctly until the next (timer) interrupt occurred. When that timer interrupt returned, the loop ran at only 2/3 of the possible speed because the branch cache was not working.
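To make the 2-cycle versus 3-cycle arithmetic concrete, here is a small C sketch. The function names are hypothetical, and the 200 MHz clock in the usage note is only an assumed value for illustration, not something measured in this trace.

```c
#include <assert.h>
#include <stdint.h>

/* Loop passes per second when one pass costs `cycles_per_pass` core cycles. */
uint64_t passes_per_second(uint64_t core_hz, uint32_t cycles_per_pass)
{
    assert(cycles_per_pass != 0); /* guard against division by zero */
    return core_hz / cycles_per_pass;
}

/* Throughput of the slow case as a percentage of the fast case,
 * rounded down to a whole percent. */
uint32_t relative_speed_percent(uint32_t fast_cycles, uint32_t slow_cycles)
{
    assert(slow_cycles != 0);
    return (100u * fast_cycles) / slow_cycles;
}
```

With an assumed 200 MHz core clock, `passes_per_second(200000000, 2)` gives 100,000,000 passes per second and `passes_per_second(200000000, 3)` gives 66,666,666, which matches the observed drop to about 2/3 of the speed.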

Do you have an idea what we can do to solve this problem?

Dennis Pahl

When I first read your post I assumed you'd found the CFV3 "random branch time bug" that I detailed here:

https://community.nxp.com/thread/324006#404184

The problem with the CFV3 core is that they added a bit in the CONDITION REGISTER that flips the branch prediction, and some "optimised" compiler code can load that with garbage, changing the prediction when you don't want it to.

But you're using the CFV4, and it doesn't seem to have this sort of bug. It has a more complicated one.

I see you're demonstrating this branch-prediction change by using a rather complicated debugger, which seems to be doing "real time tracing" of the CPU. Are you sure it isn't causing the problem? Are you sure this change in execution speed also happens when the CPU is running on its own without the debugger? Can you prove that?

If that isn't the problem, I would guess that the branch cache entry is being invalidated by the interrupt, and isn't being refreshed when your loop resumes. It is possible the cache only works properly when the code is running linearly and then reaches the branch instruction, and that returning from an interrupt "in the middle of a loop" isn't something it can handle.

But if the cache wasn't working at all I'd expect a far higher delay of up to 8 clocks. The CPU has a "Branch Cache" and a "Prediction Table". I suspect the CPU may be using the latter in your case, taking one extra clock.

> Do you have an idea what we can do to solve this problem?

Deal with it. Don't expect "constant execution speed" on modern CPUs. If you need precise timing, then set up a hardware counter and read it for timing. You might be able to use a GPT or an SLT.
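As a rough illustration of the hardware-counter suggestion, here is a minimal C sketch of a delay that polls a free-running counter instead of counting loop passes. It assumes a 32-bit down-counter that wraps modulo 2^32. The names `read_counter_fn`, `delay_ticks`, `fake_count` and `fake_read` are all hypothetical, and `fake_read` is only a host-side stand-in for reading a real timer's count register. The actual register layout of the GPT or SLT has to come from the MCF5484 reference manual.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical accessor type: returns the current value of a
 * free-running 32-bit DOWN-counter (assumption, see text). */
typedef uint32_t (*read_counter_fn)(void);

/* Busy-wait until at least `ticks` counter ticks have elapsed.
 * Returns the number of ticks that actually elapsed. Unsigned
 * subtraction keeps the result correct across a counter wrap. */
uint32_t delay_ticks(read_counter_fn read_counter, uint32_t ticks)
{
    assert(read_counter != NULL);
    const uint32_t start = read_counter();
    uint32_t elapsed;
    do {
        elapsed = start - read_counter(); /* down-counter: start >= now */
    } while (elapsed < ticks);
    return elapsed;
}

/* Host-side stand-in for a hardware counter: every read advances
 * the simulated counter by 7 ticks. Not real hardware access. */
uint32_t fake_count = 10;

uint32_t fake_read(void)
{
    fake_count -= 7;
    return fake_count;
}
```

Because the elapsed time is taken from the counter, an extra cycle per pass in the polling loop only changes how often the counter is sampled, not the length of the delay (apart from an overshoot of at most one poll).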

Otherwise, if you do need a "constant execution time loop", see if making the loop longer by adding NOPs [1] gets it to fix the problem and reload the cache (or whatever is going wrong). Try different instructions.

I had a similar problem with the i.MX CPU. It is running Linux, which has a "BogoMIPS" loop that it uses for short delays and calibrates on startup. It is a "countdown loop" like yours. It runs a different number of clocks when compiled on different code boundaries, probably related to the cache line length. It doesn't change during execution, but it is different for different kernel compiles. We just had to deal with it.

Note 1: Don't use the "NOP" instruction as a "NOP". NOP doesn't "do nothing" on this CPU, as it "Synchronises the Pipeline". It takes SIX clocks. But it might do what you want and give you constant execution timing, so try it. If you want a real "NOP" then use "TPF". See the Programmer's Reference Manual for details.

Tom