i.MX6Q capture raw(bayer) data and debayer


This doc shows how, on the i.MX6Q SabreSD board, to configure the ov5640 sensor (parallel or MIPI) to output 5MP (2592x1944) RAW (Bayer) data at 15 fps, capture the RAW data with the i.MX6Q IPU, then debayer it on the i.MX6Q GPU and display the image.

HW: i.MX6Q-SabreSD board, ov5640 sensor.

SW: Linux 4.14.98_2.0.0 BSP, plus the patches in this doc.

1.Configure at camera sensor side

A Bayer filter is a color filter array (CFA) that arranges RGB color filters on a square grid of photosensors. The filter pattern is 50% green, 25% red and 25% blue, hence it is also called BGGR, RGBG, GRGB, or RGGB.
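As a quick illustration of the tile naming, the sketch below returns the filter color over a photosite. It is not part of the patches; the helper name `bayer_color` and the convention that the four letters list the 2x2 tile row by row (top-left, top-right, bottom-left, bottom-right) are assumptions made for this example.

```python
def bayer_color(row, col, order="BGGR"):
    """Return 'R', 'G' or 'B' for the photosite at (row, col).

    Assumption: `order` lists the repeating 2x2 tile row by row:
    top-left, top-right, bottom-left, bottom-right.
    """
    return order[(row % 2) * 2 + (col % 2)]


if __name__ == "__main__":
    # Print a 4x4 corner of the mosaic for two common orders.
    for order in ("BGGR", "RGGB"):
        print(order)
        for row in range(4):
            print(" ".join(bayer_color(row, col, order) for col in range(4)))
```

Counting the letters in any 4x4 block gives 8 green, 4 red and 4 blue sites, which is the 50% / 25% / 25% split described above.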

The ov5640 has an image array capable of operating at up to 15 fps in 5 megapixel (2592x1944) resolution. The ov5640 supports these output formats: RAW (Bayer), RGB565/555/444, CCIR656, YUV422/420, YCbCr422, and compression.

To make the ov5640 output 5MP RAW data at 15 fps, see my patch imx6_ov5640_dvp_mipi_raw_capture_driver-4.14.98_2.0.0.diff, which applies to the i.MX Linux 4.14.98_2.0.0 BSP kernel code:

For the parallel-interface ov5640, use the ov5640_raw_setting[] array in drivers/media/platform/mxc/capture/ov5640.c. These register settings come from the ov5640 software application note and data sheet.

For the MIPI-interface ov5640, use the ov5640_mipi_raw_setting[] array in drivers/media/platform/mxc/capture/ov5640_mipi.c. These register settings combine the settings of the original code (with the ISP register settings removed), the PLL register settings for the MIPI interface, and some data format register settings.

2.Configure at i.MX6Q side

The i.MX6Q IPU camera port (CSI-2 module) supports these data formats: Raw (Bayer), RGB, YUV 4:4:4, YUV 4:2:2 and grayscale, up to 16 bits per value.

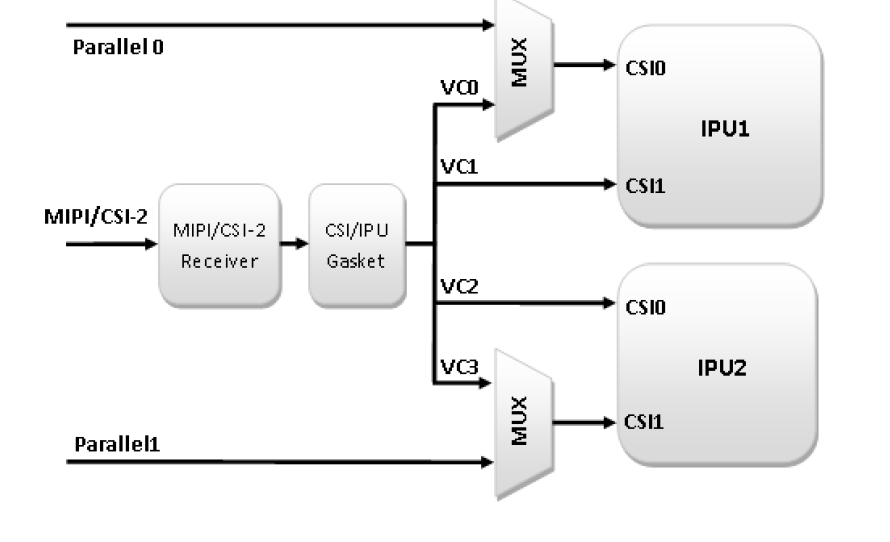

Below is the camera data routing for the i.MX6Q:

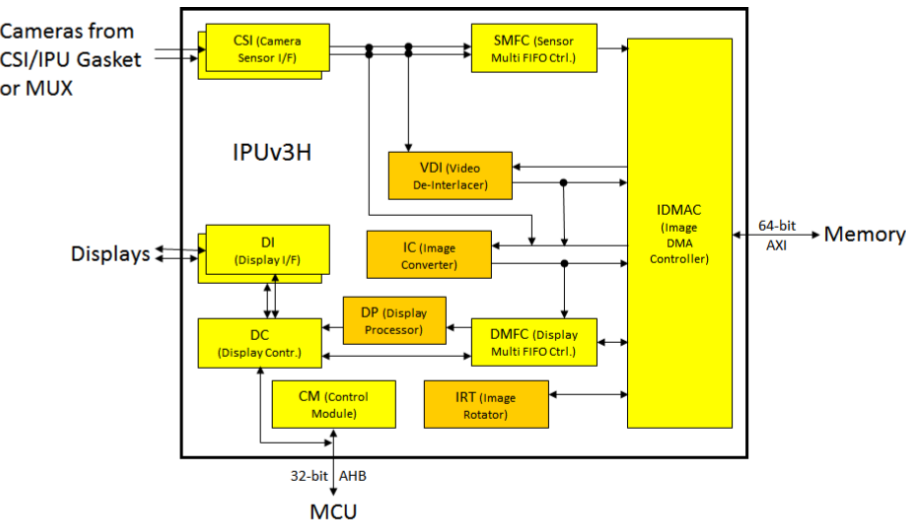

Below is the i.MX6Q IPU block diagram:

The CSI-2 of the IPU is responsible for synchronizing and packing the video (or generic data) and sending it to other blocks. The video data received by the CSI-2 can be sent to three other blocks: SMFC, VDI, and IC.

For RAW (Bayer) data capture, the data should follow this path: CSI-2-->SMFC-->IDMAC-->DDR memory

This means RAW data is received as generic data (see IPU_PIX_FMT_GENERIC in my patch). The IPU cannot process this kind of data; it is simply written to DDR memory.

For a MIPI-interface camera, note that the i.MX6-side MIPI D-PHY clock must be calibrated to the actual clock range of the camera sensor’s D-PHY clock, and the calibrated value must be equal to or greater than the camera sensor clock. For details, see AN5305.

Take the MIPI ov5640 as an example:

Pixel clock = 2592 x 1944 x 15 fps x (1/2 cycle/pixel) x 1.35 (blanking interval) = 51 MHz

MIPI data rate = 51 MHz x 16 bit = 816 Mb/s

So the i.MX6-side D-PHY clock is 816/2/2*2 = 408 MHz.

Because one Bayer pixel is 8 bits and the i.MX6 MIPI data bus is 16 bits, the calculation above uses 1/2 cycle/pixel.

Checking ov5640_mipi_raw_setting[], you will find that the sensor-side D-PHY clock is about 672/2 = 336 MHz.

According to AN5305, the i.MX6 register MIPI_CSI2_PHY_TST_CTRL1 should be set to 0xC, but here I still keep the default BSP value 0x14.
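The clock arithmetic in this section can be re-run with a few lines of Python. This is only a sketch that reproduces the numbers above: the function name is hypothetical, the pixel clock is rounded to whole MHz as in the text, and the 816/2/2*2 expression is evaluated exactly as written, without assigning a meaning to each factor.

```python
def pixel_clock_mhz(width, height, fps, cycles_per_pixel=0.5, blanking=1.35):
    """Pixel clock in MHz, following the formula used in the text."""
    return width * height * fps * cycles_per_pixel * blanking / 1e6


# 5MP RAW at 15 fps; the text rounds the result to whole MHz.
pclk_mhz = round(pixel_clock_mhz(2592, 1944, 15))
# The i.MX6 MIPI data bus is 16 bits wide.
data_rate_mbps = pclk_mhz * 16
# Expression copied from the text as written.
imx6_dphy_mhz = data_rate_mbps / 2 / 2 * 2
# Sensor-side value quoted from ov5640_mipi_raw_setting[].
sensor_dphy_mhz = 672 / 2

print("pixel clock (MHz):", pclk_mhz)
print("MIPI data rate (Mb/s):", data_rate_mbps)
print("i.MX6 D-PHY clock (MHz):", imx6_dphy_mhz)
print("i.MX6 clock >= sensor clock:", imx6_dphy_mhz >= sensor_dphy_mhz)
```

Changing the resolution or frame rate arguments shows how quickly the required D-PHY clock moves between the ranges listed in AN5305.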

3.Capture test code

I changed the unit test mxc_v4l2_capture.c to capture the RAW data and save it to a file. See my patch imx6_ov5640_raw_captupre_test_4.14.98_2.0.0_ga.diff, which applies to the i.MX Linux 4.14.98_2.0.0 BSP unit test code.

The usage is:

./cap.out -c 1 -i 1 -fr 15 -m 6 -iw 2592 -ih 1944 -ow 2592 -oh 1944 -f BA81 -d /dev/video1 savefile.dmp

The parameter -i 1 selects CSI to MEM mode.

/dev/video1 is the MIPI ov5640; /dev/video0 is the parallel ov5640.
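To sanity-check a capture on a host PC, a dump can be split into frames as sketched below. BA81 is the V4L2 fourcc for 8-bit BGGR Bayer (V4L2_PIX_FMT_SBGGR8). The sketch assumes, without confirmation from the patch, that savefile.dmp holds tightly packed samples, one byte per pixel, with no header and no line padding; the helper names are hypothetical.

```python
def frame_size_bytes(width, height, bits_per_pixel=8):
    """Size of one tightly packed frame in bytes."""
    return width * height * bits_per_pixel // 8


def split_frames(data, width, height):
    """Split a raw dump into a list of frames (bytes objects).

    Raises ValueError if the dump is not a whole number of frames,
    which usually means the size or format assumption is wrong.
    """
    size = frame_size_bytes(width, height)
    if len(data) == 0 or len(data) % size != 0:
        raise ValueError(
            "dump is %d bytes, not a multiple of %d" % (len(data), size)
        )
    return [data[i:i + size] for i in range(0, len(data), size)]


def pixel_at(frame, width, row, col):
    """8-bit sample at (row, col) of one frame."""
    return frame[row * width + col]
```

For example, `split_frames(open("savefile.dmp", "rb").read(), 2592, 1944)` should return one frame of 5038848 bytes for a capture taken with -c 1, if the assumptions above hold.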

4.Display RAW data

The RAW data cannot be displayed directly; a debayer process is needed to obtain complete red, green, and blue values for each pixel.

If the debayer process runs on the CPU, it costs a lot of CPU time.
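For reference, a CPU debayer can be sketched as below. This is plain bilinear interpolation written for readability, not the filter from the referenced paper and not code from the patches; the function names and the BGGR row-by-row tile convention are assumptions of this example.

```python
def bayer_color(row, col, order="BGGR"):
    """Filter color at (row, col); `order` lists the 2x2 tile row by row."""
    return order[(row % 2) * 2 + (col % 2)]


def debayer_bilinear(raw, width, height, order="BGGR"):
    """Return rows of (r, g, b) tuples from 8-bit Bayer samples.

    Each missing color is the average of the same-colored samples in the
    3x3 neighborhood (clipped at the image border); the measured color
    of a pixel is kept unchanged.
    """
    if width < 2 or height < 2:
        raise ValueError("image must be at least 2x2")
    out = []
    for row in range(height):
        line = []
        for col in range(width):
            sums = {"R": 0, "G": 0, "B": 0}
            counts = {"R": 0, "G": 0, "B": 0}
            for dr in (-1, 0, 1):
                for dc in (-1, 0, 1):
                    r, c = row + dr, col + dc
                    if 0 <= r < height and 0 <= c < width:
                        color = bayer_color(r, c, order)
                        sums[color] += raw[r * width + c]
                        counts[color] += 1
            # Keep the sample actually measured at this photosite.
            own = bayer_color(row, col, order)
            sums[own], counts[own] = raw[row * width + col], 1
            line.append(tuple(sums[k] // counts[k] for k in "RGB"))
        out.append(line)
    return out
```

At 2592x1944 this loop reads about 45 million neighbor samples per frame, which gives a feel for the CPU cost.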

To save CPU time, debayering can be done by the GPU.

The method: upload the captured RAW data to the GPU as a texture, let the GPU debayer it to produce the full color of each pixel, then display the result.

To upload RAW camera data to the GPU with zero memory copy, I use the i.MX6Q GPU extension GL_VIV_direct_texture, which creates a texture with direct access support. Its API glTexDirectVIVMap supports mapping user-space memory or a physical address into the texture surface.

The API glTexDirectVIVMap needs both the logical and the physical address of the data buffer, so I allocate the data buffer from /dev/mxc_ipu, which provides a DMA buffer along with its logical and physical addresses. I then queue it as USERPTR to the IPU V4L2 capture driver; after dequeuing the buffer with the RAW camera data, I pass it to the GPU for debayering.

On the GPU side, I use the OpenGL shader code from "Efficient, High-Quality Bayer Demosaic Filtering on GPUs".

See my patch imx6-5640-debayer-testcode-gpusdk-5.2.0.diff, which applies to the i.MX GPU SDK 5.2.0 code.

Note that I only debayer here; no extra processing is done.

5.Known issue

When the ov5640 outputs 5MP at 15 fps, the camera data contains more noise than when it outputs 5MP at 5 fps. From my debugging, this noise seems to come from the ov5640 itself.

Reference:

a>https://www.nxp.com/webapp/Download?colCode=IMX6DQRM

b>https://www.nxp.com/webapp/Download?colCode=L4.14.98_2.0.0_MX6QDLSOLOX&appType=license

c>https://github.com/NXPmicro/gtec-demo-framework

d>https://www.nxp.com/docs/en/application-note/AN5305.pdf

e>ov5640 data sheet

f>ov5640 software application note

g>Efficient, High-Quality Bayer Demosaic Filtering on GPUs

https://www.semanticscholar.org/paper/Efficient%2C-High-Quality-Bayer-Demosaic-Filtering-on-McGuire/...