
Measure (RAW) NAND flash performances


Using a RAW NAND is more difficult than using an eMMC, but at lower capacities it is still cheaper.

Even with ONFI (Open NAND Flash Interface), you can run into initialization issues, and measuring performance is one way to find them.

As an example, I will use a poorly supported flash that I installed on my evaluation board (SABRE AI).

I wanted to run a simple performance test to get a rough idea of the MB/s I could expect from this NAND.

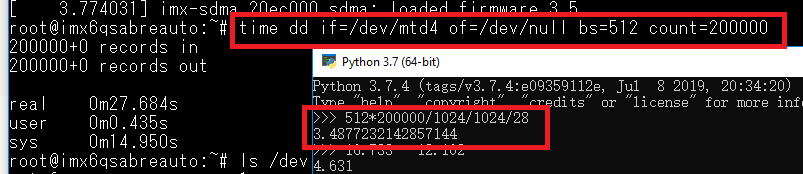

One of the simplest tests is the dd command:

root@imx6qdlsolo:~# time dd if=/dev/mtd4 of=/dev/null

851968+0 records in

851968+0 records out

436207616 bytes (436 MB, 416 MiB) copied, 131.8884 s, 3.3 MB/s

As my RAW NAND was supposed to work in EDO mode 5, I expected more than 20 MB/s.
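The dd summary is enough to work out both the rate and the block size dd used. A minimal sketch, where `dd_stats` is a hypothetical helper: dividing the bytes by the record count gives the transfer size (512 bytes here, dd's default since no bs was given), and dividing the bytes by the elapsed time gives the rate in MB/s (10^6 bytes, as dd reports it).

```shell
# Hypothetical helper: recompute dd's block size and throughput from the
# figures dd prints. Arguments: record count, bytes copied, elapsed seconds.
dd_stats() {
    records=$1
    bytes=$2
    seconds=$3
    awk -v r="$records" -v b="$bytes" -v s="$seconds" 'BEGIN {
        printf "block size: %d bytes\n", b / r             # bytes per dd record
        printf "throughput: %.1f MB/s\n", b / s / 1000000  # dd counts 1 MB = 10^6 bytes
    }'
}
```

For the run above, `dd_stats 851968 436207616 131.8884` gives a 512-byte block size and 3.3 MB/s, matching dd's own figure.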

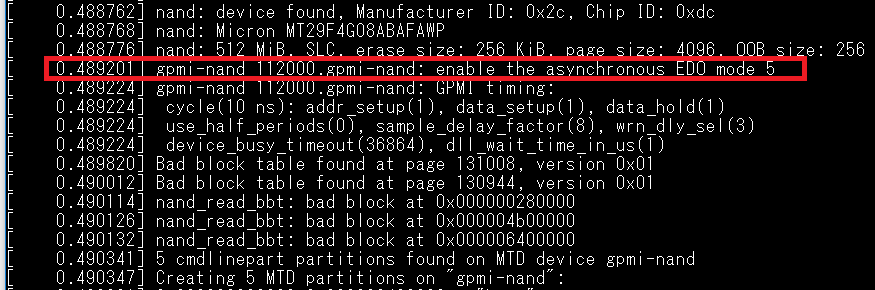

To find out what was wrong, read your kernel startup log:

Booting Linux on physical CPU 0x0

Linux version 4.1.15-2.0.0+gb63f3f5 (bamboo@yb6) (gcc version 5.3.0 (GCC) ) #1 SMP PREEMPT Fri Sep 16 15:02:15 CDT 2016

CPU: ARMv7 Processor [412fc09a] revision 10 (ARMv7), cr=10c53c7d

CPU: PIPT / VIPT nonaliasing data cache, VIPT aliasing instruction cache

Machine model: Freescale i.MX6 DualLite/Solo SABRE Automotive Board

[...]

Amd/Fujitsu Extended Query Table at 0x0040

Amd/Fujitsu Extended Query version 1.3.

number of CFI chips: 1

nand: device found, Manufacturer ID: 0xc2, Chip ID: 0xdc

nand: Macronix MX30LF4G18AC

nand: 512 MiB, SLC, erase size: 128 KiB, page size: 2048, OOB size: 64

gpmi-nand 112000.gpmi-nand: mode:5 ,failed in set feature.

Bad block table found at page 262080, version 0x01

Bad block table found at page 262016, version 0x01

nand_read_bbt: bad block at 0x00000a7e0000

nand_read_bbt: bad block at 0x00000dc80000

4 cmdlinepart partitions found on MTD device gpmi-nand

Creating 4 MTD partitions on "gpmi-nand":

The line "gpmi-nand 112000.gpmi-nand: mode:5 ,failed in set feature." means the NAND is not in mode 5, so it still runs with the "relaxed" timings it has at boot.
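Instead of reading the whole boot log, you can filter it. A minimal sketch, where `check_nand_timing` is a hypothetical helper that reads the log on stdin; it assumes the driver prints the same "failed in set feature" message as this 4.1 kernel, and other kernel versions may word it differently.

```shell
# Hypothetical helper: show NAND/GPMI lines from a kernel log read on stdin
# and flag the message printed when the timing mode could not be set.
check_nand_timing() {
    log=$(cat)
    # Context: NAND identification and GPMI driver messages (none is not an error)
    printf '%s\n' "$log" | grep -E 'nand:|gpmi-nand' || true
    if printf '%s\n' "$log" | grep -q 'failed in set feature'; then
        echo "=> requested timing mode was NOT applied"
        return 1
    fi
    echo "=> no set-feature failure reported"
}
```

On the board, run `dmesg | check_nand_timing`; the function returns 1 when the failure message is present.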

After debugging the driver code (I simply removed the NAND back-reading security check, i.e. the step that reads the timing mode back from the NAND after setting it), you can redo the test:

root@imx6qdlsolo:~# time dd if=/dev/mtd4 of=/dev/null

851968+0 records in

851968+0 records out

436207616 bytes (436 MB, 416 MiB) copied, 32.9721 s, 13.2 MB/s

So performance is multiplied by 4!
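Both runs copied the same 436207616 bytes, so the speedup can be read either from the elapsed times or from the rates dd reports:

$$
\frac{131.8884\ \text{s}}{32.9721\ \text{s}} = 4.0
\qquad\text{and}\qquad
\frac{13.2\ \text{MB/s}}{3.3\ \text{MB/s}} = 4.0
$$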

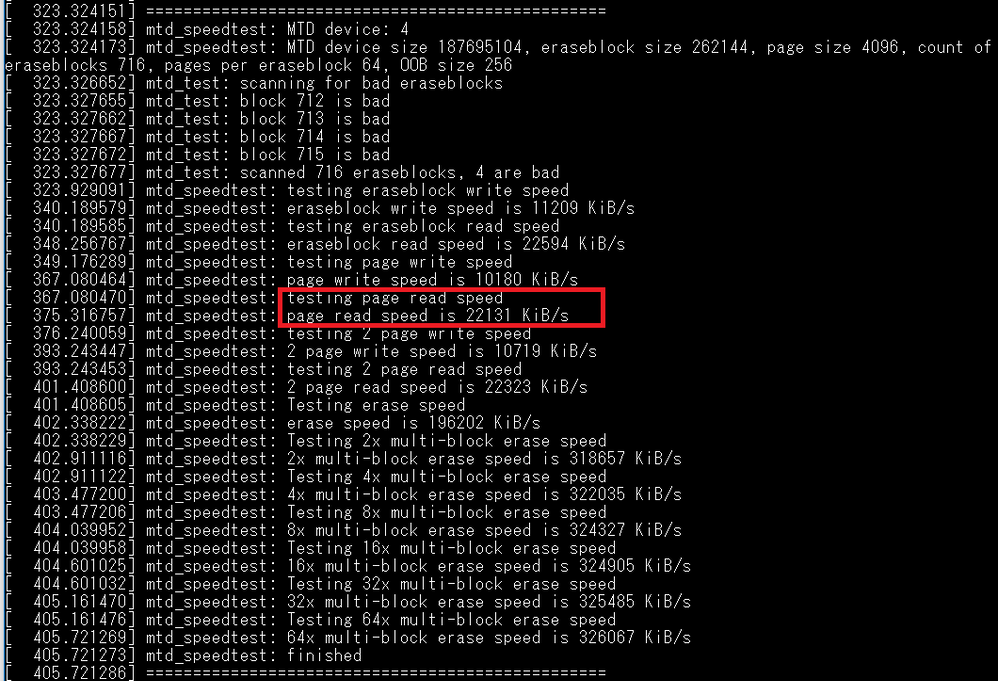

That said, there is a better tool to measure NAND performance, mtd_speedtest, but you have to rebuild your kernel to get it.

In Yocto, reconfigure your kernel (on your host PC, of course!):

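bitbake virtual/kernel -c menuconfig

In the menu, select "Device Drivers" -> "Memory Technology Device (MTD) support" -> "MTD tests support" as a module, even though it is not recommended: some of these tests, mtd_speedtest included, erase the whole MTD device they run on, so only run them on a partition you can afford to lose.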

bitbake virtual/kernel -f -c compile

bitbake virtual/kernel -f -c build

bitbake virtual/kernel -f -c deploy

Then reflash your board (kernel and rootfs, as the tests are .ko files).
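Before reflashing, it can be worth checking that the option really ended up in the kernel configuration. A minimal sketch, where `mtd_tests_enabled` is a hypothetical helper and the .config location is an assumption: the kernel build directory on the host, or /proc/config.gz on the target if the kernel was built with CONFIG_IKCONFIG_PROC.

```shell
# Hypothetical helper: check that a kernel .config builds the MTD tests as
# modules. The path to the .config is an assumption that depends on your setup.
mtd_tests_enabled() {
    config=$1
    if grep -q '^CONFIG_MTD_TESTS=m$' "$config"; then
        echo "MTD tests built as modules"
    else
        echo "CONFIG_MTD_TESTS is not =m in $config" >&2
        return 1
    fi
}
```

For instance, on the target: `zcat /proc/config.gz > /tmp/kconfig && mtd_tests_enabled /tmp/kconfig`.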

You can then run a more accurate performance test:

insmod /lib/modules/4.1.29-fslc+g59b38c3/kernel/drivers/mtd/tests/mtd_speedtest.ko dev=2

=================================================

mtd_speedtest: MTD device: 2

mtd_speedtest: MTD device size 16777216, eraseblock size 131072, page size 2048, count of eraseblocks 128, pages per eraseblock 64, OOB size 64

mtd_test: scanning for bad eraseblocks

mtd_test: scanned 128 eraseblocks, 0 are bad

mtd_speedtest: testing eraseblock write speed

mtd_speedtest: eraseblock write speed is 4537 KiB/s

mtd_speedtest: testing eraseblock read speed

mtd_speedtest: eraseblock read speed is 16384 KiB/s

mtd_speedtest: testing page write speed

mtd_speedtest: page write speed is 4250 KiB/s

mtd_speedtest: testing page read speed

mtd_speedtest: page read speed is 15784 KiB/s

mtd_speedtest: testing 2 page write speed

mtd_speedtest: 2 page write speed is 4426 KiB/s

mtd_speedtest: testing 2 page read speed

mtd_speedtest: 2 page read speed is 16047 KiB/s

mtd_speedtest: Testing erase speed

mtd_speedtest: erase speed is 244537 KiB/s

mtd_speedtest: Testing 2x multi-block erase speed

mtd_speedtest: 2x multi-block erase speed is 252061 KiB/s

mtd_speedtest: Testing 4x multi-block erase speed

mtd_speedtest: 4x multi-block erase speed is 256000 KiB/s

mtd_speedtest: Testing 8x multi-block erase speed

mtd_speedtest: 8x multi-block erase speed is 260063 KiB/s

mtd_speedtest: Testing 16x multi-block erase speed

mtd_speedtest: 16x multi-block erase speed is 260063 KiB/s

mtd_speedtest: Testing 32x multi-block erase speed

mtd_speedtest: 32x multi-block erase speed is 256000 KiB/s

mtd_speedtest: Testing 64x multi-block erase speed

mtd_speedtest: 64x multi-block erase speed is 260063 KiB/s

mtd_speedtest: finished

=================================================

You now get almost 16 MB/s for reads, better than with the dd test. Of course, you do not get above 20 MB/s, but you are not far off, and the NAND driver needs optimization.
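mtd_speedtest reports KiB/s while dd reports MB/s (10^6 bytes), so comparing them needs a conversion: 16384 KiB/s is about 16.8 MB/s. A minimal sketch that extracts the results from the kernel log and prints both units; `speedtest_summary` is a hypothetical helper, and the matched line format is the one printed by mtd_speedtest in the output above.

```shell
# Hypothetical helper: summarize mtd_speedtest results read on stdin
# (e.g. from dmesg) in KiB/s, as printed, and in MB/s (10^6 bytes/s, as dd uses).
speedtest_summary() {
    awk '/mtd_speedtest: .* speed is [0-9]+ KiB\/s/ {
        kib = $(NF - 1)                          # the number before KiB/s
        line = $0
        sub(/.*mtd_speedtest: /, "", line)       # drop timestamp and prefix
        sub(/ is [0-9]+ KiB\/s.*$/, "", line)    # keep only the test name
        printf "%-34s %8d KiB/s %6.1f MB/s\n", line, kib, kib * 1024 / 1000000
    }'
}
```

After the insmod, run `dmesg | speedtest_summary`.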

To rerun the test, unload the module and load it again:

rmmod /lib/modules/4.1.29-fslc+g59b38c3/kernel/drivers/mtd/tests/mtd_speedtest.ko

insmod /lib/modules/4.1.29-fslc+g59b38c3/kernel/drivers/mtd/tests/mtd_speedtest.ko dev=2

To verify that your NAND is in EDO mode 5, check your clock tree:

/unit_tests/dump-clocks.sh

clock parent flags en_cnt pre_cnt rate

[...]

gpmi_bch_apb --- 00000005 0 0 198000000

gpmi_bch --- 00000005 0 0 198000000

gpmi_io --- 00000005 0 0 99000000

gpmi_apb --- 00000005 0 0 198000000

The I/O are now clocked at 99 MHz, so you can read at 49.5 MHz (about 20 ns per cycle, as defined for EDO mode 5).
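The same arithmetic can be applied directly to the dump-clocks.sh output. A minimal sketch, where `gpmi_timing` is a hypothetical helper; as stated in this article, it assumes reads run at half the gpmi_io rate, and it takes the rate in Hz from the last column.

```shell
# Hypothetical helper: derive the NAND read cycle from the gpmi_io line of
# /unit_tests/dump-clocks.sh (rate in Hz in the last column). Assumes reads
# run at half the gpmi_io clock.
gpmi_timing() {
    awk '$1 == "gpmi_io" {
        io_hz = $NF
        rd_hz = io_hz / 2
        printf "gpmi_io %.1f MHz -> read %.2f MHz -> cycle %.1f ns\n", io_hz / 1000000, rd_hz / 1000000, 1000000000 / rd_hz
    }'
}
```

`/unit_tests/dump-clocks.sh | gpmi_timing` prints a 20.2 ns cycle for the 99 MHz clock above; a noticeably longer cycle suggests the NAND is still using slower timings.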


How did you remove the "NAND back reading security check"? Are there any side effects of removing it?


Hi,

I have in fact forced the NAND to be in EDO mode 5.

There was a bug in the RAW NAND: it is supposed to support EDO mode 5, but it did not report that mode back.

Forcing EDO mode 5 is dangerous if you change NAND supplier during the product's lifetime.

I have attached my modified gpmi-lib.c to the main post (the changes are marked with NXA22167 comments).

BR

V.
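Since forcing the mode is only safe for the part it was validated on, one simple precaution is to check at boot that the detected NAND is still that part. A minimal sketch, where `nand_id_check` is a hypothetical helper reading the kernel log on stdin; 0xc2/0xdc are the Manufacturer/Chip IDs of the MX30LF4G18AC in the boot log of this article. It is only a sanity check on the target, not a replacement for a guard in the driver itself.

```shell
# Hypothetical helper: compare the NAND IDs found in a kernel log (stdin)
# with the expected ones. Arguments: manufacturer ID, chip ID (e.g. 0xc2 0xdc).
nand_id_check() {
    want="Manufacturer ID: $1, Chip ID: $2"
    got=$(grep -o 'Manufacturer ID: 0x[0-9a-fA-F]*, Chip ID: 0x[0-9a-fA-F]*' | head -n 1)
    if [ "$got" = "$want" ]; then
        echo "match"
    else
        echo "mismatch: found '${got:-no NAND ID line}'"
    fi
}
```

On the board: `dmesg | nand_id_check 0xc2 0xdc`.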


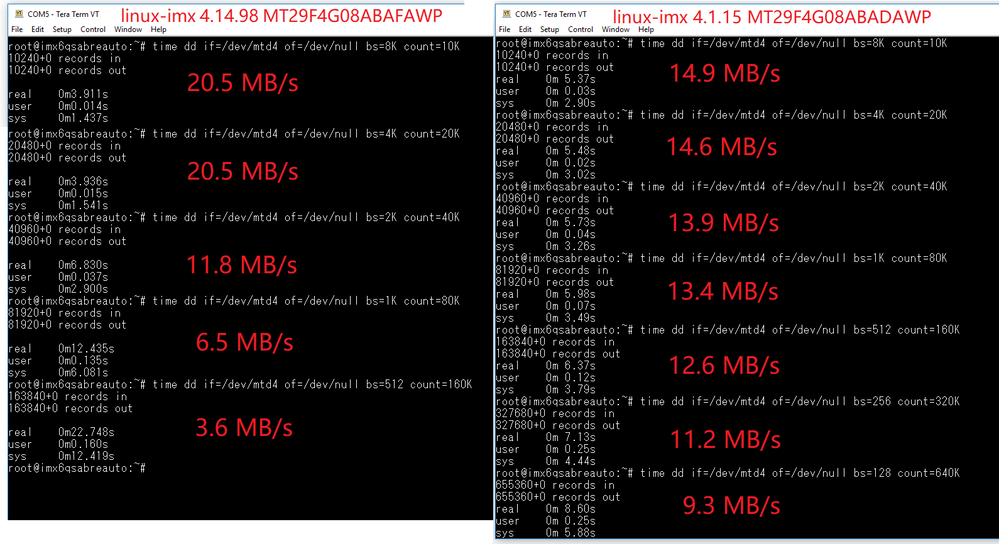

It's weird: on our i.MX6D board, dd is much slower than mtd_speedtest, ~3.5 MB/s vs ~20 MB/s.

P.S. EDO mode 5 is enabled in linux-imx 4.14.98 without modifying the source code.


Hi,

For the dd command, I am pretty sure the "bs" argument (the block size of each transfer) is what slows the transfer down, since dd defaults to 512-byte blocks; see this article: Tuning dd block size - tdg5

Can you please try a bigger one (200M, for instance)?

BR

V.
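To see the effect of the block size, you can run the same read with several bs values. A minimal sketch, where `dd_read_test` is a hypothetical helper; sizes are given in plain bytes so no size-suffix support is needed from dd.

```shell
# Hypothetical helper: read a device (or file) end to end once per block size.
# dd prints its own summary (bytes, seconds, rate) on stderr for each run.
dd_read_test() {
    dev=$1
    shift
    for bs in "$@"; do
        echo "bs=$bs:"
        dd if="$dev" of=/dev/null bs="$bs"
    done
}
```

For example, `dd_read_test /dev/mtd4 512 131072 1048576` compares dd's 512-byte default, one 128 KiB erase block and 1 MiB per read.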


vincent.aubineau, thanks! It's about the block size: