i.MX8MP support raw format on Android


1. Description:

The i.MX Android camera HAL supports only YUYV sensors, regardless of whether the sensor is connected to the ISP or the ISI. Users who want a custom sensor format, such as UYVY or raw, need a guide for doing so. This document describes how to implement a raw camera sensor on i.MX8MP Android and output raw data.

Note: Based on i.MX 8M Plus, Android12_1.0.0.

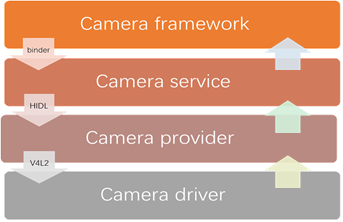

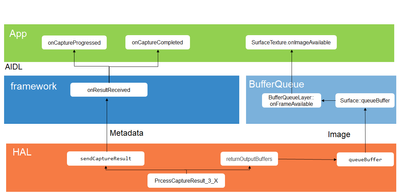

2. Camera HAL

Android's camera hardware abstraction layer (HAL) connects the higher-level camera framework APIs in android.hardware.camera2 to your underlying camera driver and hardware. For more details, refer to the AOSP documentation:

https://source.android.google.cn/docs/core/camera/camera3_requests_hal?hl=en

In the i.MX camera HAL, the camera subsystem can be divided into several parts:

- Camera framework: frameworks\av\camera

- Camera service: frameworks\av\services\camera\libcameraservice\

- Camera provider:

  - hardware/interfaces/camera/provider/

  - hardware/google/camera/common/hal/hidl_service/

  hidl_service loads the camera HAL3 with dlopen.

- Camera HAL3: vendor\nxp-opensource\imx\camera\

- Camera driver: vendor/nxp-opensource/kernel_imx/drivers/media/i2c

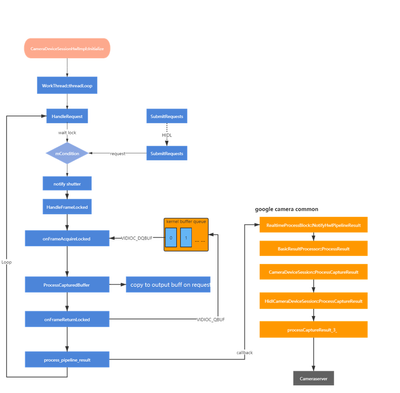

Its call stack is as follows:

There are two streams in the pipeline. The preview stream needs 3 buffers and the capture stream needs 2 buffers:

CameraDeviceSessionHwlImpl: ConfigurePipeline, stream 0: id 0, type 0, res 2592x1944, format 0x21, usage 0x3, space 0x8c20000, rot 0, is_phy 0, phy_id 0, size 8388608

CameraDeviceSessionHwlImpl: ConfigurePipeline create capture stream

CameraDeviceSessionHwlImpl: ConfigurePipeline, stream 1: id 1, type 0, res 1024x768, format 0x22, usage 0x100, space 0x0, rot 0, is_phy 0, phy_id 0, size 0

CameraDeviceSessionHwlImpl: ConfigurePipeline create preview stream

You can use these commands to dump the stream input/output:

"setprop vendor.rw.camera.test 1" to dump stream 0.

"setprop vendor.rw.camera.test 2" to dump stream 1.

Before you run these commands, run "su; setenforce 0" to put SELinux in permissive mode. The data is dumped to "/data/x-src.data" and "/data/x-dst.data", where "x" is the stream ID ("0", "1", "2", ...).
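The mapping between the property value and the dumped stream is easy to get wrong by one. The helper below is a minimal host-side sketch of that mapping. It assumes the pattern quoted above continues for higher IDs (property value N dumps stream N - 1); `dump_plan` is a hypothetical name used only for illustration and is not part of the HAL.

```python
def dump_plan(stream_id: int) -> dict:
    """Return the setprop command and the dump file paths for one stream.

    Assumption: property value N dumps stream N - 1, and the files are
    named /data/<id>-src.data and /data/<id>-dst.data as in the text.
    """
    if stream_id < 0:
        raise ValueError("stream id must be non-negative")
    return {
        # Value 1 dumps stream 0, value 2 dumps stream 1.
        "setprop": f"setprop vendor.rw.camera.test {stream_id + 1}",
        "src": f"/data/{stream_id}-src.data",  # buffer going into the HAL processing step
        "dst": f"/data/{stream_id}-dst.data",  # buffer coming out of it
    }
```

Calling `dump_plan(0)` gives the command and file names for the capture stream in the log above.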

| | Preview Stream | Capture Stream |
| --- | --- | --- |
| ID | 1 | 0 |
| Resolution | 1024*768 | 2592*1944 |
| Format | HAL_PIXEL_FORMAT_IMPLEMENTATION_DEFINED (34) | HAL_PIXEL_FORMAT_BLOB (33) |
| Usage | 0x900: GRALLOC_USAGE_HW_TEXTURE (0x100), GRALLOC_USAGE_HW_COMPOSER (0x800) | 0x3: GRALLOC_USAGE_SW_READ_OFTEN |
| Data space | 0 | HAL_DATASPACE_V0_JFIF |

The framework adds the following usage flag to distinguish video from preview: GRALLOC_USAGE_HW_VIDEO_ENCODER
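When reading ConfigurePipeline logs, it can help to decode the hexadecimal format and usage fields into the names used in the stream table above. The following is a rough host-side sketch, not part of the HAL. The regular expression is written against the log lines shown earlier, the lookup tables contain only the values mentioned in this document plus GRALLOC_USAGE_HW_VIDEO_ENCODER (0x10000 in AOSP's gralloc.h), and the function names are hypothetical.

```python
import re

# Pixel formats quoted in this document (33 = 0x21, 34 = 0x22).
FORMATS = {
    0x21: "HAL_PIXEL_FORMAT_BLOB",
    0x22: "HAL_PIXEL_FORMAT_IMPLEMENTATION_DEFINED",
}

# Usage values quoted in this document, plus the video encoder flag.
USAGE_FLAGS = [
    (0x3, "GRALLOC_USAGE_SW_READ_OFTEN"),
    (0x100, "GRALLOC_USAGE_HW_TEXTURE"),
    (0x800, "GRALLOC_USAGE_HW_COMPOSER"),
    (0x10000, "GRALLOC_USAGE_HW_VIDEO_ENCODER"),
]

LINE_RE = re.compile(
    r"stream \d+: id (?P<id>\d+), type \d+, "
    r"res (?P<w>\d+)x(?P<h>\d+), format (?P<fmt>0x[0-9a-fA-F]+), "
    r"usage (?P<usage>0x[0-9a-fA-F]+)"
)


def decode_usage(usage: int) -> list:
    """Split a usage bitmask into known flag names; unknown bits stay as hex."""
    names = []
    rest = usage
    for bits, name in USAGE_FLAGS:
        if usage & bits == bits:
            names.append(name)
            rest &= ~bits
    if rest:
        names.append(hex(rest))
    return names


def parse_stream(line: str) -> dict:
    """Extract id, resolution, format and usage from one ConfigurePipeline line."""
    m = LINE_RE.search(line)
    if m is None:
        raise ValueError("not a ConfigurePipeline stream line")
    fmt = int(m["fmt"], 16)
    return {
        "id": int(m["id"]),
        "size": (int(m["w"]), int(m["h"])),
        "format": FORMATS.get(fmt, hex(fmt)),
        "usage": decode_usage(int(m["usage"], 16)),
    }
```

Bits that are not in the table are returned as a hexadecimal remainder rather than guessed, so an unexpected flag in a log remains visible.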

3. Raw support

3.1 System modification

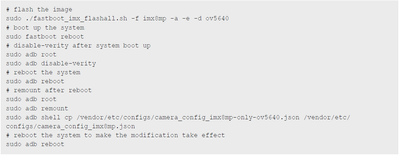

The default image for the i.MX 8M Plus EVK supports the basler + basler configuration, and the cameras work once the image is flashed and booted. That configuration uses the ISP, but we need the ISI to process raw data. Refer to Android_User's_Guide.pdf, and make sure you use the correct version, because Android12_2.0.0 differs from Android12_1.0.0. To make the cameras work with non-default images, execute the following additional commands:

Only OV5640 (CSI1) on host:

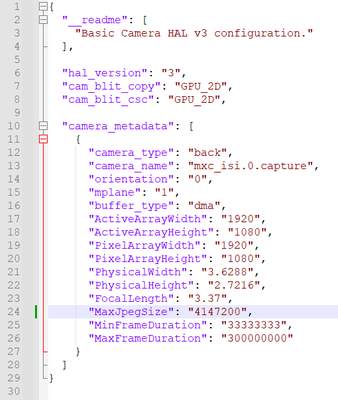

We use the OV2775, which supports 1920*1080 unpacked raw12. The json file:

3.2 DTB modification

1. First, change BoardConfig.mk to generate dtbo-imx8mp-ov2775.img

device\nxp\imx8m\evk_8mp\BoardConfig.mk:

    TARGET_BOARD_DTS_CONFIG += imx8mp-ov2775:imx8mp-evk-ov2775.dtb

2. Second, add imx8mp-evk-ov2775.dts to vendor\nxp-opensource\kernel_imx\arch\arm64\boot\dts\freescale

3. Change imx8mp-evk-ov2775.dts to connect the OV2775 to the ISI:

    &isi_0 {
        status = "okay";
    };

    &isp_0 {
        status = "disabled";
    };

    &dewarp {
        status = "disabled";
    };

4. Build the dtbo image and flash it to the board:

    ./imx-make.sh dtboimage -j4
    fastboot flash dtbo dtbo.img

3.3 Sensor driver modification

I use the OV2775 driver from the ISP side. Make sure that all the functions, such as g_frame_interval and enum_frame_size, are implemented; otherwise the HAL gets wrong parameters and returns an error.

    static struct v4l2_subdev_video_ops ov2775_subdev_video_ops = {
        .g_frame_interval = ov2775_g_frame_interval,
        .s_frame_interval = ov2775_s_frame_interval,
        .s_stream = ov2775_s_stream,
    };

    static const struct v4l2_subdev_pad_ops ov2775_subdev_pad_ops = {
        .enum_mbus_code = ov2775_enum_mbus_code,
        .set_fmt = ov2775_set_fmt,
        .get_fmt = ov2775_get_fmt,
        .enum_frame_size = ov2775_enum_frame_size,
        .enum_frame_interval = ov2775_enum_frame_interval,
    };

3.4 ISI driver modification

We need to add the raw formats to the ISI driver:

    }, {
        .name = "RAW12 (SBGGR12)",
        .fourcc = V4L2_PIX_FMT_SBGGR12,
        .depth = { 16 },
        .color = MXC_ISI_OUT_FMT_RAW12,
        .memplanes = 1,
        .colplanes = 1,
        .mbus_code = MEDIA_BUS_FMT_SBGGR12_1X12,
    }, {
        .name = "RAW10 (SGRBG10)",
        .fourcc = V4L2_PIX_FMT_SGRBG10,
        .depth = { 16 },
        .color = MXC_ISI_OUT_FMT_RAW10,
        .memplanes = 1,
        .colplanes = 1,
        .mbus_code = MEDIA_BUS_FMT_SGRBG10_1X10,
    }

3.5 Camera HAL modification

The pipeline contains both a preview stream and a capture stream.

The GPU does not support raw formats, so it prints error logs when the application sets a raw format:

02-10 18:49:02.162 436 436 E NxpAllocatorHal: convertToMemDescriptor Unsupported fomat PixelFormat::RAW10

02-10 18:49:02.163 2390 2445 E GraphicBufferAllocator: Failed to allocate (1920 x 1080) layerCount 1 format 37 usage 20303: 7

02-10 18:49:02.163 2390 2445 E BufferQueueProducer: [ImageReader-1920x1080f25m2-2390-0](id:95600000002,api:4,p:2148,c:2390) dequeueBuffer: createGraphicBuffer failed

02-10 18:49:02.163 2390 2405 E BufferQueueProducer: [ImageReader-1920x1080f25m2-2390-0](id:95600000002,api:4,p:2148,c:2390) requestBuffer: slot 0 is not owned by the producer (state = FREE)

02-10 18:49:02.163 2148 2423 E Surface : dequeueBuffer: IGraphicBufferProducer::requestBuffer failed: -22

02-10 18:49:02.163 2390 2445 E BufferQueueProducer: [ImageReader-1920x1080f25m2-2390-0](id:95600000002,api:4,p:2148,c:2390) cancelBuffer: slot 0 is not owned by the producer (state = FREE)

02-10 18:49:02.164 2148 2423 E Camera3-OutputStream: getBufferLockedCommon: Stream 1: Can't dequeue next output buffer: Invalid argument (-22)

Modifying the GPU code is not recommended.

For the preview stream, the pixel format is fixed to HAL_PIXEL_FORMAT_IMPLEMENTATION_DEFINED, which requires YUYV. This patch does not convert raw12 to YUYV; it just copies the buffer from input to output, so the preview stream actually carries raw12 data.

For the capture stream, we use the BLOB format, which is usually used for JPEG. When the camera HAL finds that the format passed down by the application is BLOB, it copies the buffer from input to output directly. You can check the details in the function ProcessCapturedBuffer().
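The pass-through behavior described above can be modeled in a few lines. This is only an illustrative sketch of the idea (copy the sensor frame unchanged when the stream format is BLOB or IMPLEMENTATION_DEFINED). It is not the code from ProcessCapturedBuffer(), and the function name and the size check are assumptions made for the example.

```python
HAL_PIXEL_FORMAT_BLOB = 33
HAL_PIXEL_FORMAT_IMPLEMENTATION_DEFINED = 34

# Stream formats for which the raw frame is copied without conversion.
PASSTHROUGH_FORMATS = {
    HAL_PIXEL_FORMAT_BLOB,
    HAL_PIXEL_FORMAT_IMPLEMENTATION_DEFINED,
}


def copy_raw_frame(stream_format: int, src: bytes, dst: bytearray) -> int:
    """Copy the raw sensor frame into the stream buffer and return the byte count.

    Hypothetical model: no raw12-to-YUYV conversion and no JPEG encoding,
    only a straight copy from input to output.
    """
    if stream_format not in PASSTHROUGH_FORMATS:
        raise ValueError(f"format {stream_format} is not handled by this sketch")
    if len(src) > len(dst):
        raise ValueError("output buffer is smaller than the raw frame")
    dst[:len(src)] = src  # same-length slice assignment, dst keeps its size
    return len(src)
```

One consequence of this design is that the consumer of either stream receives raw Bayer data even though the stream format says otherwise, so the application has to know the real sensor format.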

4. Application and Tool

4.1 Application

The test application is in the attachment "android-Camera2Basic-master_application.7z". It is basically a common camera application that sets the capture stream format to BLOB:

    Size largest = Collections.max(
            Arrays.asList(map.getOutputSizes(ImageFormat.JPEG)),
            new CompareSizesByArea());
    mImageReader = ImageReader.newInstance(largest.getWidth(),
            largest.getHeight(),
            ImageFormat.JPEG, /*maxImages*/2);

4.2 Tool

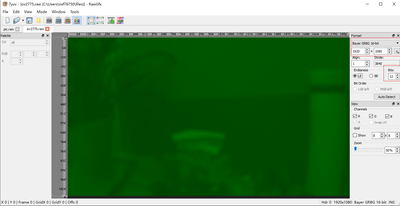

We use the 7yuv tool to check the raw12 data, whether it was captured by the application or dumped by the HAL. You need to set the parameters on the right side.

The ImageJ tool can also be used to review the raw format.
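If neither tool is at hand, a dumped frame can also be given a quick sanity check with a short script. The sketch below assumes that each unpacked raw12 sample occupies one little-endian 16-bit word with the 12 valid bits in the low bits; that layout is an assumption, so adjust `shift` (for example to 8) if the samples turn out to be MSB-aligned. It writes a grayscale PGM that shows the Bayer mosaic without demosaicing. The function names are hypothetical.

```python
import struct


def load_raw12_unpacked(data: bytes, width: int, height: int) -> list:
    """Read width*height 16-bit little-endian samples from a dump.

    The buffer may be larger than one frame (for example a BLOB buffer),
    so only the first width*height*2 bytes are used.
    """
    expected = width * height * 2  # 16 bits of storage per pixel
    if len(data) < expected:
        raise ValueError(f"need {expected} bytes, got {len(data)}")
    return list(struct.unpack(f"<{width * height}H", data[:expected]))


def to_pgm8(samples: list, width: int, height: int, shift: int = 4) -> bytes:
    """Scale samples to 8 bits and wrap them in a binary PGM (P5) image."""
    if len(samples) != width * height:
        raise ValueError("sample count does not match the resolution")
    pixels = bytes(min(s >> shift, 255) for s in samples)
    header = f"P5\n{width} {height}\n255\n".encode("ascii")
    return header + pixels
```

For the OV2775 configuration used here, a frame would be loaded with `load_raw12_unpacked(raw_bytes, 1920, 1080)`, which expects at least 1920 * 1080 * 2 = 4147200 bytes, and the result of `to_pgm8` can be written to a `.pgm` file and opened in most image viewers.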