Streaming Overlapped (Composed) Videos over RTP

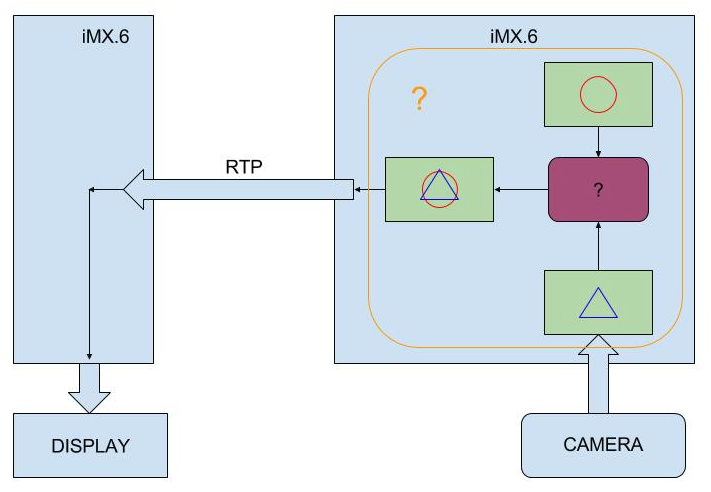

I am trying to implement a system that takes a live camera stream, overlays some text and symbols (using alpha-channel transparency), and transmits the result over RTP/UDP as a single video (from one port). Both the host and client systems are based on the i.MX6QP. For now, I am trying to understand GStreamer and its pipeline model with gst-launch, using only test patterns as video sources.

Here is a simplified diagram to show what I'm trying to achieve:

I have seen the videomixer plugin, but from what I understand, it is only used for overlaying and playing videos, not for creating 'transmittable' video streams. (I can use it with xvimagesink, but I could not get the pipeline to work with udpsink, and I could not find a workaround.)

I have not been able to find the right tools or methods to implement the system described above. Am I right about the videomixer plugin? If so, what do you suggest I do? Any help is appreciated, thanks in advance.

P.S. : I have asked the same question on S.O., but it seems that this is a more appropriate place.

Hello,

You may look at section 7 (Multimedia) of “i.MX_Linux_User's_Guide.pdf” regarding supported GStreamer features: in particular section 7.3.15 (Video composition) may help.

Summary page: i.MX 6 / i.MX 7 Series Software and Development Tool|NXP

Have a great day,

Yuri

Thank you so much for the guidance. Unfortunately, I will not have access to my setup for a week, so I cannot try the example pipelines with imxcompositor_g2d yet.

So you are saying that I can send the composed video (created with imxcompositor_g2d) out over Ethernet? The first example in the document you pointed to is this:

gst-launch-1.0 imxcompositor_{xxx} name=comp sink_1::xpos=160 sink_1::ypos=120 ! overlaysink videotestsrc ! comp.sink_0 videotestsrc ! comp.sink_1

If I modify the pipeline as below, would it work?

gst-launch-1.0 imxcompositor_{xxx} name=comp sink_1::xpos=160 sink_1::ypos=120 ! udpsink host=19.10.1.22 port=5000 videotestsrc ! comp.sink_0 videotestsrc ! comp.sink_1

As I said, I cannot try it for now, but I ran some tests with another plugin, imxg2dcompositor, using a similar pipeline structure, and could not receive the stream properly.
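A note on the modified pipeline: feeding the compositor output straight into udpsink would send raw frames with no RTP framing, so an RTP receiver would likely not be able to use it. A common pattern is to encode and RTP-payload the composed video before udpsink. The following is only a sketch under assumptions, not a tested i.MX6 pipeline: the variable names (RTP_HOST, RTP_PORT, COMPOSITOR, ENCODER) are hypothetical, the generic software elements compositor and x264enc stand in for the hardware elements, and H.264 with payload type 96 is an arbitrary choice. Element availability should be checked with gst-inspect-1.0 on the target.

```shell
#!/bin/sh
# Sender sketch: compose two test sources, encode, RTP-payload, send over UDP.
# ASSUMPTIONS (not from the thread): the variable names below, the choice of
# H.264, and the generic software elements `compositor` and `x264enc`.
RTP_HOST="${RTP_HOST:-19.10.1.22}"   # receiver address taken from the post above
RTP_PORT="${RTP_PORT:-5000}"
# Generic software compositor; on i.MX, substitute the imxcompositor_{xxx}
# element named in the user guide (exact name depends on the BSP).
COMPOSITOR="${COMPOSITOR:-compositor}"
# Software H.264 encoder; a hardware (VPU) encoder element name is BSP specific.
ENCODER="${ENCODER:-x264enc tune=zerolatency}"

SENDER_PIPELINE="$COMPOSITOR name=comp sink_1::xpos=160 sink_1::ypos=120 \
! videoconvert ! $ENCODER \
! rtph264pay config-interval=1 pt=96 \
! udpsink host=$RTP_HOST port=$RTP_PORT \
videotestsrc ! comp.sink_0 \
videotestsrc pattern=ball ! comp.sink_1"

# Print the command for review instead of running it blindly.
echo "gst-launch-1.0 $SENDER_PIPELINE"
```

To actually run it, pass the string to gst-launch-1.0 unquoted (gst-launch-1.0 $SENDER_PIPELINE). The rtph264pay option config-interval=1 makes the payloader resend SPS/PPS periodically, which helps a receiver that starts after the sender.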

Hello,

This is the advantage of using GStreamer: we can simply insert the needed (proper) element into the pipeline to get the required functionality.

Regards,

Yuri.
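The same "insert the needed element" idea applies on the receiving side, where the stream has to be depayloaded and decoded before display. The following is only a sketch, assuming the sender emits H.264 over RTP with payload type 96 on UDP port 5000; none of that is stated in the thread. The variable names (RTP_PORT, DECODER, RTP_CAPS) are hypothetical, and avdec_h264 is a generic software decoder standing in for whatever hardware decoder element the i.MX BSP provides.

```shell
#!/bin/sh
# Receiver sketch: receive RTP/H.264 over UDP, depayload, decode, display.
# ASSUMPTIONS (not from the thread): H.264 with payload type 96, port 5000,
# the variable names, and the generic software decoder `avdec_h264`.
RTP_PORT="${RTP_PORT:-5000}"
DECODER="${DECODER:-avdec_h264}"   # a VPU decoder element name is BSP specific
# udpsrc cannot guess what the datagrams contain, so the RTP caps are explicit.
RTP_CAPS="application/x-rtp,media=video,clock-rate=90000,encoding-name=H264,payload=96"

RECEIVER_PIPELINE="udpsrc port=$RTP_PORT caps=$RTP_CAPS \
! rtpjitterbuffer ! rtph264depay ! h264parse ! $DECODER \
! videoconvert ! autovideosink"

# Print the command for review instead of running it blindly.
echo "gst-launch-1.0 $RECEIVER_PIPELINE"
```

To run it, pass the string to gst-launch-1.0 unquoted (gst-launch-1.0 $RECEIVER_PIPELINE). The payload type and encoding name in RTP_CAPS must match whatever the sending pipeline actually uses.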