
On-chip temperature Calculations


I've commented 'often enough' about how to use the on-chip temperature-channel to calculate a temperature, especially as that relates to the existing AN3031 on that topic. I've made these 'temperature' routines a couple of times, and honestly I find AN3031 to be a little 'deep' trying so hard to rework the 'basic' formula into AtoD units everywhere. Maybe it is time to bring all my thoughts together here, and perhaps Freescale NXP (just be glad I didn't say Motorola...) will consider turning this into something 'official' --- like maybe AN3031-A?

This on-chip temperature measurement is based 'more or less' on well-known temperature characteristics of the activation-energy for a PN junction (Vf or Vbe). In any case, that electron-activation-energy physics sets the voltage, with 'secondary' influences only based on things like activation current, which is surely 'well controlled' over Vdd/Vref, making their influence 'negligible'.

In a K70 data sheet (K70P256M120SF3.pdf, to pick a part 'at random'), Vtemp25 is called out as 716mV, and the slope as 1.62mV/C (both typical). Those are 'absolutes' based on semiconductor physics -- the whole 'variation with Vdd' calculation in AN3031 is there because they wanted to represent everything directly in ADC steps, and as a % of Vref the Vtemp measurement varies. It is MUCH simpler to keep it all represented in 'true mV', dispensing with ADC units in a straight voltage conversion. Say your ADC reference is 3V (as from a REF3030 = 3.00V+/-0.6% (all errors) @75cents), so we can just define Vref_mV = 3000L. We bring in the raw right-justified 16-bit ADC conversion as cpuTemp, then the 'actual voltage' (kept here as mV to keep it all integer, in 32-bit math) is just:

Vtemp_mV=Vref_mV * cpuTemp / 65536
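A minimal C sketch of that conversion, assuming a right-justified 16-bit result and a reference expressed in mV. The function name `adc16_to_mv` is hypothetical, not from AN3031 or any NXP library.

```c
#include <stdint.h>

/* Convert a right-justified 16-bit ADC result to millivolts.
 * vref_mv is the ADC reference in mV (3000 in the example above).
 * The product stays inside 32 bits for any vref_mv up to 65535. */
static uint32_t adc16_to_mv(uint32_t vref_mv, uint16_t raw)
{
    return (vref_mv * (uint32_t)raw) / 65536u;   /* truncates toward zero */
}
```

With a 3000 mV reference, a raw reading of 16122 counts comes back as 738 mV.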

One can, of course, use a 'poor' Vref voltage source into the ADC, but 'coincidently' convert a 'better reference voltage' and FIRST work 'backwards' to what that means Vref IS (at that moment) from an assumption on THAT voltage, thus:

Vref_mV=65536 * ExtRefmV / Vmeasured_ref

where ExtRefmV is the assumed 'ideal' secondary reference in mV, and Vmeasured_ref is the right-justified 16-bit ADC conversion result of that voltage. That 'secondary reference' could be the on-chip bandgap, BUT note that the 'worst case' specs for THAT are generally +/-3% (hardly better than decent-grade 3.3V regulators...). More about 'error contributions' later.
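The back-calculation of Vref could be sketched like this in C. The name `vref_from_ext_ref_mv` and the zero-count guard are assumptions added for illustration.

```c
#include <stdint.h>

/* Work backwards from a known secondary reference to the actual ADC Vref.
 * ext_ref_mv: assumed 'ideal' value of the secondary reference, in mV.
 * ref_raw:    right-justified 16-bit conversion of that reference.
 * Returns Vref in mV, or 0 if the conversion result is unusable. */
static uint32_t vref_from_ext_ref_mv(uint32_t ext_ref_mv, uint16_t ref_raw)
{
    if (ref_raw == 0u) {
        return 0u;                      /* avoid dividing by zero */
    }
    /* 65536 * ext_ref_mv fits in 32 bits for ext_ref_mv up to 65535 */
    return (65536u * ext_ref_mv) / ref_raw;
}
```

As a purely illustrative case, a 1200 mV secondary reference that converts to 23831 counts implies a Vref of 3300 mV.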

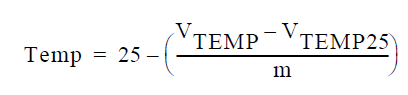

Once we have Vtemp as the on-chip sensor voltage at the current temperature, calculating the measured temperature is a simple application of the basic formula:

Temp = 25 - ((Vtemp - Vtemp25) / m)

except that for MY work I always 'multiply by the inverse' rather than divide using the slope 'm'. Which is to say that for the K70's slope of 0.00162V/C it is better to simply multiply by 617C/V (or mC/mV for my purposes), rather than try to divide by that floating-point fraction.

So, given the nominal Vtemp25 for said K70 as 716mV, we can set a simple constant vTemp25mV=716 (and TempSlope=617 to match). OR one can calibrate each chip at 25C (or I suppose ANY fixed reference point) and at 'some other temperature' to get an individual vTemp25mV and slope factor to store in the chip somewhere. In either case the final formula for the temperature in milliC is just:

intTemp_mC = 25000 -((Vtemp_mV - vTemp25mV) * TempSlope);

Or, rounded to integer Celsius:

    if( intTemp_mC < 0 )
        intTemp_C = (intTemp_mC - 500)/1000; // Round 'down' for negative
    else
        intTemp_C = (intTemp_mC + 500)/1000; // or UP for positive
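Pulled together as compilable C, the two steps might look like the sketch below. It assumes the K70 typical constants quoted above; the function names are hypothetical.

```c
#include <stdint.h>

#define VTEMP25_MV  716   /* K70 typical Vtemp25 in mV, from the data sheet */
#define TEMP_SLOPE  617   /* mC per mV, the inverse of 1.62 mV/C */

/* Sensor voltage in mV to temperature in milli-degrees C. */
static int32_t temp_mc_from_mv(int32_t vtemp_mv)
{
    return 25000 - (vtemp_mv - VTEMP25_MV) * TEMP_SLOPE;
}

/* Round milli-degrees to whole degrees, away from zero at the half point.
 * C integer division truncates toward zero, hence the signed offset. */
static int32_t round_mc_to_c(int32_t t_mc)
{
    return (t_mc < 0) ? (t_mc - 500) / 1000 : (t_mc + 500) / 1000;
}
```

A 738 mV reading gives 11426 mC, which rounds to 11 C; a 761 mV reading gives -2765 mC, which rounds to -3 C.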

Accuracy

The other thing everybody wants to know is 'how accurate' to expect the result to be. The 'short answer' is that the 'typical characteristics' work well in your favor, and you will GENERALLY get 'good enough' results. But rigorous engineering demands full analysis.

What follows is a more complete study of the potential error contributions (as opposed to the unsubstantiated 'general-numbers' given in AN3031).

AN3031 makes it look like 'fixed point math' will severely limit your results. Using 32-bit math, this is NOT going to be the case. You might note that my voltage representation in mV gives me an inherent resolution of 0.617C, or about 0.6C. That is small compared to the upcoming error considerations, but it is FREE to make it better if one is simply willing to look at the voltages in 'tenths of mV':

Vtemp_mVx10=(10*Vref_mV)*cpuTemp/65536

This still fits in 32-bit integer-math because the measured voltage is 'certainly less than a volt' (to be more precise, the 'worst case' K70 measurement for -50C would be 853mV), so the product of this tenth-mV ref-voltage representation and the ADC-measurement MUST be at most 30 bits. Say, for instance, the reference is 30,000 tenths-of-mV and thus a 1V-measurement-max (1/3 full-scale) is 21,845 counts; the product is 655,350,000, being 0x270F D8F0. More generally, for ANY reference voltage, the product of it represented in tenths-of-mV and the ADC-conversion that is the fraction of said full-scale represented by 1V will always be exactly that result (65,535 times 10,000 tenths-of-mV).

Then we just deal with the extra *10 factor in the next equation:

intTemp_mC = 25000 -((Vtemp_mVx10 - vTemp25mVx10) * TempSlope)/10L;

Thusly, integer math can be considered 'more than up to the task' (less than 0.1C truncation error), and NOT contribute noticeably to the final error.
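A sketch of the tenths-of-mV variant in C, again using the K70 typical values. The function name is hypothetical. Unsigned 32-bit math is used for the product, which gives headroom beyond the 30 bits worked out above (it would only overflow with a reference above roughly 6.5 V).

```c
#include <stdint.h>

#define VTEMP25_MVX10  7160   /* 716.0 mV expressed in tenths of mV */
#define TEMP_SLOPE     617    /* mC per mV */

/* Raw 16-bit ADC result to milli-degrees C, carrying the voltage in
 * tenths of mV so the truncation error stays below 0.1 C. */
static int32_t temp_mc_from_raw_x10(uint32_t vref_mv, uint16_t raw)
{
    uint32_t vtemp_mvx10 = (10u * vref_mv) * (uint32_t)raw / 65536u;
    int32_t  diff_x10    = (int32_t)vtemp_mvx10 - VTEMP25_MVX10;

    return 25000 - (diff_x10 * TEMP_SLOPE) / 10;
}
```

With a 3000 mV reference, 16122 counts still yields 11426 mC, while 16125 counts (three LSBs higher) yields 11365 mC, a step the whole-mV version could not resolve.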

Uncalibrated Chip error:

If you are 'unwilling' to calibrate each chip individually, you have to 'live' with the tolerance specified in the NXP datasheet for both Vtemp25 (offset error) AND TempSlope. From that same sample datasheet, Vtemp25 calls out +/-10mV, and the slope as +/-0.07mV/C.

At 0.617C/mV (typical slope) a +/-10mV 'zero error' is clearly +/-6.17C right there.

For a '25C difference' from the 25C starting point (0 or 50C measurement) a 0.07mV/C slope-error would contribute an additional 1.75mV error, or +/-1.08C.

Together these 'agree well' with the +/-8C 'Typical accuracy' shown in AN3031 for Uncalibrated Floating-point math (matched herein by 32-bit integer math).
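The same uncalibrated error budget can be written out as a small C helper. This is only a restatement of the arithmetic above using the quoted K70 tolerances (+/-10mV offset, +/-0.07mV/C slope); the function name is made up for illustration.

```c
#include <stdint.h>

/* Worst-case uncalibrated error in milli-degrees C for a measurement
 * delta_c degrees away from the 25 C reference point. */
static int32_t uncal_error_mc(int32_t delta_c)
{
    int32_t offset_mc, slope_mv_x100, slope_mc;

    if (delta_c < 0) {
        delta_c = -delta_c;                   /* distance from 25 C only */
    }
    offset_mc     = 10 * 617;                 /* 10 mV at 617 mC/mV = 6170 */
    slope_mv_x100 = 7 * delta_c;              /* 0.07 mV/C, in 1/100 mV */
    slope_mc      = slope_mv_x100 * 617 / 100;

    return offset_mc + slope_mc;
}
```

For a measurement 25 C away from the reference point this returns 7249, i.e. about +/-7.2C.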

What AN3031 FAILS to discuss is 'reference accuracy' impact.

IF you allow a 'garden variety' +/-3% as your Vref accuracy, this is what happens:

Let's 'assume' you measure a Vtemp of 738mV. If your 'VCC reference' IS 3.00V, each LSB will be .04577mV, so 738mV nets 16,122 counts. Using the default 716mV for vTemp25mV:

Vtemp_mV=3000L*cpuTemp/65536

returns to 738, and 25000 - (738 - 716)*617 yields 11,426mC or 11.4C.

IF your reference-point-voltage is ACTUALLY 3% 'low', each LSB (from 3V*97%) is .0444mV, so 738mV comes in at 16,620 counts. Using the same two equations, you will come up with a 761mV conversion result, making a -2.8C answer. A huge error! The 'small differences' in temperature voltages magnify the errors. The 761mV result is indeed 3% above the ideal 738mV return, BUT 'twice as far from 716', so our resulting 'difference from 25C' is twice as far as well. A variation on the butterfly effect. So this +/-3% error leads to about +/-15C error band across the board.

Working with the REF3030 as an external Vref driver gives me +/-0.6% WORST CASE deviation over devices/temp/age. A 0.6% 'low' reference, in the above test condition, yields 0.0455mV/LSB, a 738mV conversion result of 16,219 counts, calculating to 742mV (given the assumption of ideal Vref=3000mV), yielding a final 8.9C answer. Only 2.5C off... The error-band across the board is about +/-3C with this reference accuracy.
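The reference-error cases above can be reproduced with a small simulation. This is a sketch under stated assumptions: an ideal ADC that rounds to the nearest count, a conversion that rounds to the nearest mV (which is what matches the figures quoted), and the K70 typical constants. The function name is hypothetical.

```c
#include <stdint.h>

/* Temperature (mC) that the firmware would report for a true sensor
 * voltage vtemp_mv, when the ADC reference is really actual_vref_mv
 * but the code assumes assumed_vref_mv. */
static int32_t reported_temp_mc(uint32_t vtemp_mv,
                                uint32_t actual_vref_mv,
                                uint32_t assumed_vref_mv)
{
    /* Counts an ideal 16-bit ADC would return, rounded to nearest. */
    uint32_t counts  = (vtemp_mv * 65536u + actual_vref_mv / 2u) / actual_vref_mv;
    /* Voltage the firmware believes it measured, rounded to nearest mV. */
    uint32_t seen_mv = (assumed_vref_mv * counts + 32768u) / 65536u;

    return 25000 - ((int32_t)seen_mv - 716) * 617;
}
```

For a true 738 mV, `reported_temp_mc(738, 3000, 3000)` gives 11426, `reported_temp_mc(738, 2910, 3000)` gives -2765, and `reported_temp_mc(738, 2982, 3000)` gives 8958, matching the 11.4C, -2.8C and 8.9C cases in the text.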

Better reference parts are obviously available, like MAX6071AAUT30 (about +/-0.1% over devices/temp/age, for a buck and a half or so in volume). This can hold +/-1C across the conversion range for reference-induced error.

Conclusion:

A 'minimal effort' implementation using the on-chip bandgap as the reference point, and NO other 'per chip' calibration, COULD see errors exceeding 20C. The first step to take is a better reference -- you would want something more like 0.5% across devices, temperature, AND long-term aging. This ALONE will get you near to a 10C overall 'worst case' error band. The next real improvement would come from a single-point calibration somewhere near 25C, from which to calculate (and store, say, in the Flash-memory-controller program-once area) a per-chip vTemp25mV. A full two-point calibration is obviously ideal, AND 'COULD' inherently include calibration of your reference's tolerance and temperature drift, ASSUMING that they are 'consistent'. The NXP datasheets do NOT detail the 'causes' for the worst-case +/-3% (+/-2% from only 0 to 50C) error-band for the internal bandgap -- perhaps 'much' of THAT error would calibrate out -- and fall into calculated and stored vTemp25mV and TempSlope values.
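One way the single-point and two-point calibrations could be computed is sketched below, by rearranging the temperature formula used throughout. The type and function names are hypothetical, and how the results get stored (program-once area or elsewhere) is left out.

```c
#include <stdint.h>

typedef struct {
    int32_t vtemp25_mv;        /* per-chip sensor voltage at 25 C, in mV */
    int32_t slope_mc_per_mv;   /* per-chip inverse slope, in mC per mV */
} temp_cal_t;

/* Single point: solve T = 25000 - (V - V25) * S for V25, given one
 * measured voltage at a known temperature and an assumed slope S. */
static int32_t cal_one_point(int32_t v_mv, int32_t t_mc, int32_t slope)
{
    return v_mv + (t_mc - 25000) / slope;
}

/* Two points: derive the slope from both, then V25 from the first.
 * Returns 0 on success, -1 if the points cannot define a slope. */
static int cal_two_point(int32_t v1_mv, int32_t t1_mc,
                         int32_t v2_mv, int32_t t2_mc, temp_cal_t *out)
{
    if (v1_mv == v2_mv) {
        return -1;
    }
    out->slope_mc_per_mv = (t1_mc - t2_mc) / (v2_mv - v1_mv);
    if (out->slope_mc_per_mv == 0) {
        return -1;
    }
    out->vtemp25_mv = cal_one_point(v1_mv, t1_mc, out->slope_mc_per_mv);
    return 0;
}
```

As a sanity check with the typical numbers, a chip reading 738 mV at a known 11.426C calibrates to vTemp25mV = 716 when the typical slope of 617 is assumed.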


According to the data sheet for my chip, Vtemp25 = 1.396V, and I have two slopes, 3.266 mV/°C and 3.638 mV/°C. My Vdd, calculated from Vbg, is 3.3V.

The inverse of one of these slopes is 274. My Vtemp25mV is 0.001396 as the data sheet gives me this value in volts.

I took an averaged sample on AD22 for the internal temp and got a value of 1481. Using your equation I get

Vtemp_mV=3300L*1481/65536 = 74.57

intTemp_mC = 25000 -((74.57- 0.001396) * 274);

intTemp_mC = 4949.15, so I am reading an internal temperature of 5 degrees C?

Data is from this data sheet: https://www.nxp.com/docs/en/data-sheet/S9KEA128P80M48SF0.pdf


Hello,

I am using the bandgap voltage as a reference for my A/D channels and measuring the temperature. Somehow, in repeated measurements, I see the ADC reading of my bandgap voltage to be half of what is expected.

As in,

ADC Resolution / System Voltage = ADCreading BandGap/ BandGap voltage

//4095/5(VoltsAnalog) = ADCreading BandGap/1.16(VoltsAnalog)

//ADCreading BandGap = 950 (approx)

What I am looking at the ADCResult register is around 350 to 500 value in decimal.

I would like to know what steps should be taken to debug this. Thanks.

I am using a 5 Volt system MKE02Z-FRDM (MKE02Z64VQH4) device with Channel 0 as bandgap and Channel 1 as internal temperature sensor. Processor Expert is being used to setup the software for this.


Earl

I notice you use

intTemp_mC = 25000 -((Vtemp_mV - vTemp25mV) * TempSlope);

whereby the data sheets state

"Temp sensor slope Across the full temperature" to be typically 1.62mV/°C (that is, +ve).

That would imply

intTemp_mC = 25000 +((Vtemp_mV - vTemp25mV) * TempSlope);

I wonder why the data sheet doesn't give its polarity as one would expect???

Regards

Mark