IMX8MP use ISI with ISP

Hello community,

I have connected a RAW10 camera to the ISP of the IMX8MP. Is it possible to route the output stream of the ISP into the ISI to convert the YUV image to RGB?

Looking at the TRM, I am not sure whether it is possible.

BR

Hi,

Does the IMX8MPlus support the RAW16 format? If so, could you send me reference designs or documents?

Hi Quercuspau,

Did you actually succeed in capturing RGB?

I found your suggestion to read from AXI interesting. It would be great if we could do that.

For snapshots I am using Bayer RG12 raw images that I convert to RGB using a software ISP. This is not practical for live video, though.

BR
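
For readers unfamiliar with the software route mentioned above, here is a minimal sketch of the simplest possible debayer step, not the poster's actual software ISP. It assumes the RG12 buffer follows the usual V4L2 layout (RGGB order, each 12-bit sample in a 16-bit little-endian word) and that lines carry no padding; the function name is hypothetical. Each 2x2 Bayer cell is collapsed into one RGB pixel, so the output has half the width and height.

```python
import struct


def demosaic_rggb12_half(raw: bytes, width: int, height: int):
    """Collapse each 2x2 RGGB cell into one 12-bit RGB pixel (half resolution)."""
    if width % 2 or height % 2:
        raise ValueError("width and height must be even")
    if len(raw) != width * height * 2:
        # Assumption: no line padding, two bytes per sample.
        raise ValueError("unexpected buffer size")

    # Each sample is a 16-bit little-endian word; keep the 12 valid bits.
    samples = [s & 0x0FFF for s in struct.unpack(f"<{width * height}H", raw)]

    rows = []
    for y in range(0, height, 2):
        row = []
        for x in range(0, width, 2):
            r = samples[y * width + x]             # top-left: red
            g1 = samples[y * width + x + 1]        # top-right: green
            g2 = samples[(y + 1) * width + x]      # bottom-left: green
            b = samples[(y + 1) * width + x + 1]   # bottom-right: blue
            row.append((r, (g1 + g2) // 2, b))
        rows.append(row)
    return rows
```

A pure-Python loop like this is far too slow for live video, which matches the remark above; it only illustrates the data layout.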

Hi Malik,

Yes, it is possible. You need to enable the M2M node of ISI0 in the device tree. Then you will be able to use the GStreamer v4l2convert element to convert YUV to RGB, for instance:

gst-launch-1.0 v4l2src device=/dev/video2 num-buffers=1 io-mode=dmabuf ! video/x-raw, width=1920, height=1080 ! v4l2convert output-io-mode=dmabuf-import ! video/x-raw, width=1920, height=1080, format=RGB ! filesink location=image_1080p_RGB888.rgb

BR
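
The pipeline above hard-codes /dev/video2, and the node numbering can change once the M2M node is enabled. As a hedged aid, the sketch below lists the V4L2 nodes with the names their drivers report in sysfs. The helper names are hypothetical, and the assumption that the ISI memory-to-memory device has "m2m" in its name should be checked on your own BSP.

```python
from pathlib import Path


def list_video_devices(sysfs_root="/sys/class/video4linux"):
    """Return {node name: driver-reported name} for every videoN entry."""
    devices = {}
    root = Path(sysfs_root)
    if not root.is_dir():
        return devices
    for entry in sorted(root.iterdir()):
        name_file = entry / "name"
        if entry.name.startswith("video") and name_file.is_file():
            devices[entry.name] = name_file.read_text().strip()
    return devices


def find_devices(devices, needle):
    """Return /dev paths whose reported name contains `needle` (case-insensitive)."""
    needle = needle.lower()
    return ["/dev/" + node for node, name in devices.items()
            if needle in name.lower()]
```

Calling `find_devices(list_video_devices(), "m2m")` on the target would then show which node, if any, looks like the memory-to-memory device that v4l2convert relies on.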

Thank you for the feedback,

YUV 4:2:2 is already chroma-subsampled. Converting it back to RGB does not restore the original color resolution; the missing chroma samples have to be computed. The idea is to get the best possible quality after the ISP.
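
To make the subsampling point concrete, here is a minimal sketch of a YUYV to RGB888 conversion. It assumes BT.601 limited-range coefficients, which may not match what the ISP is configured to output, and it simply reuses one U/V pair for both pixels of each pair rather than interpolating. The function names are hypothetical.

```python
def _clip8(value: int) -> int:
    """Clamp an integer to the 0..255 range."""
    return max(0, min(255, value))


def yuyv_to_rgb(buf: bytes):
    """Convert packed YUYV (Y0 U Y1 V) bytes to a list of (R, G, B) tuples."""
    if len(buf) % 4:
        raise ValueError("YUYV data must be a multiple of 4 bytes")
    pixels = []
    for i in range(0, len(buf), 4):
        y0, u, y1, v = buf[i:i + 4]
        d, e = u - 128, v - 128          # one chroma pair shared by two pixels
        for y in (y0, y1):
            c = 298 * (y - 16)           # BT.601 limited range (assumption)
            pixels.append((
                _clip8((c + 409 * e + 128) >> 8),
                _clip8((c - 100 * d - 208 * e + 128) >> 8),
                _clip8((c + 516 * d + 128) >> 8),
            ))
    return pixels
```

Every four input bytes yield two RGB pixels that differ only in luma, which is exactly the color detail that 4:2:2 has already discarded.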

In my case, the sensor (imx477) actually provides 4056x3040 pixels at 12 bpp. After ISP processing, only 8-bit YUYV is left.

After demosaicing, the ISP has 12-bit RGB internally, as can be seen in the ISP pipeline flow chart. However, this HDR picture is down-converted to YUYV (YUV 4:2:2) before it is written to the AXI bus.

The question is how to get an ISP-processed image with a higher bit depth. The intermediate ISP steps must be somewhere in memory. Ideally I am interested in 12 bpp RGB, or at least 8-bit RGB.

BR, MC
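
As a rough illustration of what is at stake in the figures above, the sketch below compares per-frame sizes and tonal levels for the formats mentioned. The bits-per-pixel values are the nominal ones for each layout (12 for tightly packed raw, 16 for YUYV, 24 for RGB888) and ignore any line padding; the helper names are hypothetical.

```python
def frame_bytes(width: int, height: int, bits_per_pixel: int) -> int:
    """Size of one unpadded frame in bytes."""
    return width * height * bits_per_pixel // 8


def levels(bit_depth: int) -> int:
    """Number of distinct values a sample of the given depth can hold."""
    return 2 ** bit_depth


def to_8bit(sample12: int) -> int:
    """Naive 12-bit to 8-bit reduction: drop the 4 least significant bits."""
    return sample12 >> 4


WIDTH, HEIGHT = 4056, 3040  # sensor resolution quoted in the post

SIZES = {
    "raw12_packed": frame_bytes(WIDTH, HEIGHT, 12),
    "yuyv_8bit": frame_bytes(WIDTH, HEIGHT, 16),
    "rgb888": frame_bytes(WIDTH, HEIGHT, 24),
}
```

A 12-bit sample distinguishes `levels(12)` values against `levels(8)` for an 8-bit one, so the 8-bit YUYV output loses both tonal resolution and half of the chroma samples compared with the internal 12-bit RGB.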

Maybe an NXP employee can answer your last question. My assumption is that, since the ISP is a closed peripheral with its own static RAM, you cannot get the data from its intermediate steps. So you will be forced to debayer the RAW output yourself using SoftISP.

BR

You can use the ISP directly; you don't need the ISI. You can refer to the dts file that supports the Basler camera via the ISP.

The ISP only provides YUV or RAW, whereas the ISI is able to deliver RGB. I would like to connect both ISI channels to one ISP stream to get RGB video streams in different formats.

BR

One can use the GPU to convert YUV to RGB.

Is it not possible to do it with ISI?

BR

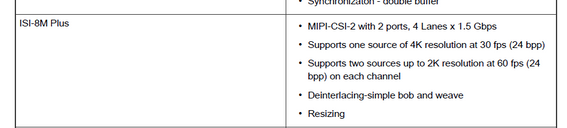

ISI is supposed to connect to mipi csi2 interface, pls see the pic as below:

Hello @joanxie ,

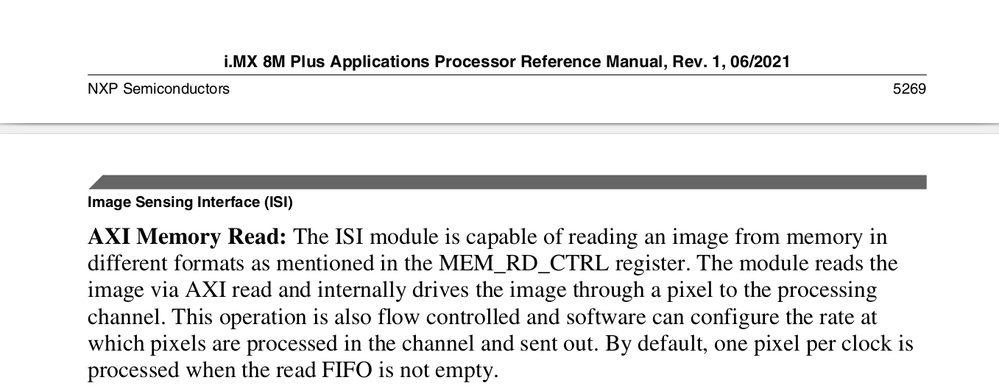

I just found this information on TRM:

However, I haven't found any document about how to use this functionality with NXP Linux BSP.

Could you provide this information?

BR

The ISI supports an isi.m2m device and an isi.cap device. You can test with the ov5640, and you can refer to the dts file:

&isi_0 {
	status = "okay";

	/* capture device */
	cap_device {
		status = "okay";
	};

	/* memory-to-memory device */
	m2m_device {
		status = "okay";
	};
};

&isi_1 {
	status = "disabled";

	cap_device {
		status = "okay";
	};
};

They both connect to the camera. For the ISI source code, one can refer to:

Is there no more information?

BR

To explain again: memory can be the source for the ISI, but the current BSP doesn't support this. I consulted the local team; no one has done this and there is no sample for it. You may need to ask for professional support for your special requirements.