Zero copy between GPU and VPU

I am trying to take physical buffers from the imxvpudec GStreamer element, modify them on the GPU with GLES and EGL, and then pass them to the VPU encoder (imxvpuenc_h264).

For passing the memory used by the decoder to the GPU, I assume I can use the glTexDirectVIVMap function as here: gstreamer-imx/gles2_renderer.c at master · Freescale/gstreamer-imx · GitHub .

However, I am not so sure how to pass the memory to the encoder after having modified it with the GPU. I saw this thread: Texture input to video encoder? . However it is from 2013, so I guess some things may have changed since then.

The accepted solution in the thread was to use the virtual framebuffer from here: GitHub - Freescale/linux-module-virtfb: Virtual frame buffer driver for iMX devices . Is this still the recommended approach? If so, I guess some updates are in order, since it segfaults on initialization. My error trace is here: Segmentation fault on module initialization · Issue #2 · Freescale/linux-module-virtfb · GitHub

In the same thread, AndreSilva says that the glTexDirectVIV function can't be used to get a pointer that can be read from, so I can't pass it to the vpu encoder. However, maybe such a function has been implemented since then?

Update:

I have modified the eglvivsink from gstreamer-imx so that, after it has rendered to my virtual framebuffer, it writes the physical address of the framebuffer to the buffer before sending it downstream. However, I have encountered a problem with OpenGL on i.MX6: the GL_EXT_yuv_target extension is not supported by the GPU, so it can only render RGB data to the framebuffer.

This means that in order to encode the rendered image with the VPU, I first need to do an RGBA to planar YUV conversion. The entire point of using zero copy between GPU and VPU was that I wouldn't need to deal with the annoying color space restrictions of the g2d system, so it seems like my solution is worthless for this scenario.
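To make the cost of that extra step concrete, here is a minimal CPU reference sketch of an RGBA to planar YUV (I420) conversion. It is only an illustration: the BT.601 limited-range coefficients, the 2x2 chroma averaging, and the function names are assumptions, not something taken from gstreamer-imx or the VPU documentation.

```c
#include <stddef.h>
#include <stdint.h>

/* Assumption: BT.601 limited-range matrix in 8.8 fixed point.
 * The offsets are added before the shift so the value never goes negative. */
static uint8_t rgb_to_y(int r, int g, int b)
{
    return (uint8_t)((66 * r + 129 * g + 25 * b + 128 + (16 << 8)) >> 8);
}

static uint8_t rgb_to_u(int r, int g, int b)
{
    return (uint8_t)((-38 * r - 74 * g + 112 * b + 128 + (128 << 8)) >> 8);
}

static uint8_t rgb_to_v(int r, int g, int b)
{
    return (uint8_t)((112 * r - 94 * g - 18 * b + 128 + (128 << 8)) >> 8);
}

/* Hypothetical helper: converts tightly packed RGBA to I420.
 * width and height must be even; alpha is ignored. */
static void rgba_to_i420(const uint8_t *rgba, int width, int height,
                         uint8_t *y_plane, uint8_t *u_plane, uint8_t *v_plane)
{
    for (int y = 0; y < height; y += 2) {
        for (int x = 0; x < width; x += 2) {
            int rs = 0, gs = 0, bs = 0;

            /* Full-resolution luma, plus RGB sums for the 2x2 block. */
            for (int dy = 0; dy < 2; dy++) {
                for (int dx = 0; dx < 2; dx++) {
                    size_t idx = (size_t)(y + dy) * width + (size_t)(x + dx);
                    const uint8_t *p = rgba + 4 * idx;
                    y_plane[idx] = rgb_to_y(p[0], p[1], p[2]);
                    rs += p[0];
                    gs += p[1];
                    bs += p[2];
                }
            }

            /* One chroma sample per 2x2 block, from the rounded average. */
            int r = (rs + 2) / 4, g = (gs + 2) / 4, b = (bs + 2) / 4;
            size_t c = (size_t)(y / 2) * (size_t)(width / 2) + (size_t)(x / 2);
            u_plane[c] = rgb_to_u(r, g, b);
            v_plane[c] = rgb_to_v(r, g, b);
        }
    }
}
```

Doing this on the CPU for every 1080p frame touches each pixel once more, which is exactly the kind of copy the zero-copy path was meant to avoid.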

Hi Erlend,

there is another demo we have that might help you somehow:

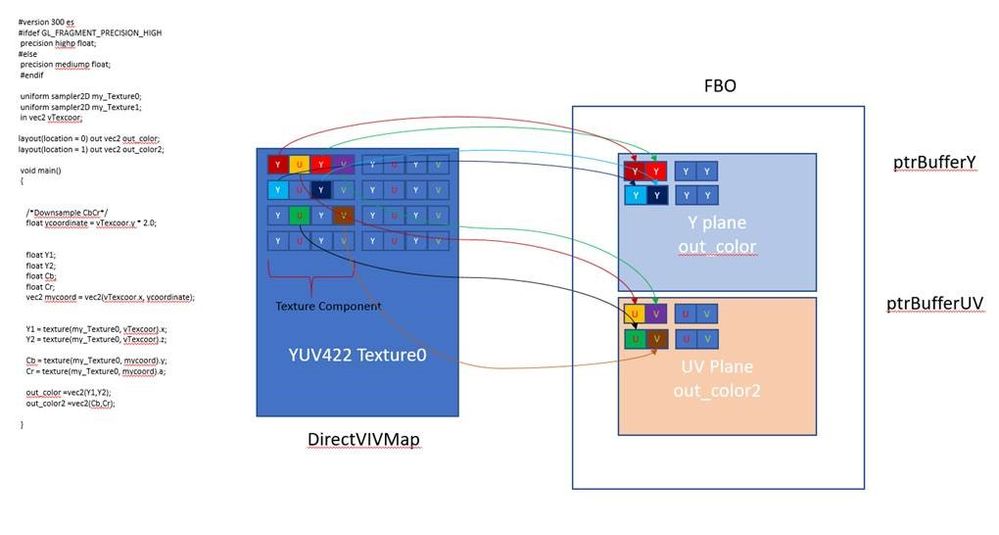

Attached is the patch (0001-YUYV-to-NV12-Converter.patch) to generate the YUYV -> NV12 converter using the GPU. I modified the DirectMultiSamplingVideoYUV for simplicity.

The 0001-GPU-YUV-RGB.patch just modifies the original DirectMultiSamplingVideoYUV project.

The solution is developed in Linux using the GPU SDK gtec-demo-framework/DemoApps/GLES3/DirectMultiSamplingVideoYUV at master · NXPmicro/gtec-demo-framew...

The application creates a video in NV12 format from the camera output in YUV422 (YUYV).

The example now works as follows:

1.- GStreamer is set to output the camera video in the default YUV422 (YUYV) format.

2.- The GPU maps a texture directly to the video buffer. The texture is defined as RGBA because we need four 8-bit components (YUYV).

3.- Two color attachments are created as render targets. These render targets will contain the Y and UV components of the NV12 format.

4.- In the fragment shader, the Y contents are copied to the first render target. Cb and Cr are downsampled by ½ in the fragment shader and copied to the second render target.

5.- A PBO is used to get a reference to the pixels in each render target, so we have one pointer to the Y components and a different one to the UV components.
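For reference, the data movement in steps 2 to 4 can be sketched on the CPU as below. This is not the shader from the patch, only an illustration of the same YUYV to NV12 mapping. The function name is hypothetical, and averaging two rows for the vertical chroma downsample is an assumption (the shader may simply pick one row).

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical CPU reference for the YUYV -> NV12 mapping.
 * YUYV stores two pixels as Y0 Cb Y1 Cr (4 bytes), so chroma is already
 * halved horizontally; NV12 also halves it vertically.
 * width and height must be even. */
static void yuyv_to_nv12(const uint8_t *yuyv, int width, int height,
                         uint8_t *y_plane, uint8_t *uv_plane)
{
    const size_t src_stride = (size_t)width * 2;

    /* First render target: copy every luma sample unchanged. */
    for (int row = 0; row < height; row++) {
        const uint8_t *src = yuyv + (size_t)row * src_stride;
        for (int col = 0; col < width; col++)
            y_plane[(size_t)row * width + col] = src[2 * col];
    }

    /* Second render target: interleaved CbCr, one row per two source rows.
     * Assumption: the two rows are averaged with rounding. */
    for (int row = 0; row < height; row += 2) {
        const uint8_t *top = yuyv + (size_t)row * src_stride;
        const uint8_t *bot = top + src_stride;
        uint8_t *dst = uv_plane + (size_t)(row / 2) * width;

        for (int pair = 0; pair < width / 2; pair++) {
            dst[2 * pair]     = (uint8_t)((top[4 * pair + 1] + bot[4 * pair + 1] + 1) / 2);
            dst[2 * pair + 1] = (uint8_t)((top[4 * pair + 3] + bot[4 * pair + 3] + 1) / 2);
        }
    }
}
```

The Y plane needs width × height bytes and the UV plane width × height / 2 bytes, which matches the two separate pointers mentioned in step 5.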

This is better shown below:

Regards,

Andre

Thank you Andre, this was very helpful. Will definitely have another go at this.

At the moment I am instead trying to use the blending functionality in g2d on just the Y plane of the NV12 image, so that I won't need to convert back and forth between rgb and planar yuv just to overlay text in the image.
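The idea of blending on the Y plane only can be sketched on the CPU as follows. This does not use the g2d API; it is a plain C illustration under stated assumptions: the text is available as an 8-bit coverage mask, and the function name and parameters are hypothetical.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical helper: alpha-blends an 8-bit coverage mask (0 = transparent,
 * 255 = opaque) onto the Y plane of an NV12 frame. The UV plane is left
 * untouched, so the text keeps the chroma of the pixels underneath it.
 * The caller must ensure the mask rectangle lies inside the frame. */
static void blend_text_on_luma(uint8_t *y_plane, int y_stride,
                               const uint8_t *mask, int mask_w, int mask_h,
                               int dst_x, int dst_y, uint8_t text_luma)
{
    for (int row = 0; row < mask_h; row++) {
        uint8_t *dst = y_plane + (size_t)(dst_y + row) * y_stride + dst_x;
        const uint8_t *a = mask + (size_t)row * mask_w;

        for (int col = 0; col < mask_w; col++) {
            int alpha = a[col];
            /* Standard "over" blend with rounding, in integer math. */
            dst[col] = (uint8_t)((dst[col] * (255 - alpha)
                                  + text_luma * alpha + 127) / 255);
        }
    }
}
```

Because only luma changes, the overlay cannot have its own color, but no conversion between RGB and planar YUV is needed.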

Glad it helped.

Regards,

Andre

I was able to somewhat resolve the segmentation fault in the virtual framebuffer kernel module: Segmentation fault on module initialization · Issue #2 · Freescale/linux-module-virtfb · GitHub . Not sure if it works like it's supposed to though.

I am glad you got it working.

Good!

Thank you for the code sample. It was helpful insofar as showing how to map memory from vpudec into a texture.

But as I mentioned, I also need to encode the texture data afterwards, using imxvpuenc_h264. This is why I'm looking at the virtual framebuffer solution from that thread.

My gstreamer pipeline looks like this:

imxv4l2videosrc device=/dev/video1 input=0 fps=30/1 ! "image/jpeg, width=1920,height=1080, framerate=30/1" ! queue max-size-bytes=0 max-size-time=0 max-size-buffers=0 ! imxvpudec ! zerocopygpuoverlay ! imxvpuenc_h264 bitrate=14000 ! h264parse ! mp4mux ! filesink location=/videos/out.mp4

Here "zerocopygpuoverlay" is my custom element, which modifies the YUV data directly without copying it into or out of the texture.