ADC Hardware Averaging Question


I need to know how the hardware averaging works so that I can use it effectively without altering my read timing. If my model samples the ADC every 0.1 ms using the PDB trigger and I set hardware averaging to 4 samples, will I get an averaged sample every 0.4 ms? Or does the hardware averaging take my step size into account and sample every 0.025 ms, so that I get an averaged sample every 0.1 ms?

For reference, I'm using a tapped delay to get an RMS value for the sine wave input signal and I need to know exactly how many delays I need. I need to know if that RMS value goes above some threshold in a sample period. For instance, if the RMS value goes above 100mV and stays for a period of 40ms, I want to trigger a flag. If it crosses the threshold and dips back below in less than 40ms I will disregard it.
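The "above threshold for 40 ms" rule described above can be sketched as a sample counter that resets whenever the RMS value dips back below the threshold. This is only an illustration, not code generated by the toolbox: the names `rms`, `samples_for`, and `PersistenceDetector` are hypothetical, and the 0.1 ms step and 100 mV threshold are taken from the figures in the post.

```python
import math


def rms(samples):
    """Root mean square of a window of samples."""
    return math.sqrt(sum(x * x for x in samples) / len(samples))


def samples_for(duration_s, sample_period_s):
    """Number of model steps that span the given duration."""
    return round(duration_s / sample_period_s)


class PersistenceDetector:
    """Raise a flag only after the input stays above a threshold
    for a given number of consecutive samples (hypothetical helper)."""

    def __init__(self, threshold, hold_samples):
        self.threshold = threshold
        self.hold_samples = hold_samples
        self.count = 0

    def update(self, value):
        # Count consecutive samples above the threshold; any dip resets the count.
        if value > self.threshold:
            self.count += 1
        else:
            self.count = 0
        return self.count >= self.hold_samples


# Assumed figures from the post: 0.1 ms step, 100 mV threshold, 40 ms hold time.
detector = PersistenceDetector(threshold=0.100,
                               hold_samples=samples_for(40e-3, 0.1e-3))
```

Calling `detector.update(rms_value)` once per model step returns `True` only once the RMS value has stayed above the threshold for the full hold time. A shorter excursion is discarded because the counter resets.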


Hello sparkee,

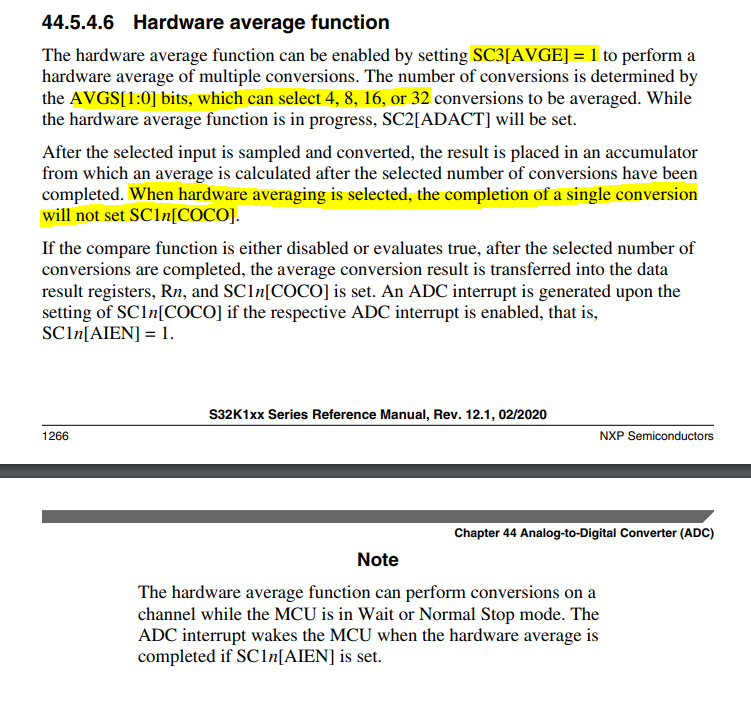

The best answer to the question "How does ADC hardware averaging work?" is in the ADC chapter of the Reference Manual: https://www.nxp.com/webapp/Download?colCode=S32K1XXRM

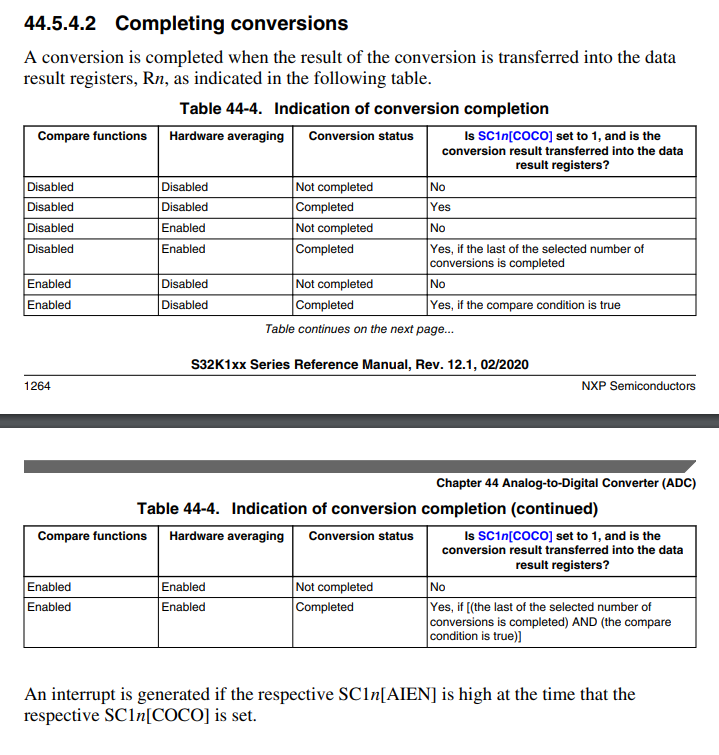

Now, all the toolbox does when you enable the hardware averaging and samples to average checkboxes in the config block is set the AVGE bit and the AVGS1 and AVGS0 bits. From what I've read, once a conversion is triggered, the hardware performs as many conversions as selected (4, 8, 16, or 32), and when it has finished that number, the averaging takes place. Once the average is computed, the conversion complete interrupt is triggered. So I don't think the hardware averaging takes the sample time into consideration at all.
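If that reading is right, each trigger still yields exactly one averaged result, so the result rate follows the trigger period as long as the burst of conversions finishes before the next trigger. Here is a rough sketch of that arithmetic. The function name is hypothetical and the single-conversion time is an assumed input: the real value depends on the ADC clock and sample-time settings and should be taken from the Reference Manual.

```python
def averaged_conversion(trigger_period_s, single_conv_s, n_avg):
    """Return (burst_time_s, fits) for one hardware-averaged conversion.

    burst_time_s: approximate time for the n_avg back-to-back conversions
                  started by one trigger (fixed overhead is ignored here).
    fits:         True if the burst finishes before the next trigger.
    """
    if n_avg not in (4, 8, 16, 32):
        raise ValueError("hardware averaging supports 4, 8, 16 or 32 samples")
    burst_time_s = n_avg * single_conv_s
    return burst_time_s, burst_time_s < trigger_period_s


# Assumed example: 0.1 ms trigger period, 2 us per single conversion (illustrative).
burst, fits = averaged_conversion(100e-6, 2e-6, 4)
```

With these assumed numbers the four conversions take about 8 µs, well inside the 0.1 ms trigger period, so an averaged result would be available every 0.1 ms rather than every 0.4 ms.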

To be honest, I don't know how that delay block works in the generated code, or whether it busy-waits.

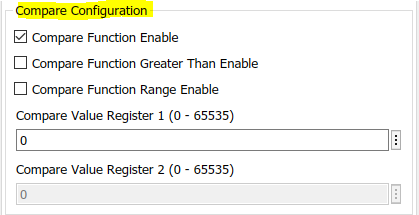

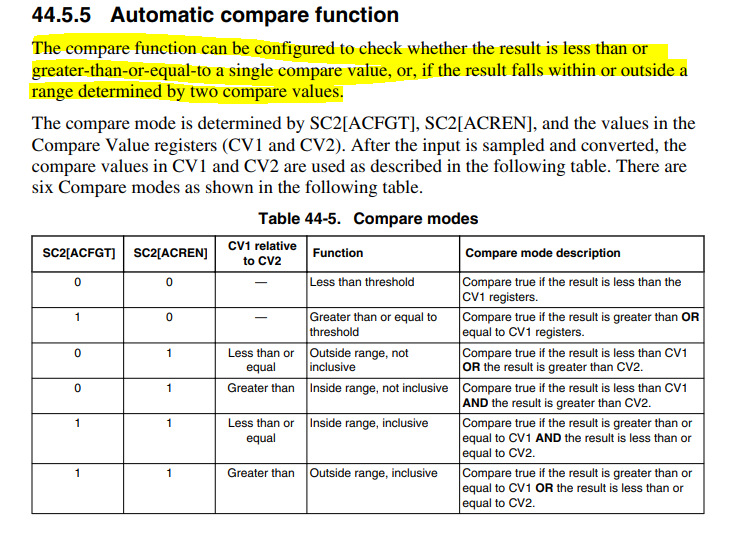

Reading your explanation reminded me of another hardware feature: the automatic compare function, which we also support in the configuration block. What is nice about it is that the ADC can be set to provide a conversion result only if certain threshold conditions are met. Please have a look at the screenshots from the Reference Manual.

So I recommend reading the ADC functional description (Chapter 44.5 of the Reference Manual); using the ADC automatic compare function may make your task easier. The only remaining question is how to measure those 40 ms of above-threshold time, but I think we can figure it out.

Hope this helps,

Marius


mariuslucianandrei to the rescue again, thank you. I’ve read that section of the reference manual before but sadly did not make the right connection in my brain until you highlighted the lines. I still had to read the line twice to make sure I understood it correctly. I was mostly sure this was how the averaging worked but wanted to double-check so that I didn’t have major issues later.

As for the hardware compare, I was aware of it and planned to use it for one portion of my design, but I need to make multiple comparisons simultaneously, so I'm trying to do that in software.

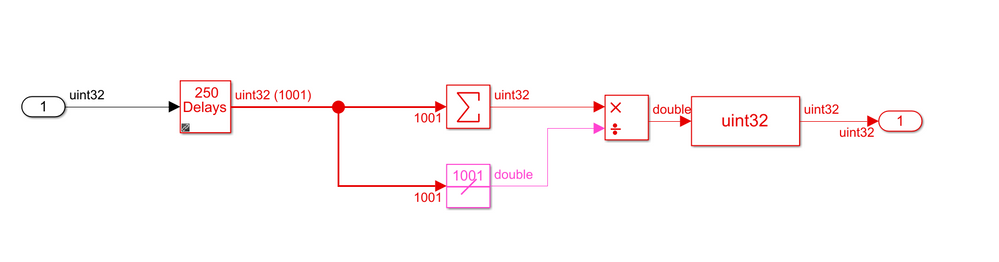

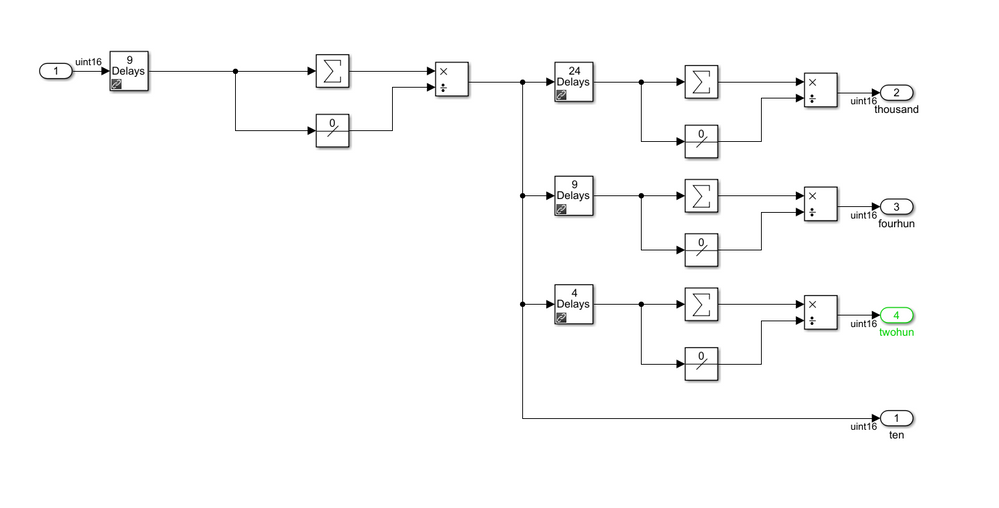

Here's a shot of what I'm doing right now:

I'm nesting the delays so that I don't need a single tapped delay of 1000 delays. I'm using hardware averaging at 4, then a 9-tap delay (plus the current sample, for 10 samples), then splitting out to more tapped delays to get 200/400/1000 samples for comparison. I'll then compare those averages to my set points, which are different for each sample size. The shorter the sample size, the higher the signal will have to be before I throw a flag.
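One way to picture the nesting is a two-stage average: a first stage that emits one mean per 10 samples, feeding second-stage windows of 20, 40, and 100 block means that span 200, 400, and 1000 samples. This is a sketch only, not the Simulink model from the screenshot. The class names are hypothetical, and it assumes the first stage is decimated (one output per 10 inputs), which the post does not state.

```python
from collections import deque


class BlockMean:
    """Emit one mean per `size` inputs (non-overlapping blocks)."""

    def __init__(self, size):
        self.size = size
        self.buf = []

    def push(self, x):
        self.buf.append(x)
        if len(self.buf) < self.size:
            return None  # block not complete yet
        mean = sum(self.buf) / self.size
        self.buf.clear()
        return mean


class SlidingMean:
    """Mean over the last `size` inputs; None until the window is full."""

    def __init__(self, size):
        self.win = deque(maxlen=size)

    def push(self, x):
        self.win.append(x)
        if len(self.win) < self.win.maxlen:
            return None
        return sum(self.win) / len(self.win)


def cascaded_means(samples):
    """Return the latest 200/400/1000-sample means for a sample stream."""
    stage1 = BlockMean(10)
    stage2 = {200: SlidingMean(20), 400: SlidingMean(40), 1000: SlidingMean(100)}
    latest = {}
    for s in samples:
        block = stage1.push(s)
        if block is None:
            continue
        for span, window in stage2.items():
            value = window.push(block)
            if value is not None:
                latest[span] = value
    return latest
```

Because the blocks are equal in size, the mean of the block means equals the mean of the underlying samples. For an RMS comparison the same structure would need to be fed squared samples, with the square root taken on the final mean.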

Now that I'm walking through this, I see there may be an issue with my logic on when to throw a flag, so I'm going to have to think it through to make sure my approach will work for the desired outcome.

Thanks Marius!