IMX8M CSI-MIPI data rate settings - start of transmission error on high data rate

Hello, I am developing a kernel driver for the OmniVision MIPI CSI camera OH02A10 on the IMX8M processor.

The driver works totally fine, but only if I configure the PLLs of the sensor to output a 360MHz MIPI PHY clock and 45MHz pixel clock, while the sensor normally runs on a 720MHz MIPI PHY clock and 90MHz pixel clock.

Therefore I can only achieve half of the data rate of the sensor, which is 1920x1080, 60fps, 10-bit raw over two lanes (I get 30fps instead).

I have double-checked the sensor's registers against the register settings I got from OmniVision for 720MHz mode, so the sensor should be fine.

When I try to stream at 720MHz, I get the ErrSotSync and ErrSotHs errors (start-of-transmission errors).

I am trying to debug this, but it is hard to find any information I can rely on, because the IMX8M Reference Manual is still missing this part and it's not clear which other Reference Manual applies.

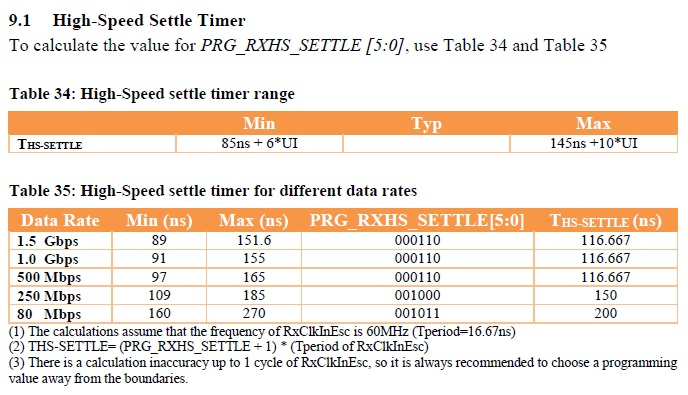

I tried different HS_settle values, which I got from this thread: Explenation for HS-SETTLE parameter in MIPI CSI D-PHY registers.

If you scroll down, there is a table posted by an NXP colleague who says it should be used for the IMX8M hs_settle calculation. What confuses me is that it does not match drivers/media/platform/imx8/mxc-mipi-csi2_yav.c, which is the file used for imx8m, right? In the file, the HS_settle value increases with higher data rate, but in the table it does not.
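For reference, the MIPI D-PHY specification bounds the receiver's T_HS-SETTLE between 85 ns + 6 UI and 145 ns + 10 UI, where UI is one bit period on the lane. The following is a minimal C sketch of that window only. The struct and function names are hypothetical, and it deliberately does not convert the time into an HS_settle register value, since how the i.MX8M counts that field is exactly what is unclear in this thread.

```c
#include <assert.h>

/* Hypothetical helper type: allowed T_HS-SETTLE window in nanoseconds. */
struct hs_settle_window_ns {
    double min_ns;
    double max_ns;
};

/* One unit interval (bit period) in ns for a given lane rate in Mbps. */
static double ui_ns(double lane_mbps)
{
    assert(lane_mbps > 0.0);
    return 1000.0 / lane_mbps;
}

/* D-PHY receiver limits: 85 ns + 6*UI (min) to 145 ns + 10*UI (max). */
static struct hs_settle_window_ns hs_settle_window(double lane_mbps)
{
    double ui = ui_ns(lane_mbps);
    struct hs_settle_window_ns w = { 85.0 + 6.0 * ui, 145.0 + 10.0 * ui };
    return w;
}
```

Calling hs_settle_window() with the per-lane bit rate the sensor actually produces gives the time range the configured settle time has to fall into. Note that the UI term shrinks as the data rate goes up, so the window moves slightly earlier at higher rates.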

So my questions are: what do I have to configure, apart from HS_settle, to get a high data rate on the IMX8M CSI-MIPI? Which HS_settle information can I rely on? Where does the table come from? Which reference manual can I rely on, since the IMX8M seems to be a mixture of iMX7D and iMX6sll? (see this thread: iMX8M MIPI-CSI 4-lane configuration)

Do I have to change anything on these clock configurations? What are they for?

    clocks = <&clk IMX8MQ_CLK_DUMMY>,
             <&clk IMX8MQ_CLK_CSI1_CORE>,
             <&clk IMX8MQ_CLK_CSI1_ESC>,
             <&clk IMX8MQ_CLK_CSI1_PHY_REF>;
    clock-names = "clk_apb", "clk_core", "clk_esc", "clk_pxl";
    assigned-clocks = <&clk IMX8MQ_CLK_CSI1_CORE>,
                      <&clk IMX8MQ_CLK_CSI1_PHY_REF>,
                      <&clk IMX8MQ_CLK_CSI1_ESC>;
    assigned-clock-rates = <133000000>, <100000000>, <66000000>;

Also, the MIPI PHY clock is DDR mode, right? Does that mean that when the PLLs on the sensor are set to 720MHz, I can stream at 1440Mbps/lane? But then 1920x1080x60fpsx10bit = 1,244,160,000 bps (about 1244 Mbps) should be doable with one lane? Or do I have to calculate with 16 bits per pixel for that?
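As a rough sanity check on the arithmetic above, here is a minimal C sketch. It assumes a DDR lane clock carries two bits per clock cycle per lane, and it counts only active pixels, ignoring blanking and CSI-2 packet overhead. The function names are hypothetical and not taken from any driver.

```c
#include <assert.h>
#include <stdint.h>

/* Active-pixel payload in bit/s; blanking and packet overhead are ignored. */
static uint64_t payload_bps(uint32_t width, uint32_t height,
                            uint32_t fps, uint32_t bits_per_pixel)
{
    return (uint64_t)width * height * fps * bits_per_pixel;
}

/* Per-lane bit rate for a DDR lane clock: two bits per clock cycle. */
static uint64_t ddr_lane_bps(uint64_t clock_hz)
{
    return 2 * clock_hz;
}

/* Smallest lane count whose combined bit rate covers the payload. */
static uint32_t lanes_needed(uint64_t payload, uint64_t lane_bps)
{
    assert(lane_bps > 0);
    return (uint32_t)((payload + lane_bps - 1) / lane_bps);
}
```

With the numbers from the post, payload_bps(1920, 1080, 60, 10) is 1,244,160,000 bit/s and ddr_lane_bps(720000000) is 1,440,000,000 bit/s. Whether the sensor's 720MHz figure is the DDR clock or already the per-lane bit rate is the open question here. A real link also has to carry blanking and packet overhead, so the required rate is typically higher than the active payload alone.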

Also, there is the "fsl,two-8bit-sensor-mode;" option, which is described as something to turn on when using two 8-bit cameras or a 16-bit camera. Does this also apply to a 10-bit camera?

Best regards,

Johannes

HS_settle on the imx8M is different from the imx7D; you can refer to the picture below:

Yeah, I know that table; as you can see in my question, I already link to it. But even with these values it does not work. Can you answer my questions? Or do you have any other ideas about what could be wrong or how to debug it?

Did you add any source code in mx6s_capture.c to support RAW10 data? Try adding something like:

    + .name = "RAWRGB12 (SGRBG10)",
    + .fourcc = V4L2_PIX_FMT_SGRBG10,
    + .pixelformat = V4L2_PIX_FMT_SGRBG10,
    + .mbus_code = MEDIA_BUS_FMT_SRGGB10_1X10,
    + .bpp = 1,

      switch (csi_dev->fmt->pixelformat) {
      case V4L2_PIX_FMT_YUV32:
      case V4L2_PIX_FMT_SBGGR8:
    + case V4L2_PIX_FMT_SGRBG10:
          width = pix->width;
          break;

Then add

    + case V4L2_PIX_FMT_SRGGB10:
    +     cr18 |= BIT_MIPI_DATA_FORMAT_RAW10;
    +     break;

under static int mx6s_configure_csi(struct mx6s_csi_dev *csi_dev), then set TWO_8BIT_SENSOR in CSI_CSICR3.