
IMX6QP + Sony IMX290 CMOS via MIPI


We have a custom IMX6QP board communicating with our own CMOS sensor board (Sony IMX290 based) over a MIPI interface. We developed a camera driver and modified some of the NXP BSP files. The solution mostly works, but we see strange behavior, apparently related to vertical sync, that we have not been able to solve.

The camera streams data correctly (all MIPI_CSI registers show the expected values), but the start of frame does not arrive at the correct position.

For debugging purposes, the camera sends a test pattern that sets all pixels of one specific row to 0x3FF (all ones in 10 bits). We never find this pattern at the expected row. It appears in other rows and moves with each frame, in a somewhat "repetitive" way.
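One way to quantify the displacement described above is to search a captured buffer for the marker row and report where it actually landed. The following is a minimal sketch, not the poster's tool. It assumes the buffer holds packed RAW10 bytes exactly as received, so a row of 0x3FF pixels appears as a run of 0xFF bytes one line long. The function name `find_marker_runs` and the `stride` parameter (bytes per line) are hypothetical.

```python
from typing import List, Tuple


def find_marker_runs(buf: bytes, stride: int) -> List[Tuple[int, int]]:
    """Return (row, byte_column) where each run of at least `stride`
    consecutive 0xFF bytes starts.

    Assumption: an all-0x3FF row in packed RAW10 is `stride` bytes of 0xFF.
    A non-zero byte_column means the row is also shifted horizontally.
    """
    marker = b"\xff" * stride
    hits = []
    pos = buf.find(marker)
    while pos != -1:
        hits.append(divmod(pos, stride))
        # Skip to the end of this run so one long run is reported only once.
        end = pos + stride
        while end < len(buf) and buf[end] == 0xFF:
            end += 1
        pos = buf.find(marker, end)
    return hits


if __name__ == "__main__":
    # Tiny synthetic frame: 6 rows of 20 bytes, marker written to row 3.
    frame = bytearray(6 * 20)
    frame[3 * 20:4 * 20] = b"\xff" * 20
    print(find_marker_runs(bytes(frame), 20))
```

Logging the reported row for each dequeued frame would show whether the shift per frame is constant, which helps separate a size mismatch from random frame loss.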

Some particulars:

- The camera works in 10-bit mode, so every 4 pixels are encoded in 5 bytes, according to the MIPI specification.

- The camera runs at 1920x1080.

- The camera uses 4 MIPI lanes at 222.75 Mbps per lane.

- Horizontal sync seems to work correctly.

- The frame rate is 1 fps, set in the sensor through two registers: FRSEL, which sets the 1H time to 29.6 us, and VMAX, which sets the number of lines. The same issue occurs at higher frame rates.
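The numbers in the list above can be cross-checked with a little arithmetic. The sketch below assumes the standard MIPI CSI-2 RAW10 packing (four bytes holding the 8 most significant bits of four pixels, followed by one byte holding their 2 least significant bits each) and ignores packet headers and blanking. The function names are hypothetical, and the byte order seen in memory on the i.MX6 may differ from the wire order.

```python
from typing import List


def raw10_line_bytes(width_px: int) -> int:
    """Bytes per line for packed RAW10: 5 bytes per group of 4 pixels."""
    if width_px % 4:
        raise ValueError("RAW10 packing needs a width that is a multiple of 4")
    return width_px * 5 // 4


def unpack_raw10(packed: bytes) -> List[int]:
    """Unpack CSI-2 style RAW10 groups into 10-bit pixel values."""
    if len(packed) % 5:
        raise ValueError("packed length must be a multiple of 5 bytes")
    pixels = []
    for i in range(0, len(packed), 5):
        lsb = packed[i + 4]  # 2 LSBs of each of the 4 pixels
        for k in range(4):
            pixels.append((packed[i + k] << 2) | ((lsb >> (2 * k)) & 0x3))
    return pixels


def line_payload_time_s(width_px: int, lanes: int, lane_bps: float) -> float:
    """Time to move one line of pixel payload, protocol overhead ignored."""
    return raw10_line_bytes(width_px) * 8 / (lanes * lane_bps)


if __name__ == "__main__":
    print(raw10_line_bytes(1920))                     # bytes per 1920-pixel line
    print(line_payload_time_s(1920, 4, 222.75e6))     # compare with the 1H time
```

With the values from the post, one line is 2400 bytes and its payload needs roughly 21.5 us on the link, which fits inside the 29.6 us 1H period.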

Patches are applied against the latest NXP kernel:

https://source.codeaurora.org/external/imx/linux-imx/?h=imx_4.14.98_2.0.0_ga

Branch: 4.14.98_2.0.0

Commit: 1175b59611537b0b451e0d1071b1666873a8ec32

Our capture test software is based on the standard example at https://linuxtv.org/downloads/v4l-dvb-apis/uapi/v4l/capture.c.html, adapted to our image formats.

Some “suspects”:

- There seems to be a relation between the vsync issue and the sensor register WINWV_OB (which sets the vertical OB region of the image). Modifying this register directly affects the row displacement.

- Some frames are “lost”. In the example sent, we capture 7 frames but receive 11 end-of-frame interrupts. Some of them apparently never arrive at our DQUEUE.
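A cheap way to confirm lost frames from user space is to look at the `sequence` field that V4L2 returns in `struct v4l2_buffer` on each dequeue and check for gaps. The sketch below only shows the gap arithmetic. It assumes the driver increments `sequence` once per frame it sees (not verified for this BSP), it ignores counter wraparound, and the sample numbers are made up to mirror the 7-versus-11 case described above.

```python
from typing import List, Tuple


def sequence_gaps(sequences: List[int]) -> List[Tuple[int, int, int]]:
    """Return (previous, current, missing_count) for every gap in the
    sequence numbers of consecutively dequeued buffers."""
    gaps = []
    for prev, cur in zip(sequences, sequences[1:]):
        missing = cur - prev - 1
        if missing > 0:
            gaps.append((prev, cur, missing))
    return gaps


if __name__ == "__main__":
    # Hypothetical log: 7 buffers dequeued while the counter spans 11 frames.
    seen = [0, 1, 3, 4, 7, 8, 10]
    gaps = sequence_gaps(seen)
    print(gaps)
    print("dequeued:", len(seen), "missing:", sum(g[2] for g in gaps))
```

If the gaps line up with the frames where the marker row jumps, the displacement and the frame loss likely share one cause.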

A video showing the effect is attached.

Any ideas about what could be happening?

Thanks for the feedback!

Accepted solution:


Hi Joan,

Below is the reply from the i.MX expert team.

-------------------

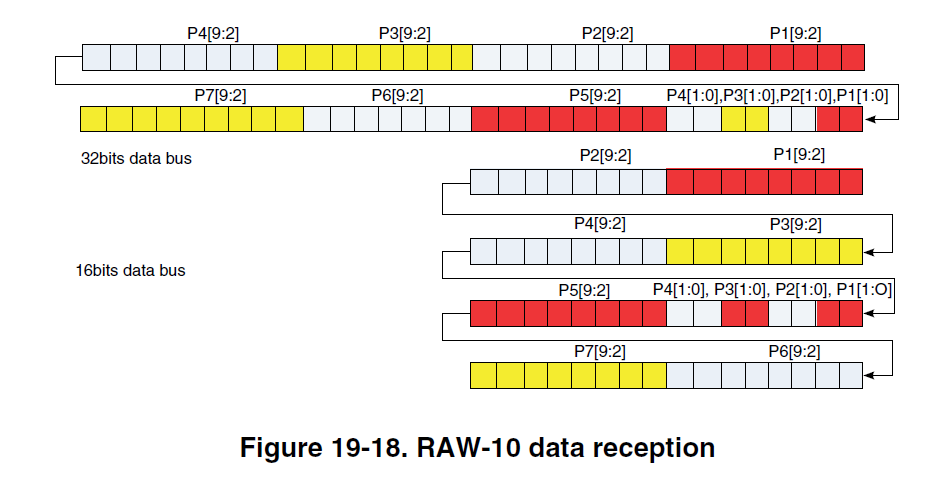

A 1920*1080 RAW10 input totals 2400*1080 bytes.

To receive it in 16 bpp generic mode, set width = 1200 and height = 1080.

--------------------

Have a nice day!

BR,

Weidong
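The arithmetic behind this reply can be written out as a small helper. This is a sketch of the calculation only, not of any NXP API. The function name and the dictionary keys are hypothetical, and the stride value assumes no padding between lines.

```python
from typing import Dict


def generic16_geometry(width_px: int, height: int, bits_per_pixel: int = 10) -> Dict[str, int]:
    """Describe a packed RAW frame as a frame of 16-bit generic words."""
    line_bits = width_px * bits_per_pixel
    if line_bits % 16:
        raise ValueError("line does not divide into whole 16-bit words")
    stride_bytes = line_bits // 8          # packed bytes per line
    return {
        "width": stride_bytes // 2,        # 16-bit words per line
        "height": height,
        "stride_bytes": stride_bytes,      # assumes no line padding
        "frame_bytes": stride_bytes * height,
    }


if __name__ == "__main__":
    print(generic16_geometry(1920, 1080))
```

For 1920x1080 RAW10 this gives 2400 bytes per line, so a width of 1200 words and a height of 1080, matching the values quoted in the reply.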


Hi Joan,

See below, please!

------------------------------------------------

The MIPI CSI2 RAW10 data is mapped as follows, so the iMX6 CSI should use 16-bit generic mode to capture it. The customer should calculate the frame size for the IDMAC. If the IDMAC size is not the same as the real frame data size, a scroll issue like the one in the video will happen.

By the way, the iMX6 has no hardware ISP to process the RAW video.

Hope the above information is helpful for you!

Have a nice day!

BR,

weidong
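To see why a size mismatch would produce a scrolling image, consider a deliberately simplified model: the sensor sends a fixed number of lines per frame back to back, and the receiver cuts that stream into buffers of a configured number of lines without resynchronising on frame start. This is a toy model under those assumptions, not a description of how the IPU or IDMAC actually behaves, and the line counts in the example are invented.

```python
from typing import List, Tuple


def marker_positions(real_lines: int, cfg_lines: int,
                     marker_line: int, frames: int) -> List[Tuple[int, int]]:
    """For each sensor frame, return (buffer_index, row_in_buffer) where the
    marker line lands when the stream is cut every `cfg_lines` lines."""
    positions = []
    for n in range(frames):
        absolute_line = n * real_lines + marker_line
        positions.append(divmod(absolute_line, cfg_lines))
    return positions


if __name__ == "__main__":
    # Hypothetical: sensor sends 1090 lines per frame, receiver expects 1080.
    for buf, row in marker_positions(1090, 1080, 100, 5):
        print("buffer", buf, "marker at row", row)
```

In this model the marker row moves by the difference between the two line counts on every frame, and it stays put only when both sizes agree.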


Hi,

Thanks for your reply. To check that I understand your comments, here is my scenario, so we can cross-check the register values. Assuming the camera sends a 1920x1080 image at 10 bpp (that is, 1920x1080x(5/4) bytes), what would be the correct configuration of the following registers?

- IPU0_CSI0_SENS_CONF: especially CSI0_DATA_WIDTH & CSI0_SENS_PRTCL

- IPU0_CSI0_SENS_FRM_SIZE

- IPU0_CSI0_ACT_FRM_SIZE

And what about CPMEM?

- BPP

- FW (Frame width)

- FH (Frame height)

- SL (Stride Line)

- PFS

Thanks for your help!




Thanks Wigros,

Your suggestions solved the issue!


Dear joan vicient, did you manage to get the camera running?