Random delays in IRQ triggering with programmable glitch filter

I'm seeing substantial random delays in IRQ triggering when I use the programmable glitch filter on an input used to trigger the interrupt. I'm seeing this on LPC1517, but I suppose it is the same with all parts that have the programmable glitch filter. As a test, the first thing my ISR does is turn off an output pin, and the last thing it does is turn the pin back on. This interrupt is set at the highest priority in the system running at 72MHz.

Without the programmable filter I see a very consistent result. From the moment my input requests the IRQ there is a delay of either ~750ns or ~990ns until my test output falls. That's a difference of about 17 clock cycles (240ns at 72MHz) if I did my math right. I can deal with that. Disabling the default 10ns glitch filter produces pretty much the same results.
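For reference, the arithmetic behind that estimate can be sketched as below. This is only an illustration of the conversion from a measured time to core clock cycles; the helper name `ns_to_cycles` is made up for this example and is not part of any NXP library.

```c
#include <stdint.h>

/* Hypothetical helper: convert a time interval in nanoseconds to
 * core clock cycles, rounded to the nearest whole cycle. */
uint32_t ns_to_cycles(uint32_t ns, uint32_t core_hz)
{
    /* 64-bit intermediate avoids overflow of ns * core_hz. */
    return (uint32_t)(((uint64_t)ns * core_hz + 500000000ULL) / 1000000000ULL);
}
```

With the numbers from the post, `ns_to_cycles(990 - 750, 72000000)` evaluates to 17, since 240ns at 72MHz is 17.28 cycles.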

Next I tested with a custom 1us glitch filter (72MHz/72) on the input pin. As I expected, the quickest response time is 1us longer than without the filter. However, sometimes it takes up to an additional 1.2us to trigger my test output. Why the huge variance? Further testing shows that the random variance in the time taken to call the ISR increases as the glitch filter time increases.
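To make the "72MHz/72" figure concrete, here is a minimal sketch of how the filter clock period follows from the divider. It assumes only that the filter clock is the main clock divided by an integer; the function name `filter_clock_period_ns` is hypothetical, and the sketch does not model how many filter clocks the hardware needs to accept an edge or how the divider is programmed.

```c
#include <stdint.h>

/* Hypothetical helper: period of the glitch filter clock in nanoseconds,
 * assuming filter clock = main clock / divider.
 * Returns 0 for invalid input (zero clock or zero divider). */
uint32_t filter_clock_period_ns(uint32_t main_clk_hz, uint32_t divider)
{
    if (main_clk_hz == 0u || divider == 0u) {
        return 0u;
    }
    return (uint32_t)((1000000000ULL * divider) / main_clk_hz);
}
```

For the setup described above, `filter_clock_period_ns(72000000, 72)` gives 1000, i.e. a 1us filter clock, so the observed extra delay of up to 1.2us is on the order of one filter clock period.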

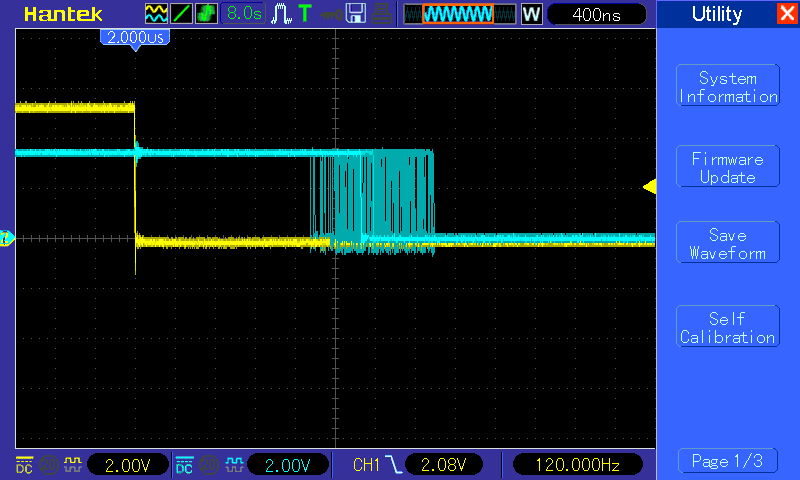

Default 10ns glitch filter. Yellow is the IRQ input, green is the pin turned off by the ISR. (The IRQ occurs at about 120Hz and there are 8 seconds of image persistence on the scope.)

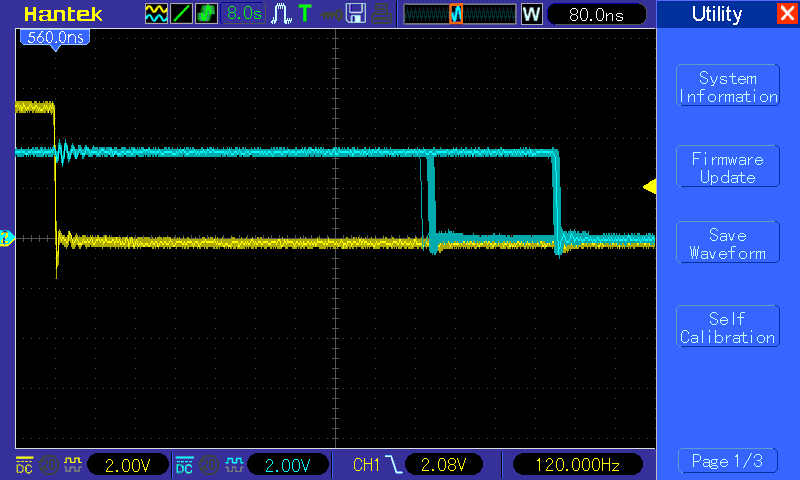

Here we see the random variance in the time to trigger the ISR with a 1us custom glitch filter enabled. Again, the ISR is triggered at about 120Hz with 8 seconds of persistence on the scope.

Can anyone explain why the custom glitch filter is adding so much uncertainty to the timing of my input?

Hi Joseph,

I have reproduced the behavior you describe. I will continue investigating and will get back to you as soon as possible.

Best Regards!

Carlos Mendoza

Technical Support Engineer