
Using 2 ISO endpoint transfers in RT1060


Hi All,

We are trying to interface a camera and an I2S microphone with the RT1060. The RT1060 enumerates as a USB device with both the UVC and UAC classes, and I use two separate ISO endpoints, one for UVC and one for UAC. When we stream UVC or UAC on its own, we get correct video or audio data without any issues. When we stream from the camera and the I2S mic at the same time, however, we get noise in the audio data, which means the audio data is not being sent properly over the ISO transfer. Both streams are sent using the API "USB_DeviceSendRequest". What could be the root cause of this issue?
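For context, in a composite device like this each ISO IN endpoint is typically re-armed from its own transfer-complete callback, so one stream should not depend on the other's timing. The minimal sketch below only models that bookkeeping on a PC. `send_request()` is a hypothetical stand-in for the SDK's `USB_DeviceSendRequest` (which takes the device handle, endpoint address, buffer and length), and the endpoint addresses, packet sizes and the `iso_stream_t` type are assumptions, not values from the poster's project.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical endpoint addresses; the real ones come from the descriptors. */
#define VIDEO_ISO_IN_EP 0x81u
#define AUDIO_ISO_IN_EP 0x82u

typedef struct {
    uint8_t endpoint;     /* ISO IN endpoint address */
    uint32_t packet_size; /* bytes queued per transfer (assumed) */
    uint32_t queued;      /* transfers handed to the controller so far */
} iso_stream_t;

static iso_stream_t video_stream = {VIDEO_ISO_IN_EP, 1024u, 0u};
static iso_stream_t audio_stream = {AUDIO_ISO_IN_EP, 96u, 0u};

/* Hypothetical stand-in for USB_DeviceSendRequest: it only counts the
 * request against the stream that owns the endpoint. */
static int send_request(uint8_t endpoint, const uint8_t *buffer, uint32_t length)
{
    (void)buffer;
    (void)length;
    if (endpoint == VIDEO_ISO_IN_EP) { video_stream.queued++; return 0; }
    if (endpoint == AUDIO_ISO_IN_EP) { audio_stream.queued++; return 0; }
    return -1; /* unknown endpoint */
}

/* Transfer-complete handler: immediately queue the next packet on the SAME
 * endpoint, without looking at (or waiting for) the other stream. */
static int iso_transfer_complete(iso_stream_t *stream, const uint8_t *next_packet)
{
    assert(stream != NULL);
    return send_request(stream->endpoint, next_packet, stream->packet_size);
}
```

The point of the sketch is that the audio path never waits on the video path. If something in a shared context (for example the USB interrupt handler) blocks, however, both callbacks are delayed together.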


It seems I can't add more pictures to the post above, so here is another picture, of the FreeRTOS version:


Hi sathishkumar_r,

You are using the RT1060 to enumerate as a UVC and UAC USB device. Do you use the RT1060 SDK USB examples, or did you write the code yourself?

If you are referring to the RT1060 SDK USB code, please also tell me exactly which code you are using.

Just tell me how to reproduce the issue on the MIMXRT1060-EVK board with the SDK code.

Waiting for your reply!

Best Regards,

Kerry


Hi Kerry,

Thanks for your reply.

Actually, I found the root cause of this issue. The problem comes from the way we get the video data from the sensor. To get a full frame buffer from the image sensor, we implemented a blocking while loop, and because of this wait the ISO audio data is not sent properly.

```c
/* Busy-wait until the camera receiver returns a completely filled frame buffer. */
while (kStatus_Success != CAMERA_RECEIVER_GetFullBuffer(&cameraReceiver, &FrameBufferPointer1)) { }
```

I used the SDK UVC and UAC examples. Please suggest an alternative way to avoid this wait for the frame buffer.
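To get a feel for how much audio such a wait can cost, the sketch below does the arithmetic under stated assumptions: one audio ISO packet is due every `audio_interval_us` (1000 us for a full-speed 1 ms interval, 125 us for a high-speed microframe, depending on bInterval), and the loop spins for up to one camera frame period. If the wait runs inside the USB video callback, as question 1 later in the thread suggests, it would also hold off the audio endpoint's callback for that time. The frame rates used below are assumptions; the thread does not state the actual rate.

```c
#include <assert.h>
#include <stdint.h>

/* Worst-case number of audio ISO packets that are not re-queued while the
 * code blocks for one full camera frame period.
 *   fps:               assumed camera frame rate (frames per second)
 *   audio_interval_us: assumed time between audio ISO packets */
static uint32_t missed_audio_packets(uint32_t fps, uint32_t audio_interval_us)
{
    assert(fps > 0u && audio_interval_us > 0u);
    uint32_t frame_period_us = 1000000u / fps;   /* 30 fps -> 33333 us */
    return frame_period_us / audio_interval_us;  /* whole packets only */
}
```

At an assumed 30 fps and a 1 ms audio interval this gives up to 33 consecutive audio packets per video frame, which would plausibly be heard as dropouts or noise.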

I have attached the source code for your reference. The source code uses static audio data and the MT9M114 image sensor provided with the dev kit. To recreate the issue, you need to stream both video and audio at the same time.

Thanks in advance!

Regards,

Sathish


Hi sathishkumar_r,

Thanks for your update, and for letting me know that the newest SDK gives the same result.

You mentioned:

Currently, we are using the source code shared by the NXP team, which streams the MT9M114 at 720p over UVC.

Do you mean that you also got the MT9M114 version of the usb_device_video_virtual_camera project from NXP? Could you please tell me who gave you this project? That will let me get the detailed information internally.

Now, to answer your questions:

1) For transferring the image data, we could use the frame-done DMA callback instead of the USB callback, so that we don't need to wait until the buffer is filled. Once we get a filled buffer, we can start sending the image data over USB. Why is the USB callback used instead of the frame-done callback? Is there any issue behind this, such as an effect on the data rate or anything else?

Answer: Have you tried the DMA callback directly? Does it work or not?

I need more information from your side. If your project with the camera sensor came from NXP, I need to contact the engineer who wrote the code to learn the details. If you just found it in the SDK, please also let me know, and I will check with an internal expert.
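One way to read question 1 is as a producer/consumer hand-off: the camera's frame-done callback (producer) records which buffer is complete, and the USB video callback (consumer) only checks a flag instead of waiting. The sketch below shows that with a single-slot mailbox. `on_camera_frame_done`, `usb_video_get_frame` and `frame_mailbox_t` are hypothetical names, not SDK functions, and the sketch assumes the two callbacks cannot preempt each other (for example, same interrupt priority); otherwise interrupts would need to be masked around the updates.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Single-slot mailbox between the camera frame-done callback (producer)
 * and the USB video callback (consumer). Hypothetical names throughout. */
typedef struct {
    volatile uint32_t buffer; /* address of the latest complete frame */
    volatile bool ready;      /* true while 'buffer' holds an unclaimed frame */
} frame_mailbox_t;

static frame_mailbox_t g_mailbox = {0u, false};

/* Producer side: would be called from the camera frame-done callback.
 * An unclaimed older frame is overwritten instead of blocking the camera;
 * it is returned so the caller could recycle it (0 if nothing was dropped). */
static uint32_t on_camera_frame_done(frame_mailbox_t *mb, uint32_t full_buffer)
{
    uint32_t dropped = mb->ready ? mb->buffer : 0u;
    mb->buffer = full_buffer;
    mb->ready = true;
    return dropped;
}

/* Consumer side: would be called from the USB video callback when it needs
 * a new frame. Never waits: returns false at once if no frame is ready. */
static bool usb_video_get_frame(frame_mailbox_t *mb, uint32_t *out_buffer)
{
    assert(out_buffer != NULL);
    if (!mb->ready) {
        return false;
    }
    *out_buffer = mb->buffer;
    mb->ready = false;
    return true;
}
```

Dropping the older frame rather than queuing it keeps latency bounded; a deeper queue would be the alternative if every frame must be delivered.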

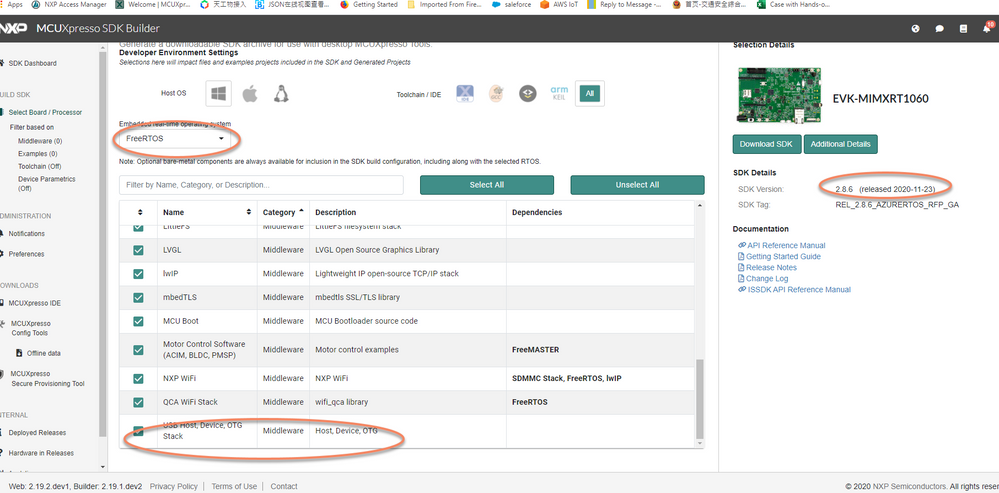

2) If we go for an RTOS, which RTOS should we use for USB: Amazon FreeRTOS or Azure RTOS? In the latest SDK we don't find any USB examples for Amazon FreeRTOS. Is there any RTOS source code that streams the MT9M114 sensor over UVC?

Answer: In fact, you can use FreeRTOS.

Just download the newest SDK version with FreeRTOS selected, and you will find the FreeRTOS version of usb_device_video_virtual_camera:

SDK_2.8.6_EVK-MIMXRT1060_freertos\boards\evkmimxrt1060\usb_examples\usb_device_video_virtual_camera\freertos

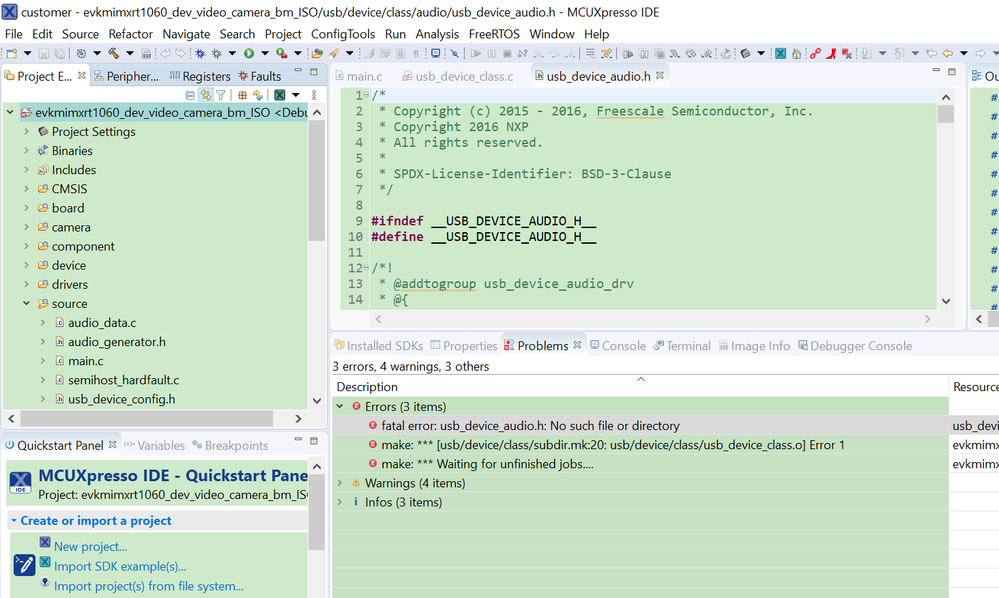

I can now download the code to my board. Your project had one build error, which seems to be caused by a missing header file path.

After I added the path, it builds without any issues and I can download the code.

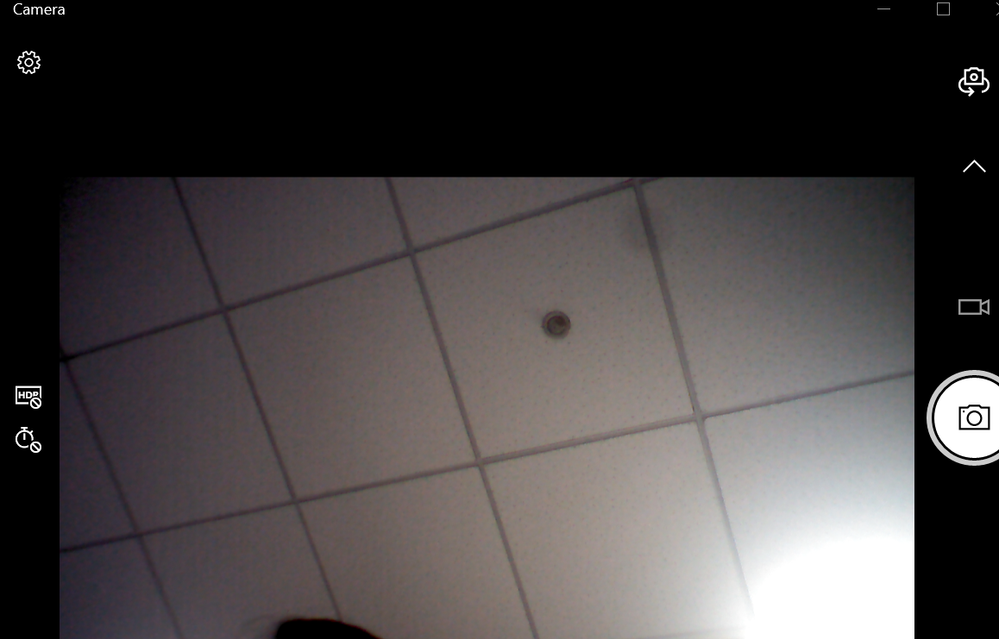

You mentioned that to reproduce the problem, both video and audio need to be streamed at the same time. Is any PC configuration needed? I can't find the audio USB interface right now; could you please tell me how to select the audio and play it together with the video?

I can now get the video from the onboard camera on the PC, but I don't know how to select the audio so that it plays at the same time.

Please give me more details, then I can test it and check with our internal team.

Best Regards,

Kerry


Hi Kerry,

Thanks for your answers.

Below are my answers to your questions.

1) Do you mean that you also got the MT9M114 version of the usb_device_video_virtual_camera project from NXP? Could you please tell me who gave you this project? That will let me get the detailed information internally.

Answer: Mr. Victor Jimenez shared the source code through a support ticket. That source code streams the MT9M114 720p sensor over UVC and works in the IAR IDE.

2) I need more information from your side. If your project with the camera sensor came from NXP, I need to contact the engineer who wrote the code to learn the details. If you just found it in the SDK, please also let me know, and I will check with an internal expert.

Answer: Currently we are using the camera sensor from NXP. To discuss the project further, can we create a ticket so that we can continue in private?

I'm glad that you got streaming working with my source code.

You won't be able to test the mic on your side.

Regards,

Sathish Kumar


Hi sathishkumar_r,

Thanks so much for the update.

Victor Jimenez is my colleague; he is on vacation now and will not be back until the 4th of July.

Yes, I also think you can create a new case, then we can discuss the details in private.

After you create the case, please let me know the case number; I will then do more checking and reply to you directly in the case email. You can also share the number of the case in which Victor Jimenez shared this code with you. Thanks so much.

As for the audio, I will keep testing it.

Best Regards,

Kerry


Hi Kerry,

Thanks for your support.

I have created the new case. The case number is 00325703.

The case in which the source code was shared is 00241428.

Regards,

Sathish Kumar


Hi sathishkumar_r,

Thanks for your cooperation!

I can see your case now and have taken it; please give me some more time to check.

I will post any updates in your case. Please be patient, thanks so much.

Best Regards,

Kerry


Hi Kerry,

Sorry, you can test the mic on your side as well, since we are sending static audio data.

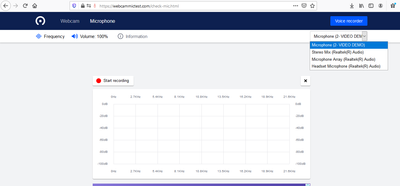

To test the audio, please go to the website below:

https://webcammictest.com/check-mic.html

Click the Check microphone option.

Then select the audio device "VIDEO DEMO". If you play the audio alone, without streaming the camera, you will hear the audio sound. If you stream both the camera and the audio, the sound will be different, because audio data is not sent while the camera is streaming.


Hi sathishkumar_r,

Thanks for the update.

So your issue is caused by the CAMERA_RECEIVER wait loop blocking the ISO audio data.

I think you should not use a while loop to wait for the camera receiver. Instead, use an if statement to check whether reception has finished; if it has not, you can run other code rather than waiting in the while loop. You can also use a timer to check it periodically until it returns success.
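The suggestion of replacing the blocking `while` with an `if` can be sketched as a main-loop step that polls the camera once and then moves on, so audio work runs on every pass. This is a PC simulation, not SDK code: `camera_poll()` is a hypothetical stub standing in for `CAMERA_RECEIVER_GetFullBuffer` returning `kStatus_Success`, and the "one frame every N polls" behaviour is an assumption made only to drive the test.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical camera stub: a full frame becomes available every
 * 'frame_every' polls, mimicking a receiver that only reports success
 * once the DMA has filled a buffer. */
typedef struct {
    uint32_t polls;
    uint32_t frame_every;
} camera_stub_t;

static bool camera_poll(camera_stub_t *cam, uint32_t *buffer)
{
    cam->polls++;
    if (cam->polls % cam->frame_every != 0u) {
        return false;      /* frame still being captured */
    }
    *buffer = cam->polls;  /* fake buffer "address" */
    return true;
}

typedef struct {
    uint32_t audio_serviced; /* passes on which audio work ran */
    uint32_t frames_sent;    /* complete frames handed to the video path */
} loop_stats_t;

/* One pass of a non-blocking main loop: check the camera with 'if' instead
 * of spinning in 'while', then always fall through to the audio work. */
static void main_loop_step(camera_stub_t *cam, loop_stats_t *stats)
{
    uint32_t buffer;
    assert(cam->frame_every > 0u);
    if (camera_poll(cam, &buffer)) {
        stats->frames_sent++; /* only a complete buffer is ever handed on */
    }
    stats->audio_serviced++;  /* audio runs whether or not a frame arrived */
}
```

Used over 99 passes with a frame every 33 polls, audio is serviced 99 times while 3 complete frames are handed on; the camera no longer dictates when audio runs.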

Are you using this code, from the newest SDK version?

\SDK_2.8.6_EVK-MIMXRT1060\boards\evkmimxrt1060\usb_examples\usb_device_video_virtual_camera\bm

Best Regards,

Kerry


Hi Kerry,

I tried using the if statement, but it didn't help: I get a corrupted image. This may be because the USB transactions are faster than the camera, so at that point I don't yet have the filled buffer data.
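A corrupted image after switching to `if` would be consistent with the video path sending from a buffer that is not yet complete, or that the camera is refilling while it is still being transmitted. The sketch below shows one way to avoid both, under assumptions: the sender only starts a frame from a buffer already reported as complete, reports "nothing to send" (0) while idle instead of reading a partial buffer, and signals when the last chunk has gone out, which is when the buffer could be returned to the camera (in the SDK, `CAMERA_RECEIVER_SubmitEmptyBuffer`). `video_sender_t`, `video_start_frame` and `video_next_chunk` are hypothetical names, and the UVC payload header that precedes each chunk is left out for brevity.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical sender state: the complete frame being streamed and how far
 * into it we are. 'frame' is NULL while idle. */
typedef struct {
    const uint8_t *frame;
    uint32_t frame_len;
    uint32_t offset;
} video_sender_t;

/* Start streaming a complete frame, but only if the previous one finished. */
static bool video_start_frame(video_sender_t *s, const uint8_t *frame, uint32_t len)
{
    if (s->frame != NULL || frame == NULL || len == 0u) {
        return false;
    }
    s->frame = frame;
    s->frame_len = len;
    s->offset = 0u;
    return true;
}

/* Produce the next ISO chunk of at most max_len bytes. Returns its length;
 * 0 means "no complete frame, send an empty payload this interval".
 * *frame_done is set on the last chunk, when the buffer may be recycled. */
static uint32_t video_next_chunk(video_sender_t *s, uint32_t max_len,
                                 const uint8_t **chunk, bool *frame_done)
{
    assert(chunk != NULL && frame_done != NULL && max_len > 0u);
    *frame_done = false;
    *chunk = NULL;
    if (s->frame == NULL) {
        return 0u;
    }
    uint32_t remaining = s->frame_len - s->offset;
    uint32_t len = remaining < max_len ? remaining : max_len;
    *chunk = s->frame + s->offset;
    s->offset += len;
    if (s->offset == s->frame_len) {
        *frame_done = true;
        s->frame = NULL; /* idle until the next complete frame arrives */
        s->offset = 0u;
    }
    return len;
}
```

For a 2500-byte frame and 1024-byte packets this yields chunks of 1024, 1024 and 452 bytes, then 0 until `video_start_frame` is called with the next complete buffer.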

Do you think a timer or other code will solve this issue?

Currently I am using SDK version 2.7.0. I will also check with the new SDK 2.8.6.

Regards,

Sathish


Hi sathishkumar_r,

Thanks for your update, and for letting me know that the if statement still doesn't help.

When you port it to SDK 2.8.6, please share your new project with me. I will find time to test it; after I reproduce the issue, I will check with our internal expert and let you know any useful feedback.

Best Regards,

Kerry


Hi Kerry,

I have cross-checked the SDK 2.8.6 source code against my SDK 2.7.0 source code. I don't think there are any major changes on the USB side, so I expect the latest SDK to show the same issue.

Currently we are using the source code shared by the NXP team, which streams the MT9M114 at 720p over UVC.

We have some questions about the source code:

1) For transferring the image data, we could use the frame-done DMA callback instead of the USB callback, so that we don't need to wait until the buffer is filled. Once we get a filled buffer, we can start sending the image data over USB. Why is the USB callback used instead of the frame-done callback? Is there any issue behind this, such as an effect on the data rate or anything else?

2) If we go for an RTOS, which RTOS should we use for USB: Amazon FreeRTOS or Azure RTOS? In the latest SDK we don't find any USB examples for Amazon FreeRTOS. Is there any RTOS source code that streams the MT9M114 sensor over UVC?

Please let me know your suggestions on the questions above!

Regards,

Sathish