
AR1335 camera sensor driver


Has anyone used the AR1335 camera sensor with any NXP MCU? If so, please share the driver source code.

Regards,

Farid

---

AR1335 Linux device driver for the Jetson Nano:

---

Hi fmabrouk,

So far I have not found an i.MX RT driver for the AR1335. I also searched internally and did not find one.

You could search online; some third parties may provide AR1335 drivers.

There is no official NXP driver for it yet. So far, the RT demos mainly use the MT9M114 or OV7725 camera modules.

Sorry for the inconvenience, and thanks a lot for your understanding.

Best Regards,

Kerry

---

I am still trying to port the AR1335 camera driver to the i.MX RT1170 MIPI CSI-2 demo code.

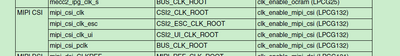

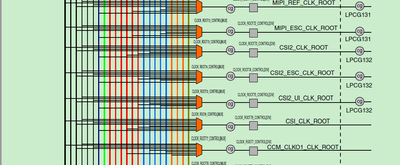

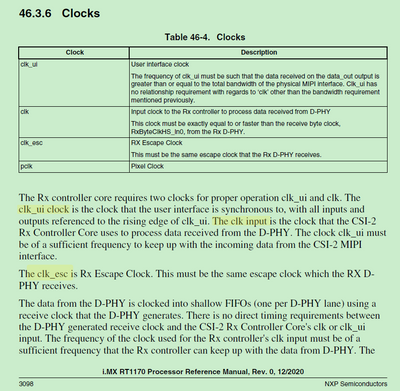

In the MIPI CSI-2 demo code I found these clock settings:

```c
CLOCK_SetRootClock(kCLOCK_Root_Csi2, &csi2ClockConfig);
CLOCK_SetRootClock(kCLOCK_Root_Csi2_Esc, &csi2EscClockConfig);
CLOCK_SetRootClock(kCLOCK_Root_Csi2_Ui, &csi2UiClockConfig);
```

Can anyone explain what these clocks are for?

Thank you!
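Going by the root names, these appear to be the CSI-2 receiver's core clock, its escape (ESC) clock, and its UI (pixel-side interface) clock. A minimal sketch of the arithmetic usually involved when sizing them, assuming these constraints (to be verified against the RT1170 reference manual): the core clock must be at least the D-PHY byte clock (lane rate / 8), the escape clock should lie in roughly the 60 to 80 MHz range, and the UI clock must be at least the sensor pixel clock. The helper names are hypothetical and are not SDK APIs.

```c
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical helper: the D-PHY byte clock is the per-lane bit rate / 8. */
static uint32_t mipi_byte_clock_hz(uint32_t lane_rate_bps)
{
    return lane_rate_bps / 8u;
}

/* Hypothetical helper: a clock root runs at source frequency / divider. */
static uint32_t clock_root_hz(uint32_t source_hz, uint32_t div)
{
    return (div == 0u) ? 0u : source_hz / div;
}

/* Assumed constraints (verify against the RT1170 reference manual):
 *   CSI2 core clock >= byte clock
 *   CSI2_ESC clock  within 60..80 MHz
 *   CSI2_UI clock   >= sensor pixel clock                            */
static bool csi2_clocks_ok(uint32_t csi2_hz, uint32_t esc_hz, uint32_t ui_hz,
                           uint32_t lane_rate_bps, uint32_t pixel_clock_hz)
{
    bool core_ok = csi2_hz >= mipi_byte_clock_hz(lane_rate_bps);
    bool esc_ok  = (esc_hz >= 60000000u) && (esc_hz <= 80000000u);
    bool ui_ok   = ui_hz >= pixel_clock_hz;
    return core_ok && esc_ok && ui_ok;
}
```

For example, a 480 MHz source divided by 8 gives 60 MHz on each root, which satisfies all three assumed limits for a 480 Mbps lane rate and a pixel clock of up to 60 MHz.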

---

Hi fmabrouk,

The clocks you mentioned are described in the RT1170 reference manual.

I hope this helps!

Best Regards,

Kerry

---

I am using the AR1335 camera sensor instead of the OV5640 on the i.MX RT1170 development board. The AR1335 outputs only 10-bit image data, whereas I believe the OV5640 pixel data bus is 16 bits wide.

How can I modify the MIPI CSI demo code that comes with the NXP SDK so that I can capture an image with this sensor?

Is there any documentation that guides you through the process of adding another camera sensor to this demo code?

Thank you so much in advance!
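For context on the 10-bit format: over MIPI CSI-2, RAW10 data is packed as four pixels in five bytes. The first four bytes carry the upper 8 bits of each pixel, and the fifth byte carries the two low bits of all four (pixel 0 in bits 1:0, pixel 1 in bits 3:2, and so on). A minimal sketch of unpacking one such line into 16-bit pixels, assuming software receives the raw packed bytes; the function name is hypothetical, not an SDK API.

```c
#include <stddef.h>
#include <stdint.h>

/* Unpack one line of MIPI CSI-2 RAW10 data (4 pixels per 5 bytes) into
 * 16-bit pixels holding the 10-bit value in bits 9:0.
 * `width` must be a multiple of 4; `packed` must hold width * 5 / 4 bytes. */
static void raw10_unpack_line(const uint8_t *packed, uint16_t *out, size_t width)
{
    for (size_t i = 0; i < width; i += 4) {
        const uint8_t *g = packed + (i / 4) * 5;  /* one 5-byte group */
        uint8_t lsbs = g[4];                      /* 2 LSBs of each pixel */
        for (size_t k = 0; k < 4; k++) {
            out[i + k] = (uint16_t)(((uint16_t)g[k] << 2) |
                                    ((lsbs >> (2 * k)) & 0x3u));
        }
    }
}
```

A 640-pixel line therefore occupies 800 packed bytes (640 x 10 / 8).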

---

Hi fmabrouk,

I am very sorry for the late reply.

I just received the reply from our internal application engineering (AE) team, and unfortunately it is bad news for you: MIPI CSI-2 cannot support raw data because of VIDEO_MUX bugs. This information will be added to the RT1170 reference manual.

Could you please check whether your AR1335 can be configured to output another format, e.g. RGB?

For 10-bit raw data, there is currently no workaround.

Again, sorry for the late response, and thank you very much for your effort.

Best Regards,

Kerry

---

Hi Kerry,

Just for clarification: does this mean that using an 8-bit monochrome grayscale image sensor is impossible with the RT1176 (the OV9281 to be precise, whose data format is RAW8)? Is this a fundamental limitation of the hardware, or just an issue in the SDK that will be fixed in the future?

I already have this sensor running using an NXP iMX8M Mini SoC which seems to use a similar MIPI CSI peripheral, but need to port to RT1176.

Best, Michael

---

Hi Michael,

>>I already have this sensor running using an NXP iMX8M Mini SoC

I am very interested in this. Could you provide the source code as well as the device tree file?

I am trying to make this work on the i.MX 8M Plus, which is very similar.

Thank you, MC

---

Hello.

I would like to know whether you succeeded in connecting the AR1335 camera to the i.MX 8M Plus.

Fabio

---

Hi Fabio,

Yes, I was able to do it using the stock driver.

So far I have used HD 1080p resolution.

The plan is to also try 4K and above in a few days.

---

Hello, thank you. I am new to this, so my question may be silly. Did you use Debian or Yocto? Could you please provide a link to the stock driver?

---

Hi Fabio,

I am using Yocto.

You will find the latest AR1335 sensor kernel driver here:

https://github.com/nxp-imx/isp-vvcam/tree/lf-5.15.y_2.0.0/vvcam/v4l2/sensor/ar1335

If you use Yocto, you also need isp-imx in addition to the isp-vvcam above.

To get the isp-imx source code, download and execute the .bin file on your Ubuntu host:

https://www.nxp.com/lgfiles/NMG/MAD/YOCTO/isp-imx-4.2.2.20.0.bin

Replace the version with the one used in your BSP (here we use v20).

Here is an example of how to deploy a driver for another sensor:

https://www.nxp.com/docs/en/application-note/AN13712.pdf

---

Hi michael_parthei,

According to our internal AE team, this is a limitation of the chip's MIPI CSI interface, not an SDK issue.

A related description will be added to the reference manual in the future.

If you want to use raw data, you need to use the parallel CSI interface instead of MIPI CSI.

Sorry for the inconvenience.

Best Regards,

kerry

---

Thanks, Kerry! I opened a new topic to discuss this issue: https://community.nxp.com/t5/i-MX-RT/RT1170-Using-MIPI-CSI-with-grayscale-or-raw-image-sensors/m-p/1...

---

Hi michael_parthei,

I have already double-checked with our internal expert and replied in your new post.

Please follow up on any new issues in that post.

Best Regards,

kerry

---

Hi fmabrouk,

So far, we do not have a document that directly covers adding another camera sensor.

As far as I know, the camera sensor vendor should also provide configuration tools that can generate the configuration code. Does the AR1335 vendor already provide related drivers?

I also checked internally and have not found any AR1335 driver for RT so far.

Best Regards,

kerry

---

Besides the questions I posted above: if I need to set my pixel clock to 80 MHz, which registers should I change in the MIPI CSI-2 demo code for the i.MX RT1170?

Thank you!
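Part of this is plain clock-root arithmetic: a root clock equals its selected source frequency divided by the root's divider, so for a given source you can pick the largest divider that still keeps the root at or above the required rate. A minimal sketch, assuming the requirement is `root >= target` (as for a pixel-side clock) and a divider range of 1 to 256; which mux input selects which PLL, and how the demo's `clock_root_config_t` values map onto the hardware divider, should be checked in the SDK and reference manual. The function name is hypothetical.

```c
#include <stdint.h>

#define ROOT_DIV_MAX 256u  /* assumed 8-bit divider field (divide by 1..256) */

/* Return the largest divider such that source_hz / div >= target_hz,
 * or 0 if even div = 1 cannot reach the target. */
static uint32_t pick_root_divider(uint32_t source_hz, uint32_t target_hz)
{
    if (target_hz == 0u || source_hz < target_hz) {
        return 0u;
    }
    uint32_t div = source_hz / target_hz;  /* floor keeps the result >= target */
    if (div > ROOT_DIV_MAX) {
        div = ROOT_DIV_MAX;                /* still >= target: source/256 > target */
    }
    return div;
}
```

With a 480 MHz source and an 80 MHz target this returns 6 (exactly 80 MHz); with a 528 MHz source it also returns 6, giving 88 MHz.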

---

Below is a summary of the image parameters I am interested in:

```ini
; [PLL PARAMETERS]
; Target Vt Pixel Frequency: 220 MHz
; Input Clock Frequency: 24 MHz
;
; Actual Vt Pixel Clock: 220 MHz
; Actual Op Pixel Clock: 110 MHz
;
; pll_multiplier (M value) = 55
; pre_pll_clk_div (N value) = 2
; pll_multiplier2 (M2 value) = 55
; pre_pll_clk_div2 (N2 value) = 2
; Fpfd = 12 MHz
; Fvco = 660 MHz
; Fvco2 = 660 MHz
; Vt Sys Divider = 1
; Vt Pix Divider = 3
; Op Sys Divider = 1
; Op Pix Divider = 6
;
; [IMAGE PARAMETERS]
; Requested Frames Per Second: 30
; Output Columns: 640
; Output Rows: 480
; Use Y Summing: Unchecked
; X-only Binning: Unchecked
; Allow Skipping: Checked
; Blanking Computation: HB Max then VB
;
; Max Frame Time: 33.3333 msec
; Max Frame Clocks: 7333333.3 clocks
; Readout Mode: 1, YSum: No, XBin: No
; Horiz clks: 640 active + 1688 blank = 2328 total
; Vert rows: 480 active + 2674 blank = 3154 total
; Output Cols: 640
; Output Rows: 480
; FOV Cols: 640
; FOV Rows: 480
; Actual Frame Clocks: 7342512 clocks
; Row Time: 10.582 usec / 2328 clocks
; Integration Time: 33 msec.
; Frame time: 33.375055 msec
; Maximum Frame Rate allowed: 191.739fps
; Frames per Sec: 29.962 fps
```
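The numbers in this summary are internally consistent, and reproducing them helps when changing resolution or frame rate. A minimal sketch, assuming the relationships implied by the listed values: Fpfd = Fin / N, Fvco = Fpfd x M (and likewise Fvco2 with N2, M2), Vt pixel clock = Fvco / (Vt Sys Divider x Vt Pix Divider), Op pixel clock = Fvco2 / (Op Sys Divider x Op Pix Divider), and frame time = (line length x frame length) / Vt pixel clock. The struct and function names are hypothetical.

```c
#include <stdint.h>

/* Hypothetical container for the values printed by the sensor tool. */
typedef struct {
    double   fin_hz;                   /* input clock */
    uint32_t n, m;                     /* pre_pll_clk_div, pll_multiplier */
    uint32_t n2, m2;                   /* pre_pll_clk_div2, pll_multiplier2 */
    uint32_t vt_sys_div, vt_pix_div;
    uint32_t op_sys_div, op_pix_div;
    uint32_t line_length;              /* active + horizontal blank, in clocks */
    uint32_t frame_length;             /* active + vertical blank, in rows */
} sensor_timing_t;

static double vt_pix_clk_hz(const sensor_timing_t *t)
{
    double fvco = t->fin_hz / t->n * t->m;         /* Fpfd * M */
    return fvco / (t->vt_sys_div * t->vt_pix_div);
}

static double op_pix_clk_hz(const sensor_timing_t *t)
{
    double fvco2 = t->fin_hz / t->n2 * t->m2;      /* Fpfd2 * M2 */
    return fvco2 / (t->op_sys_div * t->op_pix_div);
}

static uint64_t frame_clocks(const sensor_timing_t *t)
{
    return (uint64_t)t->line_length * t->frame_length;
}

static double frame_time_s(const sensor_timing_t *t)
{
    return (double)frame_clocks(t) / vt_pix_clk_hz(t);
}
```

Filling the struct with the values above (24 MHz input, N = 2, M = 55, dividers 1/3 and 1/6, 2328 x 3154 total) reproduces the 220 MHz and 110 MHz pixel clocks, 7342512 frame clocks, and about 33.375 ms per frame (29.962 fps).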

---

I am using the MIPI CSI-2 demo code on the i.MX RT1170 to get a 640x480 image from the AR1335.

The output format of this camera sensor is 10 bits. What changes do I need to make in the CSI driver so that I can capture the image I need?

I used the manufacturer's tool to generate the configuration file, yet I am far from getting an image because my CSI driver is configured incorrectly.

Could you please advise on what needs to be modified in the demo code's CSI driver to capture an image from the AR1335?

Kind regards,
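When adapting the demo to a new sensor, the frame-buffer geometry has to match what the receiver actually writes. A minimal sketch of the arithmetic for 640x480, assuming either that each pixel is stored in a 16-bit word (10 significant bits) or that the packed MIPI RAW10 line (5 bytes per 4 pixels) is stored as received; the alignment value and function names are hypothetical, and which layout the RT1170 CSI path can actually deliver must be confirmed separately.

```c
#include <stddef.h>
#include <stdint.h>

/* Bytes in one line when each pixel is stored in a 16-bit word. */
static size_t pitch_16bpp(size_t width)
{
    return width * 2u;
}

/* Bytes in one packed MIPI CSI-2 RAW10 line (4 pixels per 5 bytes). */
static size_t pitch_raw10_packed(size_t width)
{
    return (width * 10u + 7u) / 8u;
}

/* Round up to a power-of-two alignment (hypothetical DMA requirement). */
static size_t align_up(size_t value, size_t align)
{
    return (value + align - 1u) & ~(align - 1u);
}

/* Total frame-buffer size for a given line pitch and number of lines. */
static size_t frame_bytes(size_t pitch, size_t height)
{
    return pitch * height;
}
```

For 640x480 this gives a 1280-byte pitch and a 614400-byte frame with 16-bit storage, versus an 800-byte pitch (384000 bytes per frame) for packed RAW10; with a hypothetical 64-byte alignment, the 800-byte pitch would round up to 832.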

---

I verified with my oscilloscope that the camera sensor is streaming data, and I was able to capture the CSI register readings while debugging my code (please see the attached screenshot). However, I am not getting the CSI full-frame-buffer interrupt. Again, I believe this has to do with the way I initialized the MIPI CSI. Could you or someone else help me set this up properly, based on the image requirements I stated in my previous message?

Cheers!