11 Channel ADC 12000 samples per second


Hi,

We are facing an issue while taking ADC samples. We need to take 12000 samples per second.

We are using a FreeRTOS-based project.

The ADC task runs as one thread, and five more threads run alongside it.

Questions:

1) How can we achieve 12000 samples per second using FreeRTOS?

2) How should the PIT timer be configured to take this many samples per second?

3) Do we have to use DMA, or is there any other suggestion?

Thanks & Regards,

Vasu


Hi,

Thank you for your interest in NXP Semiconductor products and for the opportunity to serve you.

1) and 2) How to achieve 12000 samples per second using FreeRTOS, and how to configure the PIT timer for taking this many samples per second?

-- I suggest using the PIT timer to trigger the ADC_ETC module to sample the external signal. ADC_ETC supports SyncMode, trigger chains, and other features that are essential for sampling at this rate.

Please review the ADC External Trigger Control (ADC_ETC) chapter in the RM to learn about it.

3) Do we have to use DMA or any other suggestion?

-- Yes, DMA is used to transfer the results after the ADC conversion completes.

Have a great day,

TIC

-------------------------------------------------------------------------------

Note:

- If this post answers your question, please click the "Mark Correct" button. Thank you!

- We are following threads for 7 weeks after the last post, later replies are ignored

Please open a new thread and refer to the closed one, if you have a related question at a later point in time.

-------------------------------------------------------------------------------


Hi jeremyzhou,

Thank you for your response.

We have already implemented ADC_ETC with an 11-channel sync mode trigger.

We need to take 12800 samples per second.

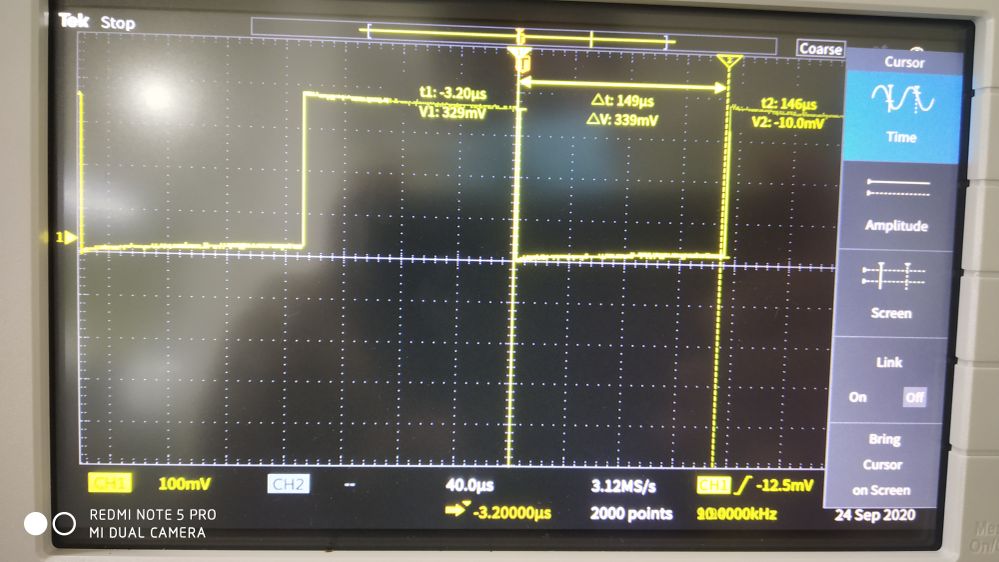

We have configured the PIT timer with a 78 microsecond period to trigger each ADC callback, but the ADC callback is not triggered at all. If we change the period to 156 microseconds, the callback is triggered.

/* Set timer period for channel 0 */

test_ticks = USEC_TO_COUNT(78U, CLOCK_GetFreq(kCLOCK_OscClk)); // 78 microseconds

PIT_SetTimerPeriod(ELM_PIT_BASE, ELM_PIT_TIMER_CHANNEL, test_ticks);

Why is the PIT timer not working at 78 microseconds?

Please suggest how to solve this issue.

Thanks & Regards,

Vasu
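A note on the numbers in the post above: 12800 samples per second corresponds to a period of 78.125 microseconds, so a whole-microsecond value of 78 gives a slightly faster rate. The following is a minimal sketch of that arithmetic in plain C, assuming a 24 MHz PIT source clock (an assumption; the thread only shows kCLOCK_OscClk). The function names are hypothetical and are not part of the SDK.

```c
#include <stdint.h>

/* Timer ticks in one trigger period, rounded to the nearest tick.
 * clk_hz:  timer source clock in Hz (24 MHz is assumed in the example).
 * rate_hz: desired trigger rate in triggers per second. */
uint32_t ticks_for_rate(uint32_t clk_hz, uint32_t rate_hz)
{
    return (clk_hz + rate_hz / 2u) / rate_hz;
}

/* Timer ticks for a period given in whole microseconds
 * (the same arithmetic a microseconds-to-count conversion performs). */
uint32_t ticks_for_usec(uint32_t clk_hz, uint32_t usec)
{
    return (uint32_t)(((uint64_t)usec * clk_hz) / 1000000u);
}

/* Resulting trigger rate in millihertz for a given tick count. */
uint64_t rate_millihz_for_ticks(uint32_t clk_hz, uint32_t ticks)
{
    return ((uint64_t)clk_hz * 1000u) / ticks;
}
```

Under the 24 MHz assumption, ticks_for_rate(24000000, 12800) gives 1875 ticks, while a 78 microsecond period gives 1872 ticks, which is about 12820.5 triggers per second. Whether the timer load register expects the tick count or the tick count minus one should be checked against the PIT chapter of the RM.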


Hi,

Thanks for your reply.

Could you describe the symptoms you see when the issue happens? That will help me figure it out.

Have a great day,

TIC


Hi @jeremyzhou,

Sorry for the late reply.

Now we are able to take 12800 samples per second.

We have created two projects: one is bare-metal and the other is FreeRTOS-based.

We are porting the same FreeRTOS ADC project to our original project, which contains MQTT, HTTP, etc.

We have created the ADC task as one thread. Whenever the ADC thread runs, the other threads do not run at all.

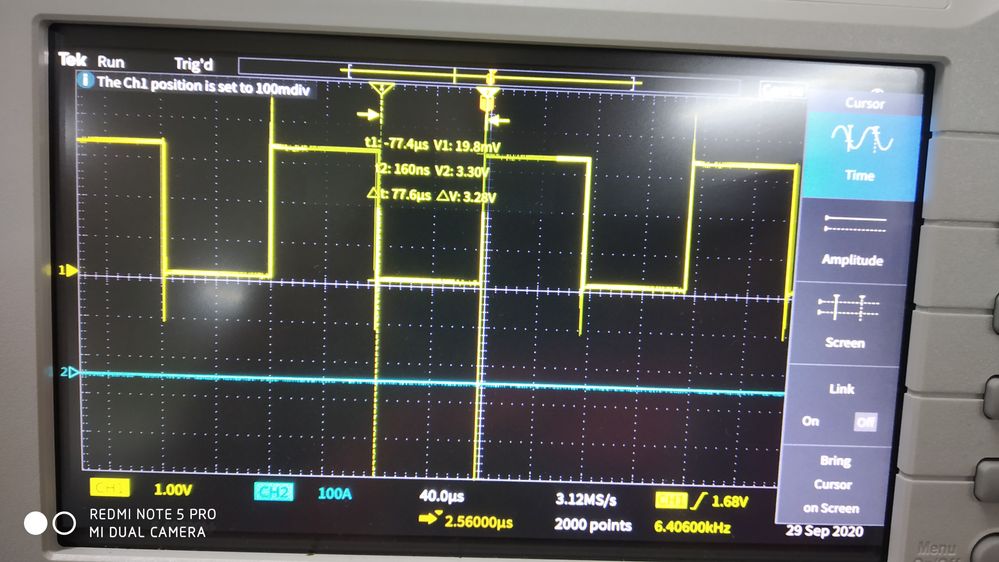

We found the issue: the ADC PIT timer fires every 78 microseconds, and during this time the other threads do not run at all.

If we increase the PIT timer interval to more than 400 microseconds, the other threads run fine.

We want to run the PIT timer at 78 microseconds in the FreeRTOS-based project.

How can we solve this issue?

Thanks & Regards,

Vasu
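One common way to reduce the load in a situation like this is to keep the interrupt handler very short and wake the processing task once per block of samples rather than once per sample set. The sketch below is an assumption about how that could look, not the method used in the attached project: sample_blocks_t, push_sample_set, and the block size of 128 sets are hypothetical names and values. It is plain C so it can be tried off-target; on the target, the ISR would notify the task (for example with a FreeRTOS task notification) only when the function returns true.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

#define NUM_CHANNELS   11u   /* 6 on ADC1 + 5 on ADC2, as in the thread */
#define SETS_PER_BLOCK 128u  /* hypothetical: 128 sets = 10 ms at 12.8 kHz */

/* Two blocks: the ISR fills one while the task processes the other. */
typedef struct {
    uint16_t data[2][SETS_PER_BLOCK][NUM_CHANNELS];
    size_t   fill;    /* sample sets already written to the active block */
    unsigned active;  /* index (0 or 1) of the block the ISR writes to */
} sample_blocks_t;

/* Store one set of NUM_CHANNELS results. Intended to be called from the
 * conversion-done ISR. Returns true when a block has just been completed;
 * *ready_block then holds the index of the block the task may process. */
bool push_sample_set(sample_blocks_t *sb, const uint16_t *set,
                     unsigned *ready_block)
{
    memcpy(sb->data[sb->active][sb->fill], set, sizeof sb->data[0][0]);
    sb->fill++;

    if (sb->fill < SETS_PER_BLOCK) {
        return false;          /* block not full yet: do not wake the task */
    }

    *ready_block = sb->active; /* hand the full block to the task */
    sb->active ^= 1u;          /* the ISR continues in the other block */
    sb->fill = 0;
    return true;
}
```

With these assumed values the task wakes about 100 times per second instead of 12800 times, and it has one full block period to finish its calculation before that block is overwritten.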


Hi,

Thanks for your reply.

I think I need more information. Could you explain how you use the PIT to trigger the ADC to sample the external signal 12000 times per second, and share the code?

Have a great day,

TIC


Hi @jeremyzhou,

Thanks for your reply.

I have attached the FreeRTOS project.

We want to run the PIT timer at 78 microseconds. The ADC works with periods down to 150 microseconds, but if we change the period to 78 microseconds, the error ISR ADC_ETC_ERROR_IRQ_IRQHandler is triggered.

We toggle a GPIO in the callback function.

Please suggest a solution for this issue.

Thanks & Regards,

Vasu


Hi,

After a brief review of your demo, in my opinion, the demo code is bad.

Firstly, I don't think you completely understand how ADC_ETC triggers the ADC conversion, which causes many errors in the code. For example, when using ADC_ETC, the input channel is determined by ADC_ETC instead of the ADC, so you need to select the correct HWTS[7:0] when initializing ADC_ETC, and so on.

Please refer carefully to the evkmimxrt1064_adc_etc_hardware_trigger_conv demo in the SDK library.

In your demo, one PIT trigger leads to 8 ADC conversions in sequence, so all 8 conversions must complete within the 78 microsecond PIT period. Otherwise, the next PIT trigger will be ignored by ADC_ETC because the previous trigger has not finished yet, and the error interrupt occurs too.

Have a great day,

TIC
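The timing constraint described in the reply above can be checked with simple arithmetic. This is a minimal sketch, assuming the conversions in a chain run back to back and each takes the same time; the function names and the per-conversion times used in the example are hypothetical and are not taken from the RM or the demo.

```c
#include <stdbool.h>
#include <stdint.h>

/* Total time of one trigger chain in nanoseconds, assuming the
 * conversions run back to back with an identical conversion time. */
uint32_t chain_time_ns(uint32_t conversions, uint32_t conv_time_ns)
{
    return conversions * conv_time_ns;
}

/* True when the whole chain finishes before the next trigger arrives. */
bool chain_fits_period(uint32_t period_ns, uint32_t conversions,
                       uint32_t conv_time_ns)
{
    return chain_time_ns(conversions, conv_time_ns) < period_ns;
}
```

For example, with an assumed 5 microseconds per conversion, 8 conversions take 40 microseconds and fit in a 78 microsecond period. With an assumed 12 microseconds per conversion they take 96 microseconds, which fits in 156 microseconds but not in 78. The real conversion time depends on the ADC clock, resolution, sample time, and averaging settings.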


Hi @jeremyzhou,

The attached code, using only ADC1 with 6 channels and the PIT timer at 78 us, works fine.

We face the issue only when adding ADC2.

Thanks & Regards,

Vasu


Hi @jeremyzhou,

ADC1 with 8 channels runs fine at 78 us.

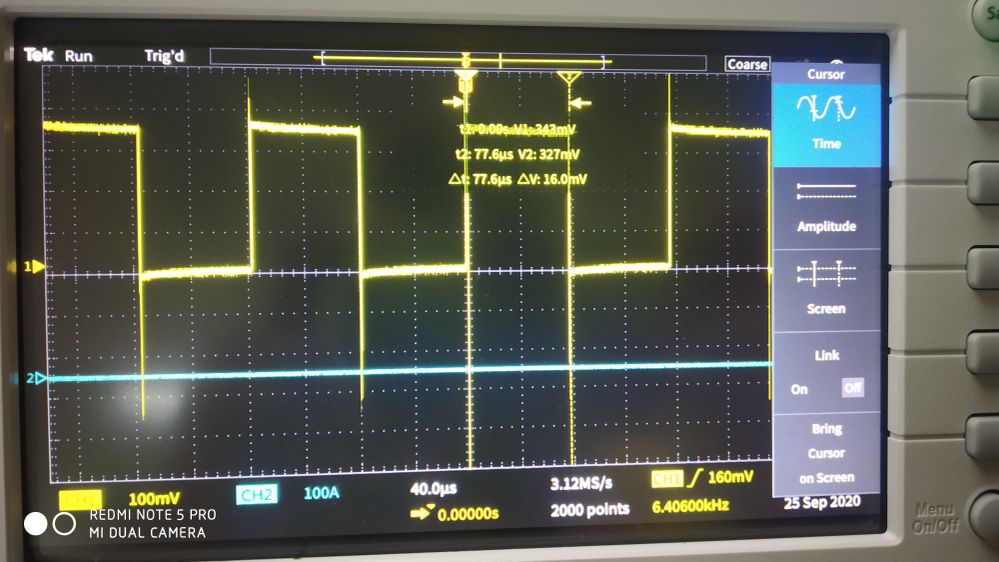

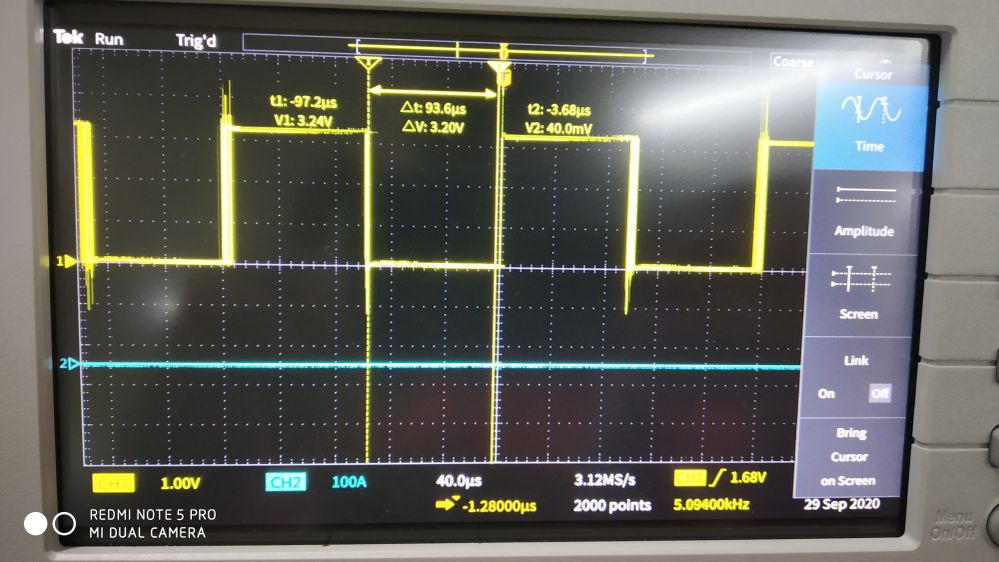

If we use 6 channels on ADC1 and 5 channels on ADC2, it runs, but not at the proper time interval.

How can we run 6 channels on ADC1 and 5 channels on ADC2?

Can we run ADC1 with 11 channels?

Thanks & Regards,

Vasu


Thanks for your reply.

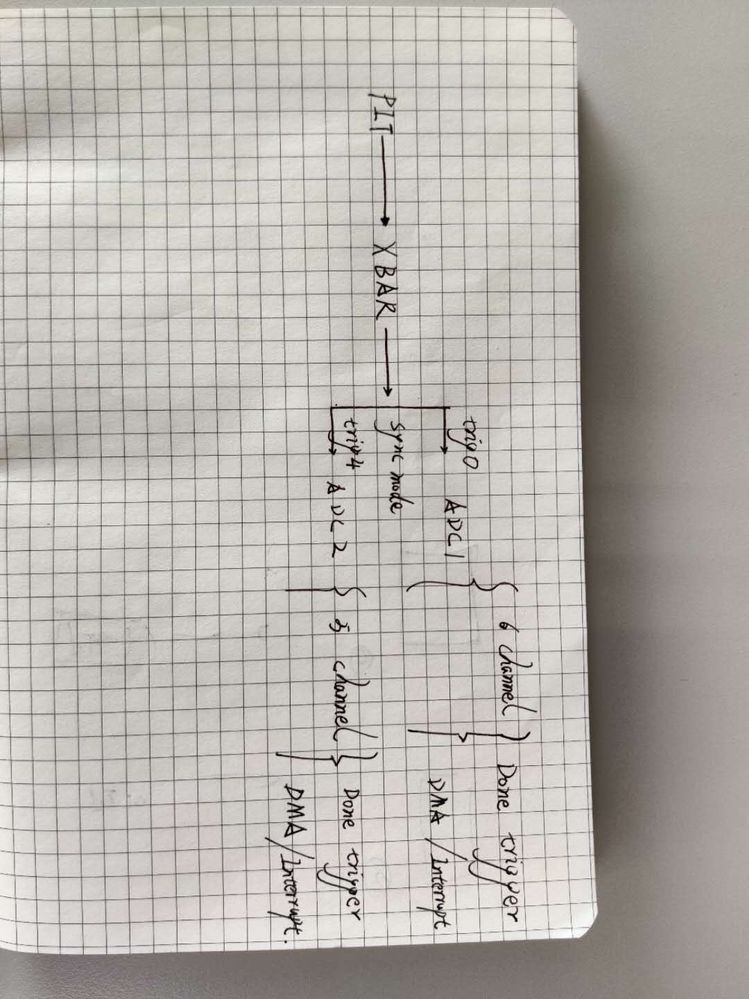

1) How to run ADC1 6 channel and ADC2 5?

-- The attachment shows the flow of using ADC1 and ADC2 to sample the signal. I think it's simpler than your approach.

2) Can we run ADC1 with 11 channels?

-- Yes, it's possible. However, compared with the above approach, you need to use trig0 and trig1 to trigger ADC1 to complete a round of 11 conversions. Because ADC1 can't sample the external signal on multiple pins simultaneously, it has to sample them in turn, so it takes more time to complete the 11 conversions than the above approach.

Have a great day,

TIC
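To compare the two options in numbers, here is a rough sketch. It assumes every conversion takes the same time and that, in sync mode, ADC1 and ADC2 convert in parallel, so a round lasts as long as the longer of the two chains. The function names and the conversion time are hypothetical.

```c
#include <stdint.h>

/* Round time when a single ADC converts all channels one after another. */
uint32_t round_time_single_adc_ns(uint32_t channels, uint32_t conv_time_ns)
{
    return channels * conv_time_ns;
}

/* Round time when two ADCs convert in parallel: the longer chain decides. */
uint32_t round_time_two_adcs_ns(uint32_t adc1_channels,
                                uint32_t adc2_channels,
                                uint32_t conv_time_ns)
{
    uint32_t longest = (adc1_channels > adc2_channels) ? adc1_channels
                                                       : adc2_channels;
    return longest * conv_time_ns;
}
```

With an assumed 5 microseconds per conversion, 11 channels on ADC1 alone take 55 microseconds per round, while 6 channels on ADC1 plus 5 on ADC2 take 30 microseconds.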


Hi @jeremyzhou,

Thanks for your brief explanation.

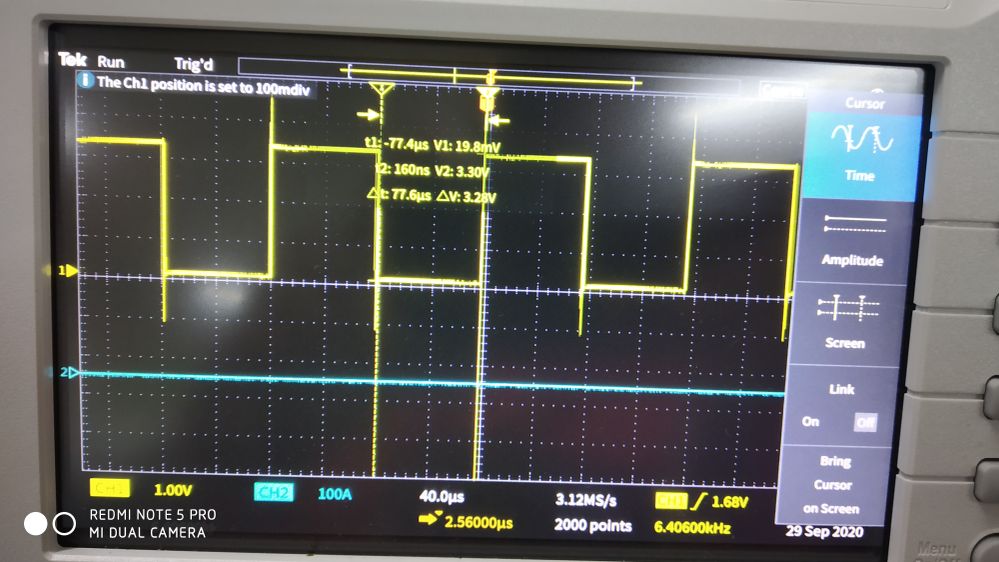

Now the ADC1 and ADC2 FreeRTOS project runs at 78 us.

But we are facing one more issue.

If we use OCRAM as the RAM, it runs fine.

If we use SDRAM as the RAM, the ISR latency increases (20 us).

With the SCB_DisableDCache(); call commented out, it runs fine.

With the SCB_DisableDCache(); call uncommented, the ISR callback latency increases.

We found the cause:

Inside the ADC callback function we do some calculations, which is why the interrupt callback latency increases when the D-cache is disabled.

1) Why do SRAM and SDRAM (without disabling the D-cache) run fine along with our calculation?

2) With SDRAM (D-cache disabled), why does the interrupt latency increase?

3) With SDRAM (D-cache disabled), can we solve the issue by using DMA?

4) With SDRAM (D-cache disabled), does the latency of other callback interrupts also increase?

5) How do we use ADC + DMA (peripheral-to-memory method)?

Thanks & Regards,

Vasu


Thanks for your reply.

1) In SDRAM (disable d cache) why interrupt latency is increasing?

-- SDRAM read performance is not very good when the D-cache is disabled; its performance increases by about 5 times after enabling the D-cache.

2) How to use ADC+DMA (peripheral to memory method)?

-- ADC_ETC can trigger a DMA request once the ADC conversion completes.

Have a great day,

TIC


Hi @jeremyzhou,

Thanks for your reply.

1) Is it compulsory to disable the D-cache?

/* Data cache must be temporarily disabled to be able to use sdram */

SCB_DisableDCache();

Our main project contains network-related tasks, TLS, LCD, etc.

2) ADC+DMA

/* Initialize the ADC_ETC. */

ADC_ETC_GetDefaultConfig(&adcEtcConfig);

adcEtcConfig.XBARtriggerMask = 1U; /* Enable the external XBAR trigger0. */

adcEtcConfig.dmaMode = kADC_ETC_TrigDMAWithLatchedSignal;

ADC_ETC_Init(DEMO_ADC_ETC_BASE, &adcEtcConfig);

ADC_ETC_EnableDMA(DEMO_ADC_ETC_BASE, 1);

Is calling ADC_ETC_EnableDMA(DEMO_ADC_ETC_BASE, 1) alone sufficient?

I have referred to https://community.nxp.com/t5/i-MX-RT/ADC-DMA/td-p/918230 but did not fully understand it.

Which method do we have to follow to make ADC_ETC + DMA work?

Thanks & Regards,

Vasu


Hi,

Thanks for your reply.

1) It's compulsory to disable DCache?

-- Of course not, it depends on you. However, to balance read and write performance in practical applications, I recommend enabling the cache in most cases.

2) In order to work ADC_ETC + DMA which method we have to follow?

-- In my opinion, you'd better first learn how to use the DMA by reviewing the RM, then refer to the application notes in the link:

https://community.nxp.com/t5/i-MX-RT/ADC-DMA/td-p/918230

Have a great day,

TIC


Hi @jeremyzhou,

Thanks for your reply.

1) If we enable the D-cache, Ethernet + TLS does not work.

2) I will read it and then get back to you.

Thanks & Regards,

Vasu


Hi @jeremyzhou,

Thanks for your reply.

We want to trigger an 11-channel ADC setup using the PIT timer.

If possible, can you give information about how to configure XBARA properly?

We are planning to use 6 channels on ADC1 and 5 channels on ADC2, and all channels should be triggered at the same time.

Can ADC1 and ADC2 run together from a single trigger, using a single callback function?

Thanks & Regards,

Vasu