Recognition model for microcontroller use

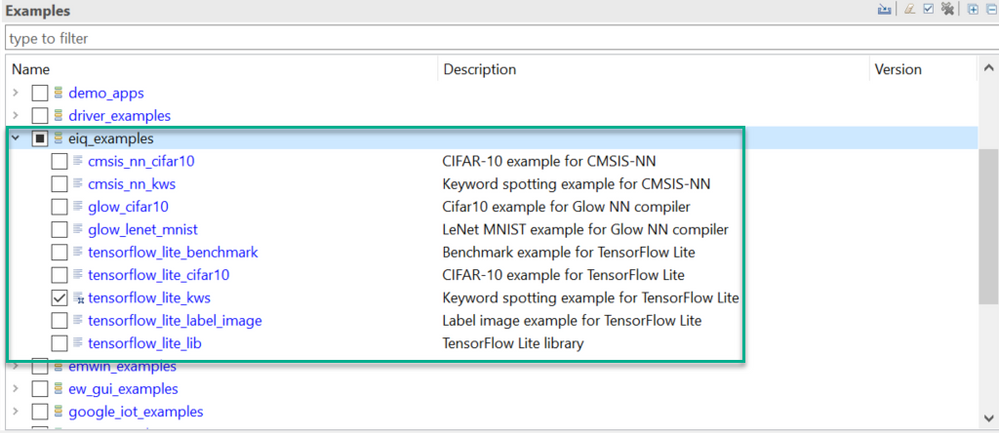

The SDK_2.7.0_EVKB-IMXRT1050 contains several eIQ machine learning demo projects, and tensorflow_lite_kws is one of them.

It is a keyword spotting example based on Keyword Spotting for Microcontrollers, and it deploys a depthwise separable convolutional neural network (DS-CNN), an architecture that uses the same depthwise separable convolutions as MobileNet. It can classify a one-second audio clip as either silence, an unknown word, "yes", "no", "up", "down", "left", "right", "on", "off", "stop", or "go".

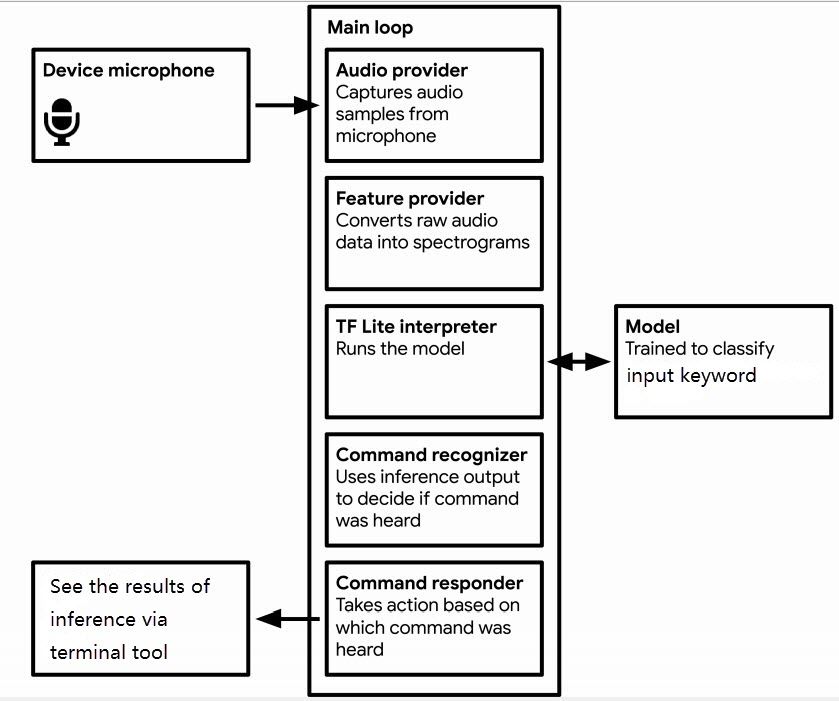

Figure 1 shows the components that comprise it.

Fig 1

Training Our New Model

The model we are using is trained with the TensorFlow script which is designed to demonstrate how to build and train a model for audio recognition using TensorFlow.

The script makes it very easy to train an audio recognition model. Among other things, it allows us to do the following:

- Download a dataset with audio featuring 20 spoken words.

- Choose which subset of words to train the model on.

- Specify what type of preprocessing to use on the audio.

- Choose from several different model architectures.

- Optimize the model for microcontrollers using quantization.

When we run the script, it downloads the dataset, trains a model, and outputs a file representing the trained model. We then use some other tools to convert this file into the correct form for TensorFlow Lite.

Training in virtual machine (VM)

Preparation

Make sure TensorFlow is installed. Because the script downloads over 2GB of training data, the machine also needs a good internet connection and enough free disk space.

Note: The training process itself can take several hours, so be patient.

Training

To begin the training process, use the following command to clone ML-KWS-for-MCU.

git clone https://github.com/ARM-software/ML-KWS-for-MCU.git

The training scripts are configured via a set of command-line flags that control everything from the model’s architecture to the words it will be trained to classify.

The following command runs the script that begins training. You can see that it has a lot of command-line arguments:

python ML-KWS-for-MCU/train.py --model_architecture ds_cnn --model_size_info 5 64 10 4 2 2 64 3 3 1 1 64 3 3 1 1 64 3 3 1 1 64 3 3 1 1 \
--wanted_words=zero,one,two,three,four,five,six,seven,eight,nine \
--dct_coefficient_count 10 --window_size_ms 40 \
--window_stride_ms 20 --learning_rate 0.0005,0.0001,0.00002 \
--how_many_training_steps 10000,10000,10000 \
--data_dir=./speech_dataset --summaries_dir ./retrain_logs --train_dir ./speech_commands_train

Some of these, like --wanted_words=zero,one,two,three,four,five,six,seven,eight,nine, select which words the model is trained to classify. Note that the list must not contain spaces, otherwise the shell splits it into separate arguments. By default, the selected words are yes, no, up, down, left, right, on, off, stop, go, but we can provide any combination of the following words, all of which appear in our dataset:

- Common commands: yes, no, up, down, left, right, on, off, stop, go, backward, forward, follow, learn

- Digits zero through nine: zero, one, two, three, four, five, six, seven, eight, nine

- Random words: bed, bird, cat, dog, happy, house, Marvin, Sheila, tree, wow
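The word selection above determines how many output classes the model has. As a minimal sketch (the helper name `build_label_list` is hypothetical, and the two extra class names are assumptions chosen to match the "silence" and "unknown" classes described later in this article), the full label list for a given --wanted_words value could be built like this:

```python
# Hypothetical helper, not the training script's own code: it only mirrors the
# label order used in this article ("silence", "unknown", then the wanted words).
def build_label_list(wanted_words):
    """Turn a comma-separated --wanted_words value into the full label list."""
    words = [w.strip() for w in wanted_words.split(",") if w.strip()]
    return ["silence", "unknown"] + words


labels = build_label_list("zero,one,two,three,four,five,six,seven,eight,nine")
print(len(labels))   # 12 output classes: 10 digits plus silence and unknown
print(labels[:4])
```

Choosing a different set of words changes the number of classes, which is why a new word list always means training a new model.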

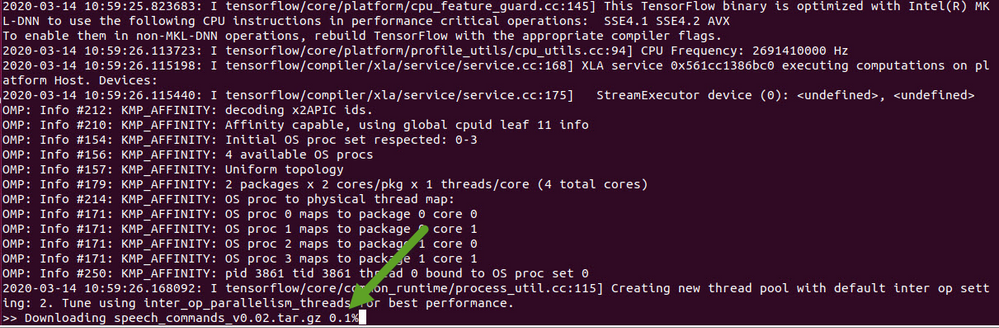

Others set up the output of the script, such as --train_dir ./speech_commands_train, which defines where the trained model will be saved. Leave the arguments as they are and run the command. The script starts by downloading the Speech Commands dataset (Figure 2), which consists of over 105,000 WAVE audio files of people saying thirty different words. This data was collected by Google and released under a CC BY license, and you can help improve it by contributing five minutes of your own voice. The archive is over 2GB, so this part may take a while, but you should see progress logs, and once the archive has been downloaded you won't need to repeat this step. You can find more information about the dataset in the Speech Commands paper.

Fig 2

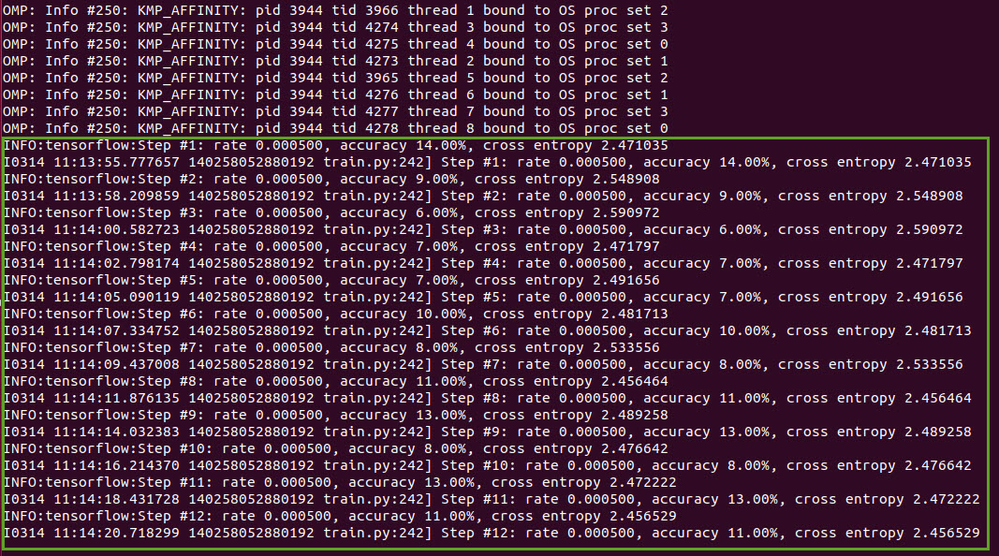

Once the downloading has completed, some more output will appear. There might be some warnings, which you can ignore as long as the command continues running. Later, you'll see logging information that looks like this (Figure 3).

Fig 3

This shows that the initialization process is done and the training loop has begun. You'll see that it outputs information for every training step. Here's a breakdown of what it means:

Step shows which step of the training loop we're on. In this case, there are going to be 30,000 steps in total, so you can look at the step number to get an idea of how close training is to finishing.

rate is the learning rate that controls the speed of the network's weight updates. Early on this is a comparatively high number (0.0005), but for later training stages it is reduced fivefold to 0.0001, and finally to 0.00002.
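To make the staged schedule concrete, here is a minimal sketch that maps a training step to its learning rate, using the values passed to --how_many_training_steps and --learning_rate in the command above. The function name is hypothetical and the 1-based step counting is an assumption; the training script's own bookkeeping may differ in detail.

```python
def learning_rate_for_step(step, steps_per_stage, rates):
    """Return the learning rate in effect at a given (1-based) training step."""
    boundary = 0
    for stage_steps, rate in zip(steps_per_stage, rates):
        boundary += stage_steps
        if step <= boundary:
            return rate
    raise ValueError("step is beyond the total number of training steps")


steps_per_stage = [10000, 10000, 10000]   # --how_many_training_steps
rates = [0.0005, 0.0001, 0.00002]         # --learning_rate

print(sum(steps_per_stage))               # 30000 steps in total
for step in (1, 10000, 10001, 20001, 30000):
    print(step, learning_rate_for_step(step, steps_per_stage, rates))
```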

accuracy is the percentage of clips in this training step whose class was predicted correctly. This value will often fluctuate a lot, but should increase on average as training progresses. The model outputs an array of numbers, one for each label, and each number is the predicted likelihood of the input being that class. The predicted label is picked by choosing the entry with the highest score. The scores are always between zero and one, with higher values representing more confidence in the result.
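The label selection described above can be sketched in a few lines. This is only an illustration: the raw output values below are made up, and the softmax step is an assumption suggested by the labels_softmax output name used later in this article.

```python
import math


def softmax(logits):
    """Convert raw outputs into scores between 0 and 1 that sum to 1."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]   # subtract peak for stability
    total = sum(exps)
    return [e / total for e in exps]


def predict(scores, labels):
    """Pick the label whose score is highest."""
    best = max(range(len(scores)), key=lambda i: scores[i])
    return labels[best], scores[best]


labels = ["silence", "unknown", "zero", "one", "two", "three",
          "four", "five", "six", "seven", "eight", "nine"]
# Made-up raw outputs for one clip, for illustration only.
logits = [0.2, 0.1, 0.4, 3.0, 0.3, 0.1, 0.2, 0.5, 0.1, 0.3, 0.2, 0.1]

scores = softmax(logits)
label, confidence = predict(scores, labels)
print(label, round(confidence, 3))
```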

cross-entropy is the result of the loss function that we're using to guide the training process. This is a score that's obtained by comparing the vector of scores from the current training run to the correct labels, and this should trend downwards during training.
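As a rough illustration of why this number should fall as the model improves, the sketch below computes the cross-entropy for a single clip as the negative logarithm of the score given to the correct label. This assumes one correct class per clip, and the score vectors are made up for the example.

```python
import math


def cross_entropy(scores, correct_index):
    """Loss for one clip: -log of the score assigned to the correct label."""
    return -math.log(scores[correct_index])


# Three made-up score vectors for a clip whose correct label has index 2.
confident_right = [0.05, 0.05, 0.90]
unsure = [0.30, 0.30, 0.40]
confident_wrong = [0.90, 0.05, 0.05]

for scores in (confident_right, unsure, confident_wrong):
    print(round(cross_entropy(scores, 2), 3))
# prints 0.105, 0.916, 2.996
```

The more confidence the model puts on the correct label, the smaller the loss, so a downward trend means the scores are moving toward the correct labels.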

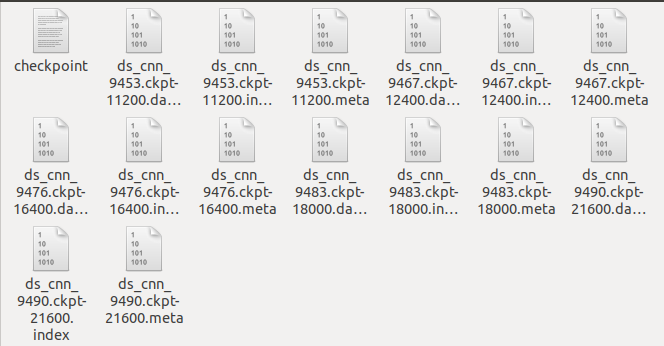

- Checkpoint

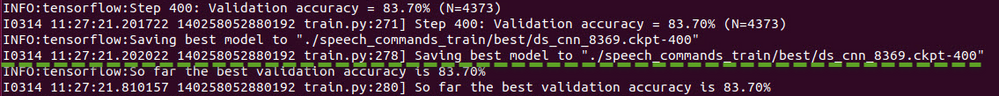

After a hundred steps, you should see a line like this:

This saves the current trained weights to a checkpoint file (Figure 4). If your training script gets interrupted, you can look for the last saved checkpoint and then restart the script with --start_checkpoint=./speech_commands_train/best/ds_cnn_xxxx.ckpt-400 as a command-line argument to resume from that point.

Fig 4
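If several checkpoints have been saved, the one to resume from is the one with the highest step number. The sketch below picks it from a list of file names. The helper name and the example file names are hypothetical; the only thing assumed about the real files is that they contain ".ckpt-" followed by the step number, as in the --start_checkpoint example above.

```python
import re

# Matches "<anything>.ckpt-<step>" with an optional extension such as ".index".
CHECKPOINT_PATTERN = re.compile(r"^(?P<prefix>.+\.ckpt-(?P<step>\d+))(?:\..*)?$")


def latest_checkpoint(filenames):
    """Return the checkpoint prefix with the highest training step, or None."""
    best_prefix, best_step = None, -1
    for name in filenames:
        match = CHECKPOINT_PATTERN.match(name)
        if match and int(match.group("step")) > best_step:
            best_prefix = match.group("prefix")
            best_step = int(match.group("step"))
    return best_prefix


# Made-up directory listing, for illustration only.
files = [
    "checkpoint",
    "ds_cnn_8800.ckpt-400.index",
    "ds_cnn_8800.ckpt-400.meta",
    "ds_cnn_9100.ckpt-1200.index",
    "ds_cnn_9100.ckpt-1200.data-00000-of-00001",
]
prefix = latest_checkpoint(files)
print("--start_checkpoint=./speech_commands_train/best/" + prefix)
```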

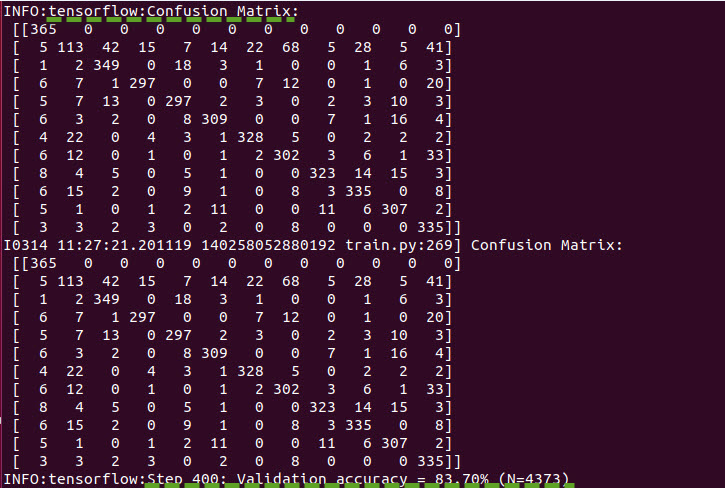

- Confusion Matrix

After four hundred steps, this information will be logged:

The first section is a confusion matrix. To understand what it means, you first need to know the labels being used, which in this case are "silence", "unknown", "zero", "one", "two", "three", "four", "five", "six", "seven", "eight", and "nine". Each column represents a set of samples that were predicted to be each label, so the first column represents all the clips that were predicted to be silence, the second all those that were predicted to be unknown words, the third "zero", and so on.

Each row represents clips by their correct, ground truth labels. The first row is all the clips that were silence, the second clips that were unknown words, the third "zero", etc.

This matrix can be more useful than just a single accuracy score because it gives a good summary of what mistakes the network is making. In this example you can see that all of the entries in the first row are zero (Figure 5), apart from the initial one. Because the first row is all the clips that are actually silence, this means that none of them were mistakenly labeled as words, so we have no false negatives for silence. This shows the network is already getting pretty good at distinguishing silence from words.

If we look down the first column though, we see a lot of non-zero values. The column represents all the clips that were predicted to be silence, so positive numbers outside of the first cell are errors. This means that some clips of real spoken words are actually being predicted to be silence, so we do have quite a few false positives.

A perfect model would produce a confusion matrix where all of the entries were zero apart from a diagonal line through the center. Spotting deviations from that pattern can help you figure out how the model is most easily confused, and once you've identified the problems you can address them by adding more data or cleaning up categories.
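The row and column reading described above can be reproduced with a small sketch. The labels and predictions here are toy data, not output from the training script; rows are the true labels and columns are the predicted labels, as in the article.

```python
def confusion_matrix(true_labels, predicted_labels, num_classes):
    """Rows are ground-truth classes, columns are predicted classes."""
    matrix = [[0] * num_classes for _ in range(num_classes)]
    for truth, guess in zip(true_labels, predicted_labels):
        matrix[truth][guess] += 1
    return matrix


def false_negatives(matrix, cls):
    """Clips of class `cls` that were predicted as something else (row errors)."""
    return sum(matrix[cls]) - matrix[cls][cls]


def false_positives(matrix, cls):
    """Clips of other classes that were predicted as `cls` (column errors)."""
    return sum(row[cls] for row in matrix) - matrix[cls][cls]


# Toy data: 0 = silence, 1 = unknown, 2 = "zero".
true_labels = [0, 0, 0, 1, 1, 2, 2, 2]
predicted_labels = [0, 0, 0, 0, 1, 2, 0, 2]

matrix = confusion_matrix(true_labels, predicted_labels, 3)
for row in matrix:
    print(row)
print(false_negatives(matrix, 0))   # 0: no silence clip was labeled as a word
print(false_positives(matrix, 0))   # 2: two spoken clips were labeled as silence
```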

Fig 5

- Validation

After the confusion matrix, you should see a line like the one shown in Figure 5.

It's good practice to separate your data set into three categories. The largest (in this case roughly 80% of the data) is used for training the network, a smaller set (10% here, known as "validation") is reserved for evaluation of the accuracy during training, and another set (the last 10%, "testing") is used to evaluate the accuracy once after the training is complete.

The reason for this split is that there's always a danger that networks will start memorizing their inputs during training. By keeping the validation set separate, you can ensure that the model works with data it's never seen before. The testing set is an additional safeguard to make sure that you haven't just been tweaking your model in a way that happens to work for both the training and validation sets, but not a broader range of inputs.

The training script automatically separates the data set into these three categories, and the logging line above shows the accuracy of the model when run on the validation set. Ideally, this should stick fairly close to the training accuracy. If the training accuracy increases but the validation accuracy doesn't, that's a sign that overfitting is occurring, and your model is only learning things about the training clips, not broader patterns that generalize.
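One common way to get a stable 80/10/10 split is to hash each file name, so that a clip always lands in the same set no matter how often the script is run. The sketch below illustrates that idea; whether the training script splits the data exactly this way is not shown in this article, so treat the function and the file names as assumptions.

```python
import hashlib


def which_set(filename, validation_percentage=10, testing_percentage=10):
    """Deterministically assign a file to training, validation, or testing."""
    digest = hashlib.sha1(filename.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100          # stable value from 0 to 99
    if bucket < validation_percentage:
        return "validation"
    if bucket < validation_percentage + testing_percentage:
        return "testing"
    return "training"


# Made-up file names, for illustration only.
counts = {"training": 0, "validation": 0, "testing": 0}
for i in range(10000):
    counts[which_set("clip_%05d.wav" % i)] += 1
print(counts)
```

Because the assignment depends only on the file name, adding new clips later never moves an existing clip from the training set into the validation or testing set.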

Training Finished

In general, training is the process of iteratively tweaking a model’s weights and biases until it produces useful predictions. The training script writes these weights and biases to checkpoint files (Figure 6).

Fig 6

A TensorFlow model consists of two main things:

- The weights and biases resulting from training

- A graph of operations that combine the model’s input with these weights and biases to produce the model’s output

At this juncture, our model’s operations are defined in the Python scripts, and its trained weights and biases are in the most recent checkpoint file. We need to unite the two into a single model file with a specific format, which we can use to run inference. The process of creating this model file is called freezing—we’re creating a static representation of the graph with the weights frozen into it.

To freeze our model, we run a script that is called as follows:

python ML-KWS-for-MCU/freeze.py --model_architecture ds_cnn --model_size_info 5 64 10 4 2 2 64 3 3 1 1 64 3 3 1 1 64 3 3 1 1 64 3 3 1 1 \
--wanted_words=zero,one,two,three,four,five,six,seven,eight,nine \
--dct_coefficient_count 10 --window_size_ms 40 \
--window_stride_ms 20 --checkpoint ./speech_commands_train/best/ds_cnn_9490.ckpt-21600 \
--output_file=./ds_cnn.pb

To point the script toward the correct graph of operations to freeze, we pass some of the same arguments we used in training. We also pass a path to the checkpoint file we want to freeze. In this example it is a checkpoint from the best directory, whose filename ends with the training step at which it was saved (21600).

The frozen graph will be output to a file named ds_cnn.pb. This file is the fully trained TensorFlow model. It can be loaded by TensorFlow and used to run inference. That’s great, but it’s still in the format used by regular TensorFlow, not TensorFlow Lite.

Convert to TensorFlow Lite

Conversion is an easy step: we just need to run a single command. Now that we have a frozen graph file to work with, we’ll use toco, the command-line interface for the TensorFlow Lite converter.

toco --graph_def_file=./ds_cnn.pb --output_file=./ds_cnn.tflite \
--input_shapes=1,49,10,1 --input_arrays=Reshape_1 --output_arrays='labels_softmax' \
--inference_type=QUANTIZED_UINT8 --mean_values=227 --std_dev_values=1 \
--change_concat_input_ranges=false \
--default_ranges_min=-6 --default_ranges_max=6

In the arguments, we specify the model that we want to convert, the output location for the TensorFlow Lite model file, and some other values that depend on the model architecture. We also provide some arguments (inference_type, mean_values, and std_dev_values) that tell the converter how to map the model's low-precision values to real numbers.
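To see what mean_values and std_dev_values do, the sketch below applies the mapping that the TensorFlow Lite converter documents for quantized inputs, real_value = (quantized_value - mean_value) / std_dev_value, to the values used in the command above. The function names are hypothetical, and the rounding and clamping in `quantize` are assumptions for illustration.

```python
def dequantize(quantized, mean_value, std_dev_value):
    """Map an 8-bit input value back to the real number it represents."""
    return (quantized - mean_value) / std_dev_value


def quantize(real, mean_value, std_dev_value):
    """Map a real number to the nearest representable 8-bit value (0 to 255)."""
    q = round(real * std_dev_value + mean_value)
    return max(0, min(255, q))


MEAN, STD = 227, 1   # --mean_values=227 --std_dev_values=1

print(dequantize(0, MEAN, STD))     # -227.0, smallest representable input
print(dequantize(227, MEAN, STD))   # 0.0
print(dequantize(255, MEAN, STD))   # 28.0, largest representable input
print(quantize(-50.0, MEAN, STD))   # 177
```

With these settings the 8-bit input covers the real range from -227 to 28 in steps of 1, so any input feature outside that range is clipped.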

The converted model will be written to ds_cnn.tflite. This is a fully formed TensorFlow Lite model!
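The --input_shapes=1,49,10,1 value passed to the converter follows from the preprocessing flags used for training. The sketch below shows the arithmetic, assuming a 16 kHz sample rate (an assumption, since the rate is not stated in this article), the one-second clip length mentioned at the beginning, and no padding of the last window.

```python
def spectrogram_frames(clip_ms, window_ms, stride_ms, sample_rate):
    """Number of analysis windows that fit into one clip without padding."""
    clip_samples = sample_rate * clip_ms // 1000
    window_samples = sample_rate * window_ms // 1000
    stride_samples = sample_rate * stride_ms // 1000
    if clip_samples < window_samples:
        return 0
    return 1 + (clip_samples - window_samples) // stride_samples


frames = spectrogram_frames(
    clip_ms=1000,        # one-second audio clip
    window_ms=40,        # --window_size_ms 40
    stride_ms=20,        # --window_stride_ms 20
    sample_rate=16000,   # assumed sample rate
)
dct_coefficients = 10    # --dct_coefficient_count 10

input_shape = (1, frames, dct_coefficients, 1)
print(input_shape)       # (1, 49, 10, 1)
```

If the window size, stride, or coefficient count is changed for training, the shape given to the converter has to change with it.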

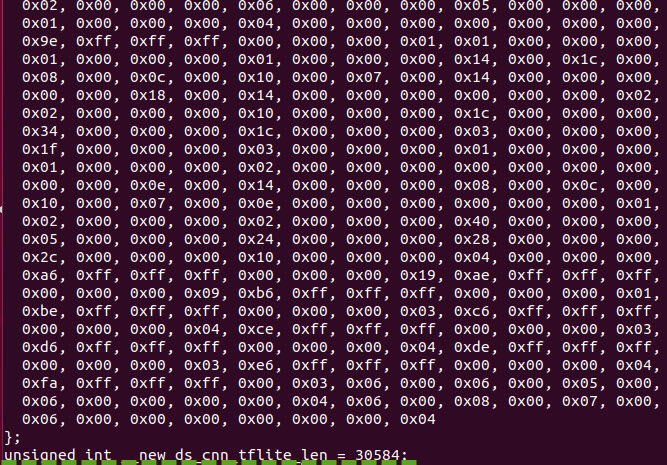

Create a C array

We’ll use the xxd command to convert the TensorFlow Lite model into a C array:

xxd -i ./ds_cnn.tflite > ./ds_cnn.h
cat ./ds_cnn.h

The final part of the output is the file’s contents, which are a C array and an integer holding its length, as follows:

Fig 7
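If xxd is not available on your machine, the same kind of header can be produced with a few lines of Python. This is only a sketch of the output layout described above (a byte array plus an integer with its length); the function name, the array name, and the sample bytes are hypothetical, and the exact spacing differs from what xxd prints.

```python
def to_c_array(data, name, bytes_per_line=12):
    """Render bytes as a C array plus a length variable."""
    lines = []
    for offset in range(0, len(data), bytes_per_line):
        chunk = data[offset:offset + bytes_per_line]
        lines.append("  " + ", ".join("0x%02x" % b for b in chunk))
    body = ",\n".join(lines)
    return (
        "unsigned char %s[] = {\n%s\n};\n"
        "unsigned int %s_len = %d;\n" % (name, body, name, len(data))
    )


# Made-up bytes for illustration; for a real model, read the file instead, e.g.
#   with open("ds_cnn.tflite", "rb") as f: data = f.read()
sample = bytes([0x1C, 0x00, 0x00, 0x00, 0x54, 0x46, 0x4C, 0x33])
print(to_c_array(sample, "ds_cnn_tflite"))
```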

Next, we’ll integrate this newly trained model with the tensorflow_lite_kws project.

Using the Model in tensorflow_lite_kws Project

To use the new model, we need to do two things:

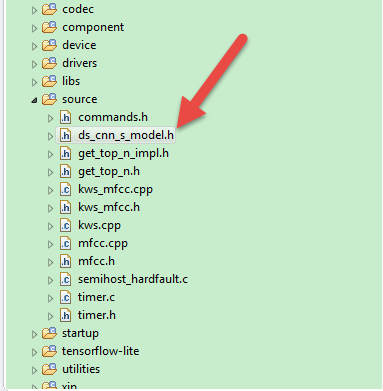

- In source/ds_cnn_s_model.h, replace the original model data with our new model.

- Update the label names in source/kws.cpp with our new "zero", "one", "two", "three", "four", "five", "six", "seven", "eight" and "nine" labels.

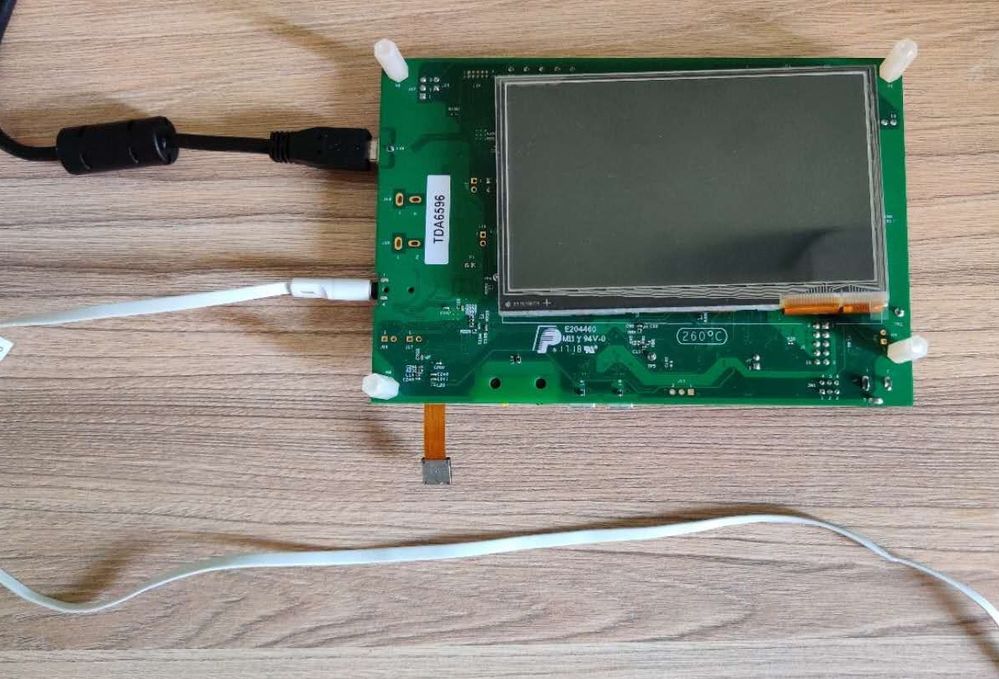

const std::string labels[] = {"Silence", "Unknown", "zero", "one", "two", "three", "four", "five", "six", "seven", "eight", "nine"};

Before running the model on the EVKB-IMXRT1050 board (Figure 8), refer to readme.txt for the preparation steps. The file also describes the testing procedure, so please follow it.

Fig 8

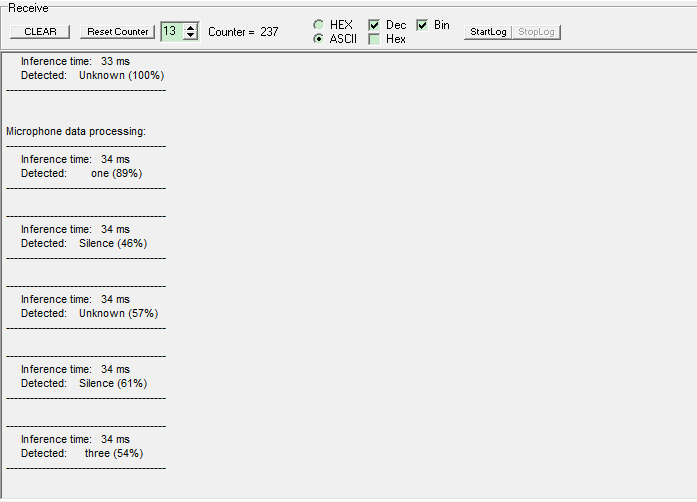

Figure 9 shows the testing I did. I've attached the model file, so please give it a try yourself.

Fig 9

@jeremyzhou, first of all, thanks for this example. I bought the board and followed the instructions to add KWS to the IMXRT1050 board. I am stuck at the final stage, in the section:

Using the Model in tensorflow_lite_kws Project

I can't find source/ds_cnn_s_model.h, the file to be replaced with the trained ds_cnn.h. I have searched online and in the downloaded SDK_2.7.0_EVKB-IMXRT1050. There is no kws_tensorflow_lite example or directory in it.

I'd appreciate your quick help here.

Hi,

In MCUXpresso, expand the source folder in the Workspace window and you will see ds_cnn_s_model.h, as shown below.

BR,

Jeremy

Thanks, jeremyzhou, for your quick response. My problem is that I can't find the project kws_tensorflow_lite. Your screenshot shows only the source folder. How did you get the source folder in your IDE? Which project is it? Can you please share the link to it?

I have searched the downloaded MCUXpresso IDE workspace folder (C:\Users\B2F\Documents\MCUXpressoIDE_11.1.1_3241\workspace), which came with all the examples, but no luck. Is there some kind of version mismatch?

Hi,

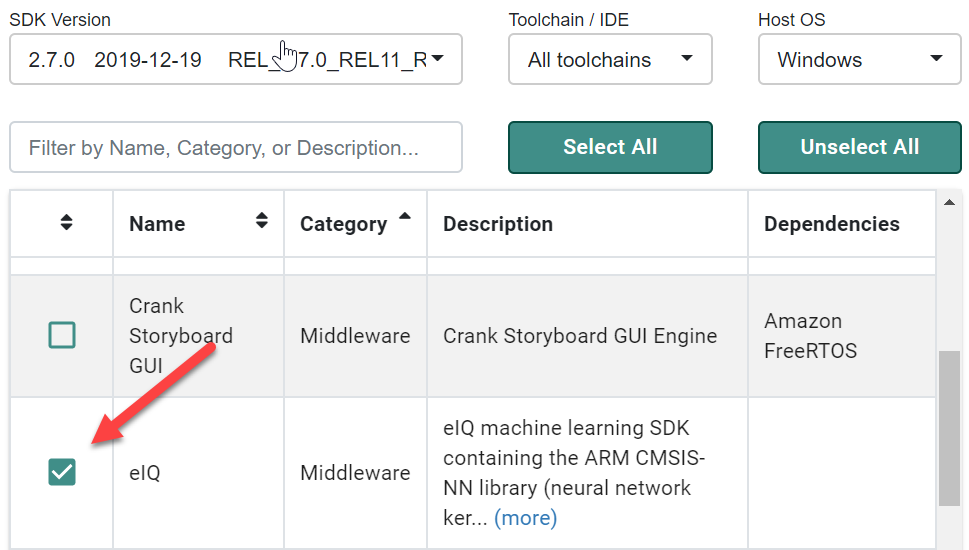

As I pointed out at the beginning of this article, the tensorflow_lite_kws demo comes from the SDK_2.7.0_EVKB-IMXRT1050, and you can download this SDK library via the link below. Install the SDK library in the MCUXpresso IDE, and after that you can load the tensorflow_lite_kws demo.

Please give it a try.

https://mcuxpresso.nxp.com/en/welcome

BR,

Jeremy

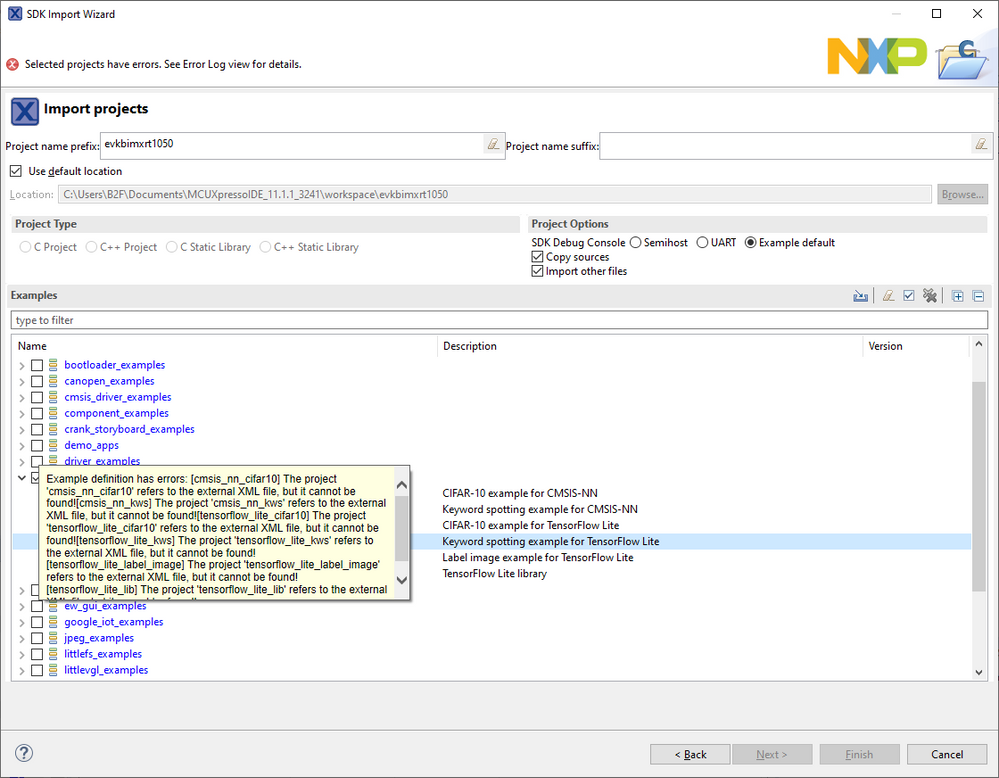

jeremyzhou, it turns out I had followed all the steps but never selected eIQ while building the SDK, and hence it was missing.

Now that I have it, I can't import any of the eIQ examples, including tensorflow_lite_kws:

"tensorflow_lite_kws] The project 'tensorflow_lite_kws' refers to the external XML file, but it cannot be found!"

On eiq

Hi,

Thanks for your reply.

That seems a bit weird. I've not encountered it before.

I'd suggest you delete the installed SDK library and clean up the projects in the workspace directory, then build a completely new SDK library (as shown below) and install it in the MCUXpresso IDE.

After doing that, re-import the eIQ demos.

BR,

Jeremy

Yes, selecting only eIQ with tensorflow_lite_kws worked great. I am able to test the default keywords and the digits 0 to 9. It's just that the recognition is not very accurate.

Also, do you know if I can increase the number of labels from 12 to 20? Where can I add the numbers to the existing buffer, combining the data of both models? I tried, but it didn't work.

Do you have any project based on KWS where you used the display shown in your picture above? I am trying to show the output on the screen rather than in Tera Term.

Hi,

Thanks for your reply.

1) Do you know if I can increase the number of labels from 12 to 20?

-- Yes, you can. However, that requires training a completely new model with all the wanted words, and the article has already demonstrated the training procedure.

2) Do you have any project based on KWS where you used the display shown in your picture above?

-- The SDK library contains some GUI demos that you can refer to.

BR,

Jeremy