Using DMA with native CS for a SPI peripheral

Hi everyone,

I previously faced an issue with the delay between CS assertion and the first SCLK edge on SPI, for which I received a suggestion to use native CS with DMA disabled. This is the link to the entire discussion.

The problem with disabling DMA is that it puts a heavy load on the CPU, so I am still looking for answers to the following two questions from my previous post:

- How does enabling DMA cause the native CS pin to go high after each byte transfer?

- The project that I am working on also has an ADC interfaced over SPI (not on the same bus as the DAC). Communicating continuously with both the ADC and the DAC at high sample rates will load the CPU heavily. For this reason I was thinking of forcing DMA for every transaction over SPI. With your current solution I will not be able to do that. So, is there any way to resolve this issue with DMA enabled? If not, what can I do to reduce the load on the CPU?

Thanks,

Mehul

IMX8MPLUS

Hello @mehul_dabhi

I hope you are doing very well.

Could you please share the steps to replicate the issue on my side?

Also, are you using Cortex M or Cortex A?

SPI as Master or Slave? (I guess it is Master.)

Also, please share your device tree entries related to SPI.

A captured image of the signals would be helpful.

Best regards,

Salas.

Hi @Manuel_Salas,

Apologies for my delayed response.

Could you please share the steps to replicate the issue on my side?

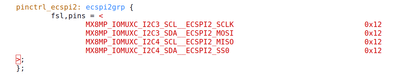

>> 1. Configure the SPI node as shown in the shared screenshot of the DTS.

2. Disable DMA in spi_imx.c as shown in the screenshot below.

3. Transmit some data over SPI.
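For step 3, a minimal userspace sketch is given here, assuming the bus is exposed through the spidev driver. The device path (`/dev/spidev1.0`), the 1 MHz clock and the helper names `pack_24bit`, `make_transfer` and `send_24bit` are hypothetical and not taken from this thread; only the Linux spidev ioctl interface is real. It sends 24 bits as one message of three 8-bit words, which is the case shown in the waveforms.

```c
#include <assert.h>
#include <fcntl.h>
#include <linux/spi/spidev.h>
#include <stdint.h>
#include <stdio.h>
#include <string.h>
#include <sys/ioctl.h>
#include <unistd.h>

/* Split a 24-bit value into three bytes, most significant byte first. */
static void pack_24bit(uint32_t value, uint8_t out[3])
{
    out[0] = (uint8_t)((value >> 16) & 0xff);
    out[1] = (uint8_t)((value >> 8) & 0xff);
    out[2] = (uint8_t)(value & 0xff);
}

/* Describe ONE transfer of len bytes with 8 bits per word.
 * cs_change = 0 asks the SPI core to keep CS asserted for the whole transfer. */
static struct spi_ioc_transfer make_transfer(const uint8_t *tx, uint8_t *rx,
                                             uint32_t len, uint32_t speed_hz)
{
    struct spi_ioc_transfer xfer;

    assert(len > 0);
    memset(&xfer, 0, sizeof(xfer));
    xfer.tx_buf = (uintptr_t)tx;
    xfer.rx_buf = (uintptr_t)rx;
    xfer.len = len;
    xfer.speed_hz = speed_hz;
    xfer.bits_per_word = 8;
    xfer.cs_change = 0;
    return xfer;
}

/* Send 24 bits as a single 3-byte message.
 * Returns the ioctl result (bytes transferred) or -1 on error. */
static int send_24bit(const char *dev, uint32_t value)
{
    uint8_t tx[3];
    uint8_t rx[3] = { 0 };
    struct spi_ioc_transfer xfer;
    int fd, ret;

    pack_24bit(value, tx);
    xfer = make_transfer(tx, rx, sizeof(tx), 1000000 /* assumed 1 MHz */);

    fd = open(dev, O_RDWR);
    if (fd < 0) {
        perror("open");
        return -1;
    }
    ret = ioctl(fd, SPI_IOC_MESSAGE(1), &xfer);
    if (ret < 0)
        perror("SPI_IOC_MESSAGE");
    close(fd);
    return ret < 0 ? -1 : ret;
}
```

Calling `send_24bit("/dev/spidev1.0", 0x123456)` while probing CS and SCLK should be enough to compare the DMA and non-DMA builds of the driver.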

Also, are you using Cortex M or Cortex A?

>> I am using Cortex A.

SPI as Master or Slave? (I guess is master).

>> Yes, the SPI is configured as Master.

Also, please share your Device tree related to SPI.

>>

A captured Image of signals would be helpful.

>> When I switched to native CS, I observed the waveform below while transmitting 24 bits with 8 bits-per-word (Yellow - CS & Blue - SCLK). The CS was going high after each byte transfer.

To resolve the above issue, it was suggested that I disable DMA as described in the steps above, and that resolved it (below snap yellow - clock and blue - clock).

If anything else is needed to reproduce the issue, I am happy to help with that.

Thanks,

Mehul

Hello @mehul_dabhi

Actually, this behavior is expected. When you are using the SPI as Master, the CS signal should be configured as a GPIO pad; the driver then handles when CS is asserted and deasserted.

When you are using the SPI as Slave, you should use the dedicated (native) CS signal.

Hi @Manuel_Salas,

Thanks for your prompt reply.

Please try replacing the native ECSPI2_SS0 with any GPIO, without disabling DMA.

>> This is what I had configured initially. I faced the issue of the delay between CS assertion and the first rising edge of SCLK, and then switched to the dedicated CS.

The original issue link is here. A screenshot is also included below for your reference.

So if I use a GPIO pad for CS, won't I face this issue again and end up back in the same loop?

Thanks,

Mehul

Hello @Manuel_Salas,

I tried tweaking the driver source, and the only hard-coded delay I could find is in the section shown in the screenshot below.

Here, the delay to be generated was found to be 0. Apart from this, are there any other hard-coded delays that might contribute? If I intend to reduce these delays, where would you suggest I make changes to improve the throughput?

Thanks,

Mehul

Hi @Manuel_Salas ,

I would really appreciate some insights or suggestions on my recent query.

Thanks,

Mehul

We are facing a roadblock in development due to an issue with SPI.

Without DMA, the CS delay is minimal, but CPU utilisation becomes extremely high. Using DMA offloads the CPU, but we then encounter the significant CS delays described above, which makes it infeasible.

We are currently stuck and would appreciate any insights on reducing these delays while using DMA. If optimisation isn't possible, could you shed some light on the underlying reasons? Understanding this will help us reconsider our hardware choices.

Looking forward to your guidance.

Thanks,

Mehul
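
On the question of the underlying reason, one possible explanation is sketched below. It is an assumption, not something confirmed anywhere in this thread: the native ECSPI chip select appears to stay asserted only for one hardware burst, whose size is programmed in the BURST_LENGTH field of the control register, and the Linux spi-imx driver appears to program that field to bits-per-word when DMA is used but to the whole transfer length in PIO mode. The field offset, the 4096-bit limit and the helper names `ecspi_burst_field` and `ss_assertions` are illustrative and should be checked against the i.MX 8M Plus reference manual and the spi-imx.c of the kernel in use.

```c
#include <assert.h>
#include <stdint.h>

/* Assumed position of BURST_LENGTH in the ECSPI control register. */
#define ECSPI_CTRL_BL_OFFSET 20
/* Assumed maximum burst: 12-bit field holding (bits - 1). */
#define ECSPI_MAX_BURST_BITS 4096u

/* Register field value for a burst of `bits` bits (field stores bits - 1). */
static uint32_t ecspi_burst_field(uint32_t bits)
{
    assert(bits >= 1 && bits <= ECSPI_MAX_BURST_BITS);
    return (bits - 1u) << ECSPI_CTRL_BL_OFFSET;
}

/* Number of separate native-CS assertions needed to move total_bits
 * when the controller releases CS after every burst of burst_bits. */
static uint32_t ss_assertions(uint32_t total_bits, uint32_t burst_bits)
{
    assert(burst_bits >= 1);
    return (total_bits + burst_bits - 1u) / burst_bits;
}
```

Under this model, a 24-bit transfer with an 8-bit burst gives `ss_assertions(24, 8) == 3`, which matches CS going high after every byte with DMA enabled, while a 24-bit burst gives `ss_assertions(24, 24) == 1`, which matches the single CS pulse seen with DMA disabled. If the model holds for the kernel in use, the place to experiment would be where the driver sets the burst length for DMA transfers.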