Video Playback Performance Evaluation on i.MX6DQ Board

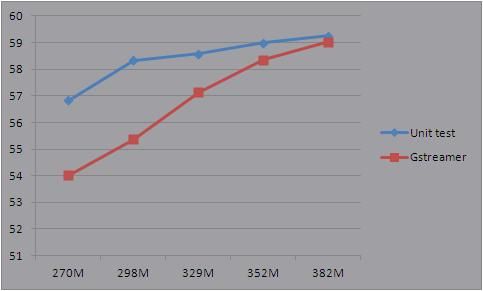

This article measures the video playback capability of the i.MX6DQ at different VPU clock settings.

1. Preparation

Board: i.MX6DQ SD

Bitstream: 1080p sunflower clip at 40 Mbps, considered the toughest H.264 clip. The original clip is repeated 20 times to generate a longer raw video, which is then encapsulated in an MP4 container. This minimizes the influence of GStreamer's startup workload when comparing against the VPU unit test.

Kernels: separate kernels are built, one per VPU clock setting: 270 MHz, 298 MHz, 329 MHz, 352 MHz, and 382 MHz.

Test setting: 1080p content decoded and displayed on a 1080p device (no resize).

2. Test commands for the VPU unit test and GStreamer

Tiled-format video playback is faster than NV12, so the experiments below use the tiled format.

Unit test command: (we set the frame rate with -a 70, higher than the 60 fps refresh rate of 1080p HDMI)

/unit_tests/mxc_vpu_test.out -D "-i /media/65a78bbd-1608-4d49-bca8-4e009cafac5e/sunflower_2B_2ref_WP_40Mbps.264 -f 2 -y 1 -a 70"

GStreamer command: (free-running, to get the highest playback speed)

gst-launch filesrc location=/media/65a78bbd-1608-4d49-bca8-4e009cafac5e/sunflower_2B_2ref_WP_40Mbps.mp4 typefind=true ! aiurdemux ! vpudec framedrop=false ! queue max-size-buffers=3 ! mfw_v4lsink sync=false

3. Video playback framerate measurement

During the tests, we run "echo performance > /sys/devices/system/cpu/cpu0/cpufreq/scaling_governor" so that the CPU always runs at its highest frequency and can respond to any interrupt quickly.
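
For scripted test runs, the same governor setting can be applied from Python. This is a minimal sketch, not part of the original test setup: the function names and the `sysfs_root` parameter are hypothetical, and it writes to every `cpuN` entry rather than only `cpu0` as the article does. Whether the other cores need a separate write depends on how the kernel groups them into cpufreq policies.

```python
import glob
import os


def set_governor(governor, sysfs_root="/sys/devices/system/cpu"):
    """Write `governor` to each cpuN/cpufreq/scaling_governor file.

    Returns the list of files written. On a real board this needs root.
    `sysfs_root` is a parameter only so the function can be tried on a
    scratch directory (an assumption of this sketch).
    """
    pattern = os.path.join(sysfs_root, "cpu[0-9]*", "cpufreq", "scaling_governor")
    written = []
    for path in sorted(glob.glob(pattern)):
        with open(path, "w") as f:
            f.write(governor)
        written.append(path)
    return written


def read_governors(sysfs_root="/sys/devices/system/cpu"):
    """Return {"cpu0": "<governor>", ...} for every CPU that exposes cpufreq."""
    pattern = os.path.join(sysfs_root, "cpu[0-9]*", "cpufreq", "scaling_governor")
    result = {}
    for path in sorted(glob.glob(pattern)):
        cpu_name = os.path.basename(os.path.dirname(os.path.dirname(path)))
        with open(path) as f:
            result[cpu_name] = f.read().strip()
    return result
```

On the board, calling `set_governor("performance")` as root and then `read_governors()` before each round confirms that the setting is still in place.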

For each VPU clock setting, we run 5 rounds of tests. The maximum and minimum values are removed, and the remaining 3 values are averaged to get the final playback frame rate.
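
The averaging rule can be sketched in a few lines of Python. The function name `trimmed_mean` is hypothetical; the sample values are the five unit-test playback rates measured at 270 MHz, taken from the results table. When the maximum occurs twice, as it does in that row, only one copy is dropped, which matches the average reported in the table.

```python
def trimmed_mean(samples):
    """Drop one minimum and one maximum, then average what is left."""
    if len(samples) < 3:
        raise ValueError("need at least three samples")
    ordered = sorted(samples)
    kept = ordered[1:-1]  # remove the single lowest and single highest value
    return sum(kept) / len(kept)


# Playback fps of the five unit-test rounds at 270 MHz (from the results table).
unit_test_270 = [57.3, 57.04, 57.3, 56.15, 55.4]
print(round(trimmed_mean(unit_test_270), 2))  # 56.83
```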

| VPU clock | Test | #1 Dec | #1 Playback | #2 Dec | #2 Playback | #3 Dec | #3 Playback | #4 Dec | #4 Playback | #5 Dec | #5 Playback | Min Playback | Max Playback | Avg Playback |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 270 MHz | unit test | 57.8 | 57.3 | 57.81 | 57.04 | 57.78 | 57.3 | 57.87 | 56.15 | 57.91 | 55.4 | 55.4 | 57.3 | 56.83 |
| 270 MHz | GST | | 53.76 | | 54.163 | | 54.136 | | 54.273 | | 53.659 | 53.659 | 54.273 | 54.01967 |
| 298 MHz | unit test | 60.97 | 58.37 | 60.98 | 58.55 | 60.97 | 57.8 | 60.94 | 58.07 | 60.98 | 58.65 | 57.8 | 58.65 | 58.33 |
| 298 MHz | GST | | 56.755 | | 49.144 | | 53.271 | | 56.159 | | 56.665 | 49.144 | 56.755 | 55.365 |
| 329 MHz | unit test | 63.8 | 59.52 | 63.92 | 52.63 | 63.8 | 58.1 | 63.82 | 58.26 | 63.78 | 59.34 | 52.63 | 59.52 | 58.56667 |
| 329 MHz | GST | | 57.815 | | 55.857 | | 56.862 | | 58.637 | | 56.703 | 55.857 | 58.637 | 57.12667 |
| 352 MHz | unit test | 65.79 | 59.63 | 65.78 | 59.68 | 65.78 | 59.65 | 66.16 | 49.21 | 65.93 | 57.67 | 49.21 | 59.68 | 58.98333 |
| 352 MHz | GST | | 58.668 | | 59.103 | | 56.419 | | 58.08 | | 58.312 | 56.419 | 59.103 | 58.35333 |
| 382 MHz | unit test | 64.34 | 56.58 | 67.8 | 58.73 | 67.75 | 59.68 | 67.81 | 59.36 | 67.77 | 59.76 | 56.58 | 59.76 | 59.25667 |
| 382 MHz | GST | | 59.753 | | 58.893 | | 58.972 | | 58.273 | | 59.238 | 58.273 | 59.753 | 59.03433 |

Note: the Dec columns show the VPU decoding rate and the Playback columns show the overall playback rate, both in fps. The GST rows report playback only.

Some explanation:

Why does the GStreamer performance keep improving while the unit test is flatter? GStreamer calls the VPU through a wrapper that makes the VPU API more intuitive to use. At the lowest clock, overall GST playback is constrained by the VPU (VPU decoding at 57.8 fps). As the VPU clock increases and decoding exceeds 60 fps, the constraint becomes the 60 fps display refresh rate.

The video display overhead of GStreamer is only about 1 fps, similar to that of the unit test.
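
The bottleneck argument can be illustrated with a deliberately simple model: playback can be no faster than the slower of the decoder and the display. This is a sketch under that assumption only; it ignores the wrapper and sink overhead, and the function names are hypothetical. The numbers are the round #2 Dec values and the GST averages from the results table.

```python
DISPLAY_REFRESH_FPS = 60.0  # 1080p60 display used in the test


def playback_ceiling(decode_fps, refresh_fps=DISPLAY_REFRESH_FPS):
    """Upper bound on playback fps: the slower of decoder and display."""
    return min(decode_fps, refresh_fps)


def limiting_stage(decode_fps, refresh_fps=DISPLAY_REFRESH_FPS):
    """Name the stage that sets the ceiling in this simple model."""
    return "vpu" if decode_fps < refresh_fps else "display"


# VPU clock in MHz -> (round #2 decode fps, averaged GST playback fps)
results = {
    270: (57.81, 54.01967),
    298: (60.98, 55.365),
    329: (63.92, 57.12667),
    352: (65.78, 58.35333),
    382: (67.80, 59.03433),
}

for clock, (dec_fps, gst_fps) in sorted(results.items()):
    ceiling = playback_ceiling(dec_fps)
    print(f"{clock} MHz: limited by {limiting_stage(dec_fps)}, "
          f"ceiling {ceiling:.2f} fps, GST gap {ceiling - gst_fps:.2f} fps")
```

In this model only the 270 MHz point is VPU-limited; from 298 MHz upward the ceiling is the display, and the gap between the ceiling and the measured GST average shrinks to about 1 fps at 382 MHz.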

Based on the test results, at 352 MHz the overall 1080p video playback on a 1080p display reaches ~60 fps.

Alternatively, by time-sharing between two pipelines with two displays, we can do 2 x 1080p @ 30 fps video playback.

However, this experiment is valid only for 1080p video playback on a 1080p display. For interlaced clips, or for displays whose size is not 1080p, the overall playback performance is limited by post-processing such as de-interlacing and resizing.

Hello,

Thanks for presenting this information. It's very beneficial.

I'm debugging a related problem and have a few questions.

The problem:

I'm playing multiple network streams (4 - 8 - 16) to a 1080p screen using GStreamer. The screen supports DVI only, so I'm using an HDMI-to-DVI cable. Of course, I'm scaling down the streams using the mfw_isink element to fit them on the screen. All streams start fine without any noticeable error, but after a few hours the video freezes without giving any error messages.

The pipeline I'm using is

appsrc -> output-selector -> (vpudec | jpegdec) -> input-selector -> mfw_isink

My questions:

1. The system will be playing video 24/7 and I don't care about power consumption. Is it better to always use the command "echo performance > /sys/devices/system/cpu/cpu0/cpufreq/scaling_governor" ?

2. Why do you use "queue max-size-buffers=3" between the decoder and the sink? How did you decide on a buffer count of 3?

3. Do you have any tools for monitoring the VPU and IPU performance?

4. What is more likely to be the bottleneck, the display or the decoder ?

Thanks

Hi Tarek,

1. Freezing issue: can you monitor the RAM usage (top -b | grep Mem) while your pipelines are running?

2. Queue: I believe 'max-size-buffers' is reduced because vpudec needs a certain number of buffers circulating in the pipeline at all times. With the default value, vpudec will at some point run out of buffers.

Hi Leo,

1. I don't see any memory leaks. I've used top, valgrind, and mtrace, so I have no doubts.

2. I'm not quite sure I understand that! The default value is 20 buffers, and we need to reduce this number to 3 buffers so that the VPU does not run out of buffers? I would have thought that we need to increase the number, right?

jackmao should have a better understanding of why we need to decrease the queue's buffers. AFAIK, vpudec needs at least 6 buffers running, and we cannot change that through the vpudec properties, so the only parameter left is the queue's buffer count. In fact, I inspected the queue element and it seems that the default is 200!

max-size-buffers : Max. number of buffers in the queue (0=disable)

flags: readable, writable

Unsigned Integer. Range: 0 - 4294967295 Default: 200 Current: 200

BTW, have you tried using the mfw_ipucsc element for down-scaling?

Leo

Hi Leo,

I'm using mfw_isink, which I think uses the same IPU as mfw_ipucsc. Do you think there is any additional benefit from using it?

I will try it anyway so my pipeline will be like this:

appsrc -> vpudec -> mfw_ipucsc -> mfw_isink. Is that correct?

Hi Tarek,

mfw_ipucsc is a color space converter and resolution scaler, so you can remove it from the pipeline if you do not need these features. On the other hand, if you add it without a capsfilter next to it, (I believe) it does not do anything.

Leo

Hi Leo,

I do need to scale down, but my question is:

What is the difference between scaling down using mfw_ipucsc and mfw_isink? Is there any performance gain?

Oh I see. I am not sure. jackmao, can you comment on this?

Leo

There should be no big difference in scaling down, because they both use the IPU.

The VPU uses 6 buffers for decoding, the v4lsink uses two of them, and the queue uses 3. You can't set the queue size too large; otherwise there are not enough buffers left to run.
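
One way to read the buffer accounting above is as a fixed pool shared by the decoder, the queue, and the sink. The sketch below is only that reading, not a description of how vpudec actually manages its buffers: the function name and the pool model are assumptions, and the numbers (6, 2, 3, and the default of 200) come from the comments in this thread.

```python
def free_decoder_buffers(pool_size, held_by_sink, queue_max):
    """Buffers left for the decoder when the queue is completely full.

    Assumes a fixed pool shared by decoder, queue and sink.
    queue_max == 0 means "no limit", as in the queue's max-size-buffers property.
    """
    available_to_queue = pool_size - held_by_sink
    if queue_max == 0:
        held_by_queue = available_to_queue  # an unlimited queue can take them all
    else:
        held_by_queue = min(queue_max, available_to_queue)
    return pool_size - held_by_sink - held_by_queue


print(free_decoder_buffers(6, 2, 3))    # 1: the decoder can still make progress
print(free_decoder_buffers(6, 2, 200))  # 0: the queue can absorb every free buffer
```

Under this model, a small queue limit guarantees that at least one buffer always returns to the decoder, while the default limit lets the queue hold every buffer the sink is not using.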

Hi Junping,

Do I also need to use a queue before mfw_isink?