Transfer Learning and the Importance of Datasets

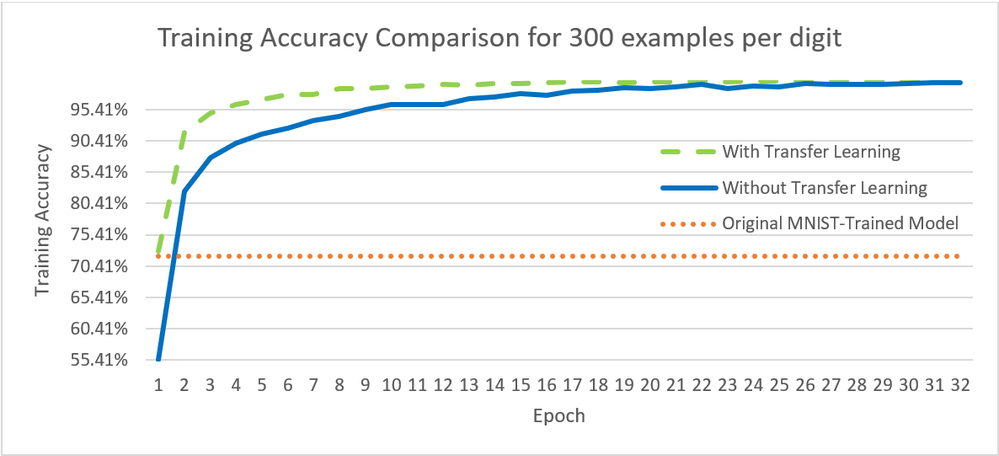

Transfer learning is one of the most important techniques in machine learning. It lets a model apply what it learned on one task to learn a new, related task more quickly and more accurately. The approach is most commonly used in natural language processing and image recognition. Even with transfer learning, however, you will not get very far without the right dataset.

This application note (AN) explains transfer learning and the importance of datasets in deep learning. The first part covers the theoretical background of both topics. The second part describes a use case based on the application from AN12603. It shows how to collect a dataset of handwritten digits that matches the input style of the handwritten digit recognition application. It then illustrates how transfer learning can take a model trained on the original MNIST dataset and retrain it on the smaller custom dataset collected in the use case.
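As a rough illustration of the idea, the toy sketch below freezes a "pretrained" feature extractor and trains only a small classifier head on a 20-example dataset. It is not the workflow from the application note: the `base_weights` values, the synthetic two-feature data, and the helper names `frozen_base`, `train_head`, and `predict` are all hypothetical stand-ins, chosen so that the example runs with the Python standard library alone. In a real setup, the frozen base would be the layers of a model trained on MNIST and the small dataset would be the custom handwritten digits.

```python
import math
import random


def frozen_base(x, base_weights):
    """Frozen feature extractor: one tanh layer whose weights are never updated."""
    return [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in base_weights]


def train_head(features, labels, epochs=200, lr=0.5):
    """Train only a logistic-regression head on top of the frozen features."""
    w = [0.0] * len(features[0])
    b = 0.0
    for _ in range(epochs):
        for f, y in zip(features, labels):
            z = sum(wi * fi for wi, fi in zip(w, f)) + b
            p = 1.0 / (1.0 + math.exp(-z))
            g = p - y  # gradient of the cross-entropy loss with respect to z
            w = [wi - lr * g * fi for wi, fi in zip(w, f)]
            b -= lr * g
    return w, b


def predict(x, base_weights, head):
    """Run the frozen base, then the trained head, and return class 0 or 1."""
    w, b = head
    f = frozen_base(x, base_weights)
    return 1 if sum(wi * fi for wi, fi in zip(w, f)) + b > 0 else 0


# Hypothetical stand-in for weights learned on a large source dataset.
base_weights = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [1.0, -1.0]]
base_snapshot = [row[:] for row in base_weights]

# Small synthetic target dataset (assumption): label is 1 when x0 + x1 > 0.
random.seed(0)
small_x = []
while len(small_x) < 20:
    a, b = random.uniform(-1, 1), random.uniform(-1, 1)
    if abs(a + b) > 0.2:  # keep a margin so the toy task stays easy
        small_x.append([a, b])
small_y = [1 if a + b > 0 else 0 for a, b in small_x]

# Transfer step: compute frozen features once, then fit only the head.
features = [frozen_base(x, base_weights) for x in small_x]
head = train_head(features, small_y)

correct = sum(predict(x, base_weights, head) == y for x, y in zip(small_x, small_y))
train_accuracy = correct / len(small_x)
print(f"training accuracy on the small dataset: {train_accuracy:.2f}")
```

Only the head's weights change during training; the base is reused as is, which is why so few examples are enough for this toy task. Fine-tuning some of the base layers at a low learning rate is a common variation that this sketch does not show.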

Finally, the AN shows that although handwritten digit recognition is a simple task for neural networks, it can still benefit from transfer learning. Training a model from scratch is slower and yields worse accuracy, especially when only a very small number of examples is available for training.

Application note URL: https://www.nxp.com/docs/en/application-note/AN12892.pdf